html_url | title | comments | body | comment_length | text | embeddings |

|---|---|---|---|---|---|---|

https://github.com/huggingface/datasets/issues/633 | Load large text file for LM pre-training resulting in OOM | This seems to be on the `transformers` library side.

If you have more information (pip env) or, even better, a Colab reproducing the error, we can investigate. | I tried to pretrain Longformer using transformers and datasets. But I got OOM issues with loading a large text file. My script is almost like this:

```python

from datasets import load_dataset

@dataclass

class DataCollatorForDatasetsLanguageModeling(DataCollatorForLanguageModeling):

"""

Data collator u... | 27 | Load large text file for LM pre-training resulting in OOM

I tried to pretrain Longformer using transformers and datasets. But I got OOM issues with loading a large text file. My script is almost like this:

```python

from datasets import load_dataset

@dataclass

class DataCollatorForDatasetsLanguageModeling(Dat... | [

-0.6339287758,

-0.4775367975,

0.0106936339,

0.2986355424,

0.3600475788,

-0.1518250853,

0.5567325354,

0.3738059103,

0.0108824177,

0.0107197072,

-0.1295929253,

-0.18279998,

-0.2669847012,

-0.1620898843,

-0.0323720984,

0.0232064184,

-0.0998212174,

0.1968754083,

-0.2772670686,

-0.0... |

https://github.com/huggingface/datasets/issues/633 | Load large text file for LM pre-training resulting in OOM | It seems to be solved with fresh versions of transformers. I tried to replicate the error with a fresh pip install of transformers and datasets on Colab, and the error no longer occurs. On Colab, memory stays stable at 5GB! (Y)

Edit: **Thanks for your great work**. Have a good day. | I tried to pretrain Longformer using transformers and datasets. But I got OOM issues with loading a large text file. My script is almost like this:

```python

from datasets import load_dataset

@dataclass

class DataCollatorForDatasetsLanguageModeling(DataCollatorForLanguageModeling):

"""

Data collator u... | 50 | Load large text file for LM pre-training resulting in OOM

I tried to pretrain Longformer using transformers and datasets. But I got OOM issues with loading a large text file. My script is almost like this:

```python

from datasets import load_dataset

@dataclass

class DataCollatorForDatasetsLanguageModeling(Dat... | [

-0.6339287758,

-0.4775367975,

0.0106936339,

0.2986355424,

0.3600475788,

-0.1518250853,

0.5567325354,

0.3738059103,

0.0108824177,

0.0107197072,

-0.1295929253,

-0.18279998,

-0.2669847012,

-0.1620898843,

-0.0323720984,

0.0232064184,

-0.0998212174,

0.1968754083,

-0.2772670686,

-0.0... |

https://github.com/huggingface/datasets/issues/633 | Load large text file for LM pre-training resulting in OOM | @gaceladri which versions of transformers and datasets are you using now? I want to try again. Thanks. | I tried to pretrain Longformer using transformers and datasets. But I got OOM issues with loading a large text file. My script is almost like this:

```python

from datasets import load_dataset

@dataclass

class DataCollatorForDatasetsLanguageModeling(DataCollatorForLanguageModeling):

"""

Data collator u... | 16 | Load large text file for LM pre-training resulting in OOM

I tried to pretrain Longformer using transformers and datasets. But I got OOM issues with loading a large text file. My script is almost like this:

```python

from datasets import load_dataset

@dataclass

class DataCollatorForDatasetsLanguageModeling(Dat... | [

-0.6339287758,

-0.4775367975,

0.0106936339,

0.2986355424,

0.3600475788,

-0.1518250853,

0.5567325354,

0.3738059103,

0.0108824177,

0.0107197072,

-0.1295929253,

-0.18279998,

-0.2669847012,

-0.1620898843,

-0.0323720984,

0.0232064184,

-0.0998212174,

0.1968754083,

-0.2772670686,

-0.0... |

https://github.com/huggingface/datasets/issues/633 | Load large text file for LM pre-training resulting in OOM | It's happening to me again. After 4 hours of pre-training, my RAM fills up and the kernel dies. I am using the latest transformers version as of today, 4.4.0, and the latest version of datasets, 1.2.1, both installed from master. The memory consumption keeps increasing. | I tried to pretrain Longformer using transformers and datasets. But I got OOM issues with loading a large text file. My script is almost like this:

```python

from datasets import load_dataset

@dataclass

class DataCollatorForDatasetsLanguageModeling(DataCollatorForLanguageModeling):

"""

Data collator u... | 45 | Load large text file for LM pre-training resulting in OOM

I tried to pretrain Longformer using transformers and datasets. But I got OOM issues with loading a large text file. My script is almost like this:

```python

from datasets import load_dataset

@dataclass

class DataCollatorForDatasetsLanguageModeling(Dat... | [

-0.6339287758,

-0.4775367975,

0.0106936339,

0.2986355424,

0.3600475788,

-0.1518250853,

0.5567325354,

0.3738059103,

0.0108824177,

0.0107197072,

-0.1295929253,

-0.18279998,

-0.2669847012,

-0.1620898843,

-0.0323720984,

0.0232064184,

-0.0998212174,

0.1968754083,

-0.2772670686,

-0.0... |
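
The comment in the row above reports memory that keeps growing over hours of training. One way to confirm that kind of growth and narrow down its source is to sample memory at regular steps. The following is a minimal sketch using Python's standard `tracemalloc` module; `measure_growth`, `leaky_step`, and `clean_step` are hypothetical names used only for illustration and are not part of `datasets` or `transformers`. Note that `tracemalloc` only sees allocations made through Python's allocator, so memory held by native code (for example Arrow buffers) would need a process-level measurement instead.

```python
import tracemalloc


def measure_growth(step_fn, n_steps, sample_every=1):
    """Run step_fn n_steps times and record traced Python memory (bytes).

    Returns a list of (step, current_bytes) samples. A steadily rising
    series suggests that something keeps references alive between steps.
    """
    tracemalloc.start()
    samples = []
    try:
        for step in range(n_steps):
            step_fn(step)
            if step % sample_every == 0:
                current, _peak = tracemalloc.get_traced_memory()
                samples.append((step, current))
    finally:
        tracemalloc.stop()
    return samples


# Hypothetical stand-ins for a training step (assumptions, not real training code).
_leak = []


def leaky_step(step):
    # Keeps every buffer alive, so traced memory grows with each step.
    _leak.append(bytearray(100_000))


def clean_step(step):
    # The buffer is released when the function returns.
    _ = bytearray(100_000)


leaky = measure_growth(leaky_step, 20)
clean = measure_growth(clean_step, 20)
print("leaky growth (bytes):", leaky[-1][1] - leaky[0][1])
print("clean growth (bytes):", clean[-1][1] - clean[0][1])
```

In a real run, `step_fn` would wrap one training or data-loading step, and comparing the series with and without a suspected component can help decide where to look next.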

https://github.com/huggingface/datasets/issues/633 | Load large text file for LM pre-training resulting in OOM | Thanks for the investigation @gaceladri

Apparently this happens when `num_workers>0` and has to do with objects being copied-on-write.

Did you try setting num_workers to 0 @gaceladri ?

If the issue doesn't happen with `num_workers=0` then this would confirm that it's indeed related to this python/pytorch issue.

... | I tried to pretrain Longformer using transformers and datasets. But I got OOM issues with loading a large text file. My script is almost like this:

```python

from datasets import load_dataset

@dataclass

class DataCollatorForDatasetsLanguageModeling(DataCollatorForLanguageModeling):

"""

Data collator u... | 114 | Load large text file for LM pre-training resulting in OOM

I tried to pretrain Longformer using transformers and datasets. But I got OOM issues with loading a large text file. My script is almost like this:

```python

from datasets import load_dataset

@dataclass

class DataCollatorForDatasetsLanguageModeling(Dat... | [

-0.6339287758,

-0.4775367975,

0.0106936339,

0.2986355424,

0.3600475788,

-0.1518250853,

0.5567325354,

0.3738059103,

0.0108824177,

0.0107197072,

-0.1295929253,

-0.18279998,

-0.2669847012,

-0.1620898843,

-0.0323720984,

0.0232064184,

-0.0998212174,

0.1968754083,

-0.2772670686,

-0.0... |

https://github.com/huggingface/datasets/issues/633 | Load large text file for LM pre-training resulting in OOM | Hmmm so this might come from another issue...

Since it doesn't seem to be related to multiprocessing it should be easier to investigate though.

Do you have some ideas @gaceladri ? | I tried to pretrain Longformer using transformers and datasets. But I got OOM issues with loading a large text file. My script is almost like this:

```python

from datasets import load_dataset

@dataclass

class DataCollatorForDatasetsLanguageModeling(DataCollatorForLanguageModeling):

"""

Data collator u... | 31 | Load large text file for LM pre-training resulting in OOM

I tried to pretrain Longformer using transformers and datasets. But I got OOM issues with loading a large text file. My script is almost like this:

```python

from datasets import load_dataset

@dataclass

class DataCollatorForDatasetsLanguageModeling(Dat... | [

-0.6339287758,

-0.4775367975,

0.0106936339,

0.2986355424,

0.3600475788,

-0.1518250853,

0.5567325354,

0.3738059103,

0.0108824177,

0.0107197072,

-0.1295929253,

-0.18279998,

-0.2669847012,

-0.1620898843,

-0.0323720984,

0.0232064184,

-0.0998212174,

0.1968754083,

-0.2772670686,

-0.0... |

https://github.com/huggingface/datasets/issues/633 | Load large text file for LM pre-training resulting in OOM | @lhoestq I took a quick look at a previously spotted bug in my env, in wandb /sdk/interface/interface.py, because sometimes when I load the dataset I get a multiprocessing error at line 510 in wandb...interface.py

This bug is reported here https://github.com/huggingface/datasets/issues/847

```

--------------------------... | I tried to pretrain Longformer using transformers and datasets. But I got OOM issues with loading a large text file. My script is almost like this:

```python

from datasets import load_dataset

@dataclass

class DataCollatorForDatasetsLanguageModeling(DataCollatorForLanguageModeling):

"""

Data collator u... | 396 | Load large text file for LM pre-training resulting in OOM

I tried to pretrain Longformer using transformers and datasets. But I got OOM issues with loading a large text file. My script is almost like this:

```python

from datasets import load_dataset

@dataclass

class DataCollatorForDatasetsLanguageModeling(Dat... | [

-0.6339287758,

-0.4775367975,

0.0106936339,

0.2986355424,

0.3600475788,

-0.1518250853,

0.5567325354,

0.3738059103,

0.0108824177,

0.0107197072,

-0.1295929253,

-0.18279998,

-0.2669847012,

-0.1620898843,

-0.0323720984,

0.0232064184,

-0.0998212174,

0.1968754083,

-0.2772670686,

-0.0... |

https://github.com/huggingface/datasets/issues/633 | Load large text file for LM pre-training resulting in OOM | @lhoestq But despite this, I got lost in the [class Dataset()](https://huggingface.co/docs/datasets/_modules/datasets/arrow_dataset.html#Dataset) while reading the pyarrow files.

Edit: but you are probably right that it is not related to multiprocessing, since it keeps happening when `num_workers=0` | I tried to pretrain Longformer using transformers and datasets. But I got OOM issues with loading a large text file. My script is almost like this:

```python

from datasets import load_dataset

@dataclass

class DataCollatorForDatasetsLanguageModeling(DataCollatorForLanguageModeling):

"""

Data collator u... | 37 | Load large text file for LM pre-training resulting in OOM

I tried to pretrain Longformer using transformers and datasets. But I got OOM issues with loading a large text file. My script is almost like this:

```python

from datasets import load_dataset

@dataclass

class DataCollatorForDatasetsLanguageModeling(Dat... | [

-0.6339287758,

-0.4775367975,

0.0106936339,

0.2986355424,

0.3600475788,

-0.1518250853,

0.5567325354,

0.3738059103,

0.0108824177,

0.0107197072,

-0.1295929253,

-0.18279998,

-0.2669847012,

-0.1620898843,

-0.0323720984,

0.0232064184,

-0.0998212174,

0.1968754083,

-0.2772670686,

-0.0... |

https://github.com/huggingface/datasets/issues/633 | Load large text file for LM pre-training resulting in OOM | Or maybe wandb uses multiprocessing ? One process for wandb logging and one for actual training ? If this is the case then even setting `num_workers=0` would cause the process to be forked for wandb and therefore cause the memory issue. | I tried to pretrain Longformer using transformers and datasets. But I got OOM issues with loading a large text file. My script is almost like this:

```python

from datasets import load_dataset

@dataclass

class DataCollatorForDatasetsLanguageModeling(DataCollatorForLanguageModeling):

"""

Data collator u... | 41 | Load large text file for LM pre-training resulting in OOM

I tried to pretrain Longformer using transformers and datasets. But I got OOM issues with loading a large text file. My script is almost like this:

```python

from datasets import load_dataset

@dataclass

class DataCollatorForDatasetsLanguageModeling(Dat... | [

-0.6339287758,

-0.4775367975,

0.0106936339,

0.2986355424,

0.3600475788,

-0.1518250853,

0.5567325354,

0.3738059103,

0.0108824177,

0.0107197072,

-0.1295929253,

-0.18279998,

-0.2669847012,

-0.1620898843,

-0.0323720984,

0.0232064184,

-0.0998212174,

0.1968754083,

-0.2772670686,

-0.0... |

https://github.com/huggingface/datasets/issues/633 | Load large text file for LM pre-training resulting in OOM | @lhoestq could be, but if we set wandb to false this should not happen. I am going to try. | I tried to pretrain Longformer using transformers and datasets. But I got OOM issues with loading a large text file. My script is almost like this:

```python

from datasets import load_dataset

@dataclass

class DataCollatorForDatasetsLanguageModeling(DataCollatorForLanguageModeling):

"""

Data collator u... | 19 | Load large text file for LM pre-training resulting in OOM

I tried to pretrain Longformer using transformers and datasets. But I got OOM issues with loading a large text file. My script is almost like this:

```python

from datasets import load_dataset

@dataclass

class DataCollatorForDatasetsLanguageModeling(Dat... | [

-0.6339287758,

-0.4775367975,

0.0106936339,

0.2986355424,

0.3600475788,

-0.1518250853,

0.5567325354,

0.3738059103,

0.0108824177,

0.0107197072,

-0.1295929253,

-0.18279998,

-0.2669847012,

-0.1620898843,

-0.0323720984,

0.0232064184,

-0.0998212174,

0.1968754083,

-0.2772670686,

-0.0... |

https://github.com/huggingface/datasets/issues/633 | Load large text file for LM pre-training resulting in OOM | @lhoestq It keeps happening. I have uninstalled wandb from my env, set `%env WANDB_DISABLED=true` in my notebook, and commented out this function:

```

def get_available_reporting_integrations():

integrations = []

if is_azureml_available():

integrations.append("azure_ml")

if is_comet_available():

... | I tried to pretrain Longformer using transformers and datasets. But I got OOM issues with loading a large text file. My script is almost like this:

```python

from datasets import load_dataset

@dataclass

class DataCollatorForDatasetsLanguageModeling(DataCollatorForLanguageModeling):

"""

Data collator u... | 65 | Load large text file for LM pre-training resulting in OOM

I tried to pretrain Longformer using transformers and datasets. But I got OOM issues with loading a large text file. My script is almost like this:

```python

from datasets import load_dataset

@dataclass

class DataCollatorForDatasetsLanguageModeling(Dat... | [

-0.6339287758,

-0.4775367975,

0.0106936339,

0.2986355424,

0.3600475788,

-0.1518250853,

0.5567325354,

0.3738059103,

0.0108824177,

0.0107197072,

-0.1295929253,

-0.18279998,

-0.2669847012,

-0.1620898843,

-0.0323720984,

0.0232064184,

-0.0998212174,

0.1968754083,

-0.2772670686,

-0.0... |
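
The row above disables wandb through the notebook magic `%env WANDB_DISABLED=true`. Outside a notebook, the same environment variable can be set from Python before the training libraries are imported. This is a minimal sketch under that assumption; `wandb_disabled` is a hypothetical helper for checking the flag, and the set of values it accepts is an assumption, not the exact rule `transformers` applies.

```python
import os

# Set the flag before importing transformers or building a Trainer,
# so that any integration check done at import time can see it.
os.environ["WANDB_DISABLED"] = "true"


def wandb_disabled() -> bool:
    """Hypothetical helper: report whether the flag looks enabled."""
    return os.environ.get("WANDB_DISABLED", "").strip().lower() in {"1", "true", "yes", "on"}


print("wandb disabled:", wandb_disabled())
```

Setting the variable in the launching shell has the same effect and avoids depending on import order.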

https://github.com/huggingface/datasets/issues/633 | Load large text file for LM pre-training resulting in OOM | Thanks for checking @gaceladri . Let's investigate the single process setting then.

If you have some sort of colab notebook with a minimal code example that shows this behavior feel free to share it @gaceladri so that we can play around with it to find what causes this. Otherwise I'll probably try to reproduce on my s... | I tried to pretrain Longformer using transformers and datasets. But I got OOM issues with loading a large text file. My script is almost like this:

```python

from datasets import load_dataset

@dataclass

class DataCollatorForDatasetsLanguageModeling(DataCollatorForLanguageModeling):

"""

Data collator u... | 60 | Load large text file for LM pre-training resulting in OOM

I tried to pretrain Longformer using transformers and datasets. But I got OOM issues with loading a large text file. My script is almost like this:

```python

from datasets import load_dataset

@dataclass

class DataCollatorForDatasetsLanguageModeling(Dat... | [

-0.6339287758,

-0.4775367975,

0.0106936339,

0.2986355424,

0.3600475788,

-0.1518250853,

0.5567325354,

0.3738059103,

0.0108824177,

0.0107197072,

-0.1295929253,

-0.18279998,

-0.2669847012,

-0.1620898843,

-0.0323720984,

0.0232064184,

-0.0998212174,

0.1968754083,

-0.2772670686,

-0.0... |

https://github.com/huggingface/datasets/issues/633 | Load large text file for LM pre-training resulting in OOM | @lhoestq Sure, here it is: https://colab.research.google.com/drive/1ba09ZOpyHGAOQLcsxiQAHRXl10qnMU5o?usp=sharing Let me know if the link works and whether it reproduces the issue. For me it does: once training starts, RAM usage keeps increasing.

Let me know. Thanks! | I tried to pretrain Longformer using transformers and datasets. But I got OOM issues with loading a large text file. My script is almost like this:

```python

from datasets import load_dataset

@dataclass

class DataCollatorForDatasetsLanguageModeling(DataCollatorForLanguageModeling):

"""

Data collator u... | 39 | Load large text file for LM pre-training resulting in OOM

I tried to pretrain Longformer using transformers and datasets. But I got OOM issues with loading a large text file. My script is almost like this:

```python

from datasets import load_dataset

@dataclass

class DataCollatorForDatasetsLanguageModeling(Dat... | [

-0.6339287758,

-0.4775367975,

0.0106936339,

0.2986355424,

0.3600475788,

-0.1518250853,

0.5567325354,

0.3738059103,

0.0108824177,

0.0107197072,

-0.1295929253,

-0.18279998,

-0.2669847012,

-0.1620898843,

-0.0323720984,

0.0232064184,

-0.0998212174,

0.1968754083,

-0.2772670686,

-0.0... |

https://github.com/huggingface/datasets/issues/633 | Load large text file for LM pre-training resulting in OOM | Could the bug be coming from tokenizers?

I got this warning in the terminal from my Jupyter notebook:

```

huggingface/tokenizers: The current process just got forked, after parallelism has already been used. Disabling parallelism to avoid deadlocks...

To disable this warning, you can either:

- Avoid using `to... | I tried to pretrain Longformer using transformers and datasets. But I got OOM issues with loading a large text file. My script is almost like this:

```python

from datasets import load_dataset

@dataclass

class DataCollatorForDatasetsLanguageModeling(DataCollatorForLanguageModeling):

"""

Data collator u... | 63 | Load large text file for LM pre-training resulting in OOM

I tried to pretrain Longformer using transformers and datasets. But I got OOM issues with loading a large text file. My script is almost like this:

```python

from datasets import load_dataset

@dataclass

class DataCollatorForDatasetsLanguageModeling(Dat... | [

-0.6339287758,

-0.4775367975,

0.0106936339,

0.2986355424,

0.3600475788,

-0.1518250853,

0.5567325354,

0.3738059103,

0.0108824177,

0.0107197072,

-0.1295929253,

-0.18279998,

-0.2669847012,

-0.1620898843,

-0.0323720984,

0.0232064184,

-0.0998212174,

0.1968754083,

-0.2772670686,

-0.0... |
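
The warning quoted in the row above comes from `huggingface/tokenizers` detecting a fork after its thread pool has been used. A common way to silence it is to set the `TOKENIZERS_PARALLELISM` environment variable explicitly before any tokenizer is used. The sketch below shows only that step; it is not presented as a fix for the memory growth discussed in this thread, and whether parallelism should be off for a given workload is an assumption to revisit.

```python
import os

# Choose the setting explicitly before any tokenizer is created or used,
# and before worker processes are forked (for example by a data loader).
os.environ["TOKENIZERS_PARALLELISM"] = "false"

print("TOKENIZERS_PARALLELISM =", os.environ["TOKENIZERS_PARALLELISM"])
```

With the value set to `false`, tokenization runs without the library's internal thread pool, which trades some speed for predictable behavior across forks.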

https://github.com/huggingface/datasets/issues/633 | Load large text file for LM pre-training resulting in OOM | I've never experienced memory issues with tokenizers so I don't know

Cc @n1t0 are you aware of any issue that would cause memory to keep increasing when the tokenizer is used in the Data Collator for language modeling ? | I tried to pretrain Longformer using transformers and datasets. But I got OOM issues with loading a large text file. My script is almost like this:

```python

from datasets import load_dataset

@dataclass

class DataCollatorForDatasetsLanguageModeling(DataCollatorForLanguageModeling):

"""

Data collator u... | 39 | Load large text file for LM pre-training resulting in OOM

I tried to pretrain Longformer using transformers and datasets. But I got OOM issues with loading a large text file. My script is almost like this:

```python

from datasets import load_dataset

@dataclass

class DataCollatorForDatasetsLanguageModeling(Dat... | [

-0.6339287758,

-0.4775367975,

0.0106936339,

0.2986355424,

0.3600475788,

-0.1518250853,

0.5567325354,

0.3738059103,

0.0108824177,

0.0107197072,

-0.1295929253,

-0.18279998,

-0.2669847012,

-0.1620898843,

-0.0323720984,

0.0232064184,

-0.0998212174,

0.1968754083,

-0.2772670686,

-0.0... |

https://github.com/huggingface/datasets/issues/633 | Load large text file for LM pre-training resulting in OOM | @lhoestq Thanks for pinging n1t0. Just to clarify: that warning appeared while fine-tuning, without a collator:

```

from datasets import load_dataset, load_metric

import numpy as np

GLUE_TASKS = [

"cola",

"mnli",

"mnli-mm",

"mrpc",

"qnli",

"qqp",

"rte",

"sst2",

"stsb",

... | I tried to pretrain Longformer using transformers and datasets. But I got OOM issues with loading a large text file. My script is almost like this:

```python

from datasets import load_dataset

@dataclass

class DataCollatorForDatasetsLanguageModeling(DataCollatorForLanguageModeling):

"""

Data collator u... | 468 | Load large text file for LM pre-training resulting in OOM

I tried to pretrain Longformer using transformers and datasets. But I got OOM issues with loading a large text file. My script is almost like this:

```python

from datasets import load_dataset

@dataclass

class DataCollatorForDatasetsLanguageModeling(Dat... | [

-0.6339287758,

-0.4775367975,

0.0106936339,

0.2986355424,

0.3600475788,

-0.1518250853,

0.5567325354,

0.3738059103,

0.0108824177,

0.0107197072,

-0.1295929253,

-0.18279998,

-0.2669847012,

-0.1620898843,

-0.0323720984,

0.0232064184,

-0.0998212174,

0.1968754083,

-0.2772670686,

-0.0... |

https://github.com/huggingface/datasets/issues/633 | Load large text file for LM pre-training resulting in OOM | Thanks for sharing your results.

So you still had the issue for fine-tuning ?

And the issue still appears with a bare-bone dataset from an arrow file... | I tried to pretrain Longformer using transformers and datasets. But I got OOM issues with loading a large text file. My script is almost like this:

```python

from datasets import load_dataset

@dataclass

class DataCollatorForDatasetsLanguageModeling(DataCollatorForLanguageModeling):

"""

Data collator u... | 27 | Load large text file for LM pre-training resulting in OOM

I tried to pretrain Longformer using transformers and datasets. But I got OOM issues with loading a large text file. My script is almost like this:

```python

from datasets import load_dataset

@dataclass

class DataCollatorForDatasetsLanguageModeling(Dat... | [

-0.6339287758,

-0.4775367975,

0.0106936339,

0.2986355424,

0.3600475788,

-0.1518250853,

0.5567325354,

0.3738059103,

0.0108824177,

0.0107197072,

-0.1295929253,

-0.18279998,

-0.2669847012,

-0.1620898843,

-0.0323720984,

0.0232064184,

-0.0998212174,

0.1968754083,

-0.2772670686,

-0.0... |

https://github.com/huggingface/datasets/issues/633 | Load large text file for LM pre-training resulting in OOM | Yes, in both cases: fine-tuning a pre-trained model and pre-training from scratch with a local, already pre-processed arrow file. | I tried to pretrain Longformer using transformers and datasets. But I got OOM issues with loading a large text file. My script is almost like this:

```python

from datasets import load_dataset

@dataclass

class DataCollatorForDatasetsLanguageModeling(DataCollatorForLanguageModeling):

"""

Data collator u... | 19 | Load large text file for LM pre-training resulting in OOM

I tried to pretrain Longformer using transformers and datasets. But I got OOM issues with loading a large text file. My script is almost like this:

```python

from datasets import load_dataset

@dataclass

class DataCollatorForDatasetsLanguageModeling(Dat... | [

-0.6339287758,

-0.4775367975,

0.0106936339,

0.2986355424,

0.3600475788,

-0.1518250853,

0.5567325354,

0.3738059103,

0.0108824177,

0.0107197072,

-0.1295929253,

-0.18279998,

-0.2669847012,

-0.1620898843,

-0.0323720984,

0.0232064184,

-0.0998212174,

0.1968754083,

-0.2772670686,

-0.0... |

https://github.com/huggingface/datasets/issues/630 | Text dataset not working with large files | Basically 59 UTF-8 txt files of ~600MB each,

contents like ```안녕하세요, 이것은 예제로 한번 말해보는 텍스트입니다. 그냥 이렇다고요.<|endoftext|>\n```

Also, it gets stuck for a very long time at ```Testing the mapped function outputs```, for more than 12 hours (currently ongoing) | ```

Traceback (most recent call last):

File "examples/language-modeling/run_language_modeling.py", line 333, in <module>

main()

File "examples/language-modeling/run_language_modeling.py", line 262, in main

get_dataset(data_args, tokenizer=tokenizer, cache_dir=model_args.cache_dir) if training_args.do_t... | 36 | Text dataset not working with large files

```

Traceback (most recent call last):

File "examples/language-modeling/run_language_modeling.py", line 333, in <module>

main()

File "examples/language-modeling/run_language_modeling.py", line 262, in main

get_dataset(data_args, tokenizer=tokenizer, cache_dir... | [

-0.4925668538,

-0.2310243845,

-0.119864285,

0.2836015522,

0.4663561881,

-0.0735015199,

0.3053680658,

0.5961021781,

-0.1138257012,

0.0461648479,

-0.0624196492,

-0.030405255,

-0.1033420116,

0.3101792932,

-0.106213443,

-0.0389503799,

-0.2278844565,

0.1130641326,

-0.0946917832,

0.0... |

https://github.com/huggingface/datasets/issues/630 | Text dataset not working with large files | It gets stuck while doing `.map()` ? Are you using multiprocessing ?

If you could provide a code snippet, it would be very useful | ```

Traceback (most recent call last):

File "examples/language-modeling/run_language_modeling.py", line 333, in <module>

main()

File "examples/language-modeling/run_language_modeling.py", line 262, in main

get_dataset(data_args, tokenizer=tokenizer, cache_dir=model_args.cache_dir) if training_args.do_t... | 24 | Text dataset not working with large files

```

Traceback (most recent call last):

File "examples/language-modeling/run_language_modeling.py", line 333, in <module>

main()

File "examples/language-modeling/run_language_modeling.py", line 262, in main

get_dataset(data_args, tokenizer=tokenizer, cache_dir... | [

-0.4925668538,

-0.2310243845,

-0.119864285,

0.2836015522,

0.4663561881,

-0.0735015199,

0.3053680658,

0.5961021781,

-0.1138257012,

0.0461648479,

-0.0624196492,

-0.030405255,

-0.1033420116,

0.3101792932,

-0.106213443,

-0.0389503799,

-0.2278844565,

0.1130641326,

-0.0946917832,

0.0... |

https://github.com/huggingface/datasets/issues/630 | Text dataset not working with large files | From transformers/examples/language-modeling/run-language-modeling.py :

```

def get_dataset(

args: DataTrainingArguments,

tokenizer: PreTrainedTokenizer,

evaluate: bool = False,

cache_dir: Optional[str] = None,

):

file_path = args.eval_data_file if evaluate else args.train_data_file

if ... | ```

Traceback (most recent call last):

File "examples/language-modeling/run_language_modeling.py", line 333, in <module>

main()

File "examples/language-modeling/run_language_modeling.py", line 262, in main

get_dataset(data_args, tokenizer=tokenizer, cache_dir=model_args.cache_dir) if training_args.do_t... | 71 | Text dataset not working with large files

```

Traceback (most recent call last):

File "examples/language-modeling/run_language_modeling.py", line 333, in <module>

main()

File "examples/language-modeling/run_language_modeling.py", line 262, in main

get_dataset(data_args, tokenizer=tokenizer, cache_dir... | [

-0.4925668538,

-0.2310243845,

-0.119864285,

0.2836015522,

0.4663561881,

-0.0735015199,

0.3053680658,

0.5961021781,

-0.1138257012,

0.0461648479,

-0.0624196492,

-0.030405255,

-0.1033420116,

0.3101792932,

-0.106213443,

-0.0389503799,

-0.2278844565,

0.1130641326,

-0.0946917832,

0.0... |

https://github.com/huggingface/datasets/issues/630 | Text dataset not working with large files | I am not able to reproduce on my side :/

Could you send the version of `datasets` and `pyarrow` you're using ?

Could you try to update the lib and try again ?

Or do you think you could try to reproduce it on google colab ? | ```

Traceback (most recent call last):

File "examples/language-modeling/run_language_modeling.py", line 333, in <module>

main()

File "examples/language-modeling/run_language_modeling.py", line 262, in main

get_dataset(data_args, tokenizer=tokenizer, cache_dir=model_args.cache_dir) if training_args.do_t... | 47 | Text dataset not working with large files

```

Traceback (most recent call last):

File "examples/language-modeling/run_language_modeling.py", line 333, in <module>

main()

File "examples/language-modeling/run_language_modeling.py", line 262, in main

get_dataset(data_args, tokenizer=tokenizer, cache_dir... | [

-0.4925668538,

-0.2310243845,

-0.119864285,

0.2836015522,

0.4663561881,

-0.0735015199,

0.3053680658,

0.5961021781,

-0.1138257012,

0.0461648479,

-0.0624196492,

-0.030405255,

-0.1033420116,

0.3101792932,

-0.106213443,

-0.0389503799,

-0.2278844565,

0.1130641326,

-0.0946917832,

0.0... |

https://github.com/huggingface/datasets/issues/630 | Text dataset not working with large files | Huh, weird. It's fixed on my side too.

But now ```Caching processed dataset``` is taking forever - how can I disable it? Any flags? | ```

Traceback (most recent call last):

File "examples/language-modeling/run_language_modeling.py", line 333, in <module>

main()

File "examples/language-modeling/run_language_modeling.py", line 262, in main

get_dataset(data_args, tokenizer=tokenizer, cache_dir=model_args.cache_dir) if training_args.do_t... | 24 | Text dataset not working with large files

```

Traceback (most recent call last):

File "examples/language-modeling/run_language_modeling.py", line 333, in <module>

main()

File "examples/language-modeling/run_language_modeling.py", line 262, in main

get_dataset(data_args, tokenizer=tokenizer, cache_dir... | [

-0.4925668538,

-0.2310243845,

-0.119864285,

0.2836015522,

0.4663561881,

-0.0735015199,

0.3053680658,

0.5961021781,

-0.1138257012,

0.0461648479,

-0.0624196492,

-0.030405255,

-0.1033420116,

0.3101792932,

-0.106213443,

-0.0389503799,

-0.2278844565,

0.1130641326,

-0.0946917832,

0.0... |

https://github.com/huggingface/datasets/issues/630 | Text dataset not working with large files | Right after `Caching processed dataset`, your function is applied to the dataset and there's a progress bar that shows how much time is left. How much time does it take for you ?

Also caching isn't supposed to slow down your processing. But if you still want to disable it you can do `.map(..., load_from_cache_file=F... | ```

Traceback (most recent call last):

File "examples/language-modeling/run_language_modeling.py", line 333, in <module>

main()

File "examples/language-modeling/run_language_modeling.py", line 262, in main

get_dataset(data_args, tokenizer=tokenizer, cache_dir=model_args.cache_dir) if training_args.do_t... | 55 | Text dataset not working with large files

```

Traceback (most recent call last):

File "examples/language-modeling/run_language_modeling.py", line 333, in <module>

main()

File "examples/language-modeling/run_language_modeling.py", line 262, in main

get_dataset(data_args, tokenizer=tokenizer, cache_dir... | [

-0.4925668538,

-0.2310243845,

-0.119864285,

0.2836015522,

0.4663561881,

-0.0735015199,

0.3053680658,

0.5961021781,

-0.1138257012,

0.0461648479,

-0.0624196492,

-0.030405255,

-0.1033420116,

0.3101792932,

-0.106213443,

-0.0389503799,

-0.2278844565,

0.1130641326,

-0.0946917832,

0.0... |

https://github.com/huggingface/datasets/issues/630 | Text dataset not working with large files | Ah, it's much faster now (takes around 15~20 min).

BTW, is there any way to set the default tensor output to plain tensors with distributed training? The ragged tensors are incompatible with TPUStrategy :( | ```

Traceback (most recent call last):

File "examples/language-modeling/run_language_modeling.py", line 333, in <module>

main()

File "examples/language-modeling/run_language_modeling.py", line 262, in main

get_dataset(data_args, tokenizer=tokenizer, cache_dir=model_args.cache_dir) if training_args.do_t... | 29 | Text dataset not working with large files

```

Traceback (most recent call last):

File "examples/language-modeling/run_language_modeling.py", line 333, in <module>

main()

File "examples/language-modeling/run_language_modeling.py", line 262, in main

get_dataset(data_args, tokenizer=tokenizer, cache_dir... | [

-0.4925668538,

-0.2310243845,

-0.119864285,

0.2836015522,

0.4663561881,

-0.0735015199,

0.3053680658,

0.5961021781,

-0.1138257012,

0.0461648479,

-0.0624196492,

-0.030405255,

-0.1033420116,

0.3101792932,

-0.106213443,

-0.0389503799,

-0.2278844565,

0.1130641326,

-0.0946917832,

0.0... |

https://github.com/huggingface/datasets/issues/630 | Text dataset not working with large files | > Ah, it’s much faster now(Takes around 15~20min).

Glad to see that it's faster now. What did you change exactly ?

> BTW, any way to set default tensor output as plain tensors with distributed training? The ragged tensors are incompatible with tpustrategy :(

Oh I didn't know about that. Feel free to open an is... | ```

Traceback (most recent call last):

File "examples/language-modeling/run_language_modeling.py", line 333, in <module>

main()

File "examples/language-modeling/run_language_modeling.py", line 262, in main

get_dataset(data_args, tokenizer=tokenizer, cache_dir=model_args.cache_dir) if training_args.do_t... | 92 | Text dataset not working with large files

```

Traceback (most recent call last):

File "examples/language-modeling/run_language_modeling.py", line 333, in <module>

main()

File "examples/language-modeling/run_language_modeling.py", line 262, in main

get_dataset(data_args, tokenizer=tokenizer, cache_dir... | [

-0.4925668538,

-0.2310243845,

-0.119864285,

0.2836015522,

0.4663561881,

-0.0735015199,

0.3053680658,

0.5961021781,

-0.1138257012,

0.0461648479,

-0.0624196492,

-0.030405255,

-0.1033420116,

0.3101792932,

-0.106213443,

-0.0389503799,

-0.2278844565,

0.1130641326,

-0.0946917832,

0.0... |

https://github.com/huggingface/datasets/issues/630 | Text dataset not working with large files | >>> Glad to see that it's faster now. What did you change exactly ?

I don't know, it just worked...? Sorry I couldn't be more helpful.

Setting with numpy array is a great idea! Thanks. | ```

Traceback (most recent call last):

File "examples/language-modeling/run_language_modeling.py", line 333, in <module>

main()

File "examples/language-modeling/run_language_modeling.py", line 262, in main

get_dataset(data_args, tokenizer=tokenizer, cache_dir=model_args.cache_dir) if training_args.do_t... | 35 | Text dataset not working with large files

```

Traceback (most recent call last):

File "examples/language-modeling/run_language_modeling.py", line 333, in <module>

main()

File "examples/language-modeling/run_language_modeling.py", line 262, in main

get_dataset(data_args, tokenizer=tokenizer, cache_dir... | [

-0.4925668538,

-0.2310243845,

-0.119864285,

0.2836015522,

0.4663561881,

-0.0735015199,

0.3053680658,

0.5961021781,

-0.1138257012,

0.0461648479,

-0.0624196492,

-0.030405255,

-0.1033420116,

0.3101792932,

-0.106213443,

-0.0389503799,

-0.2278844565,

0.1130641326,

-0.0946917832,

0.0... |

https://github.com/huggingface/datasets/issues/625 | dtype of tensors should be preserved | Indeed we convert tensors to list to be able to write in arrow format. Because of this conversion we lose the dtype information. We should add the dtype detection when we do type inference. However it would require a bit of refactoring since currently the conversion happens before the type inference..

And then for y... | After switching to `datasets` my model just broke. After a weekend of debugging, the issue was that my model could not handle the double that the Dataset provided, as it expected a float (but didn't give a warning, which seems a [PyTorch issue](https://discuss.pytorch.org/t/is-it-required-that-input-and-hidden-for-gru-... | 156 | dtype of tensors should be preserved

After switching to `datasets` my model just broke. After a weekend of debugging, the issue was that my model could not handle the double that the Dataset provided, as it expected a float (but didn't give a warning, which seems a [PyTorch issue](https://discuss.pytorch.org/t/is-it-... | [

-0.1134336665,

-0.221115008,

-0.0097108139,

0.2073050439,

0.5532283783,

0.1730132401,

0.5313699245,

0.1225807741,

0.1504824311,

-0.0665397719,

-0.0843991637,

0.2457148433,

-0.117551893,

-0.1751451641,

0.1026155949,

-0.2025275528,

0.2282531559,

-0.0652568489,

-0.1441735029,

-0.2... |

https://github.com/huggingface/datasets/issues/625 | dtype of tensors should be preserved | If the arrow format is basically lists, why is the intermediate step to numpy necessary? I am a bit confused about that part.

Thanks for your suggestion. As I have currently implemented this, I cast to torch.Tensor in my collate_fn to save disk space (so I do not have to save padded tensors to max_len but can pad up... | After switching to `datasets` my model just broke. After a weekend of debugging, the issue was that my model could not handle the double that the Dataset provided, as it expected a float (but didn't give a warning, which seems a [PyTorch issue](https://discuss.pytorch.org/t/is-it-required-that-input-and-hidden-for-gru-... | 89 | dtype of tensors should be preserved

After switching to `datasets` my model just broke. After a weekend of debugging, the issue was that my model could not handle the double that the Dataset provided, as it expected a float (but didn't give a warning, which seems a [PyTorch issue](https://discuss.pytorch.org/t/is-it-... | [

-0.1134336665,

-0.221115008,

-0.0097108139,

0.2073050439,

0.5532283783,

0.1730132401,

0.5313699245,

0.1225807741,

0.1504824311,

-0.0665397719,

-0.0843991637,

0.2457148433,

-0.117551893,

-0.1751451641,

0.1026155949,

-0.2025275528,

0.2282531559,

-0.0652568489,

-0.1441735029,

-0.2... |

https://github.com/huggingface/datasets/issues/625 | dtype of tensors should be preserved | I'm glad you managed to figure something out :)

Casting from arrow to numpy can be 100x faster than casting from arrow to list.

This is because arrow has an integration with numpy that allows it to instantiate numpy arrays with zero-copy from arrow.

On the other hand to create python lists it is slow since it has ... | After switching to `datasets` my model just broke. After a weekend of debugging, the issue was that my model could not handle the double that the Dataset provided, as it expected a float (but didn't give a warning, which seems a [PyTorch issue](https://discuss.pytorch.org/t/is-it-required-that-input-and-hidden-for-gru-... | 70 | dtype of tensors should be preserved

After switching to `datasets` my model just broke. After a weekend of debugging, the issue was that my model could not handle the double that the Dataset provided, as it expected a float (but didn't give a warning, which seems a [PyTorch issue](https://discuss.pytorch.org/t/is-it-... | [

-0.1134336665,

-0.221115008,

-0.0097108139,

0.2073050439,

0.5532283783,

0.1730132401,

0.5313699245,

0.1225807741,

0.1504824311,

-0.0665397719,

-0.0843991637,

0.2457148433,

-0.117551893,

-0.1751451641,

0.1026155949,

-0.2025275528,

0.2282531559,

-0.0652568489,

-0.1441735029,

-0.2... |

https://github.com/huggingface/datasets/issues/625 | dtype of tensors should be preserved | I encountered a similar issue: `datasets` converted my float numpy array to `torch.float64` tensors, while many PyTorch operations require `torch.float32` inputs and it's very troublesome.

I tried @lhoestq 's solution, but since it's mixed with the preprocess function, it's not very intuitive.

I just want to sh... | After switching to `datasets` my model just broke. After a weekend of debugging, the issue was that my model could not handle the double that the Dataset provided, as it expected a float (but didn't give a warning, which seems a [PyTorch issue](https://discuss.pytorch.org/t/is-it-required-that-input-and-hidden-for-gru-... | 96 | dtype of tensors should be preserved

After switching to `datasets` my model just broke. After a weekend of debugging, the issue was that my model could not handle the double that the Dataset provided, as it expected a float (but didn't give a warning, which seems a [PyTorch issue](https://discuss.pytorch.org/t/is-it-... | [

-0.1134336665,

-0.221115008,

-0.0097108139,

0.2073050439,

0.5532283783,

0.1730132401,

0.5313699245,

0.1225807741,

0.1504824311,

-0.0665397719,

-0.0843991637,

0.2457148433,

-0.117551893,

-0.1751451641,

0.1026155949,

-0.2025275528,

0.2282531559,

-0.0652568489,

-0.1441735029,

-0.2... |

https://github.com/huggingface/datasets/issues/625 | dtype of tensors should be preserved | Reopening since @bhavitvyamalik started looking into it !

Also I'm posting here a function that could be helpful to support preserving the dtype of tensors.

It's used to build a pyarrow array out of a numpy array and:

- it doesn't convert the numpy array to a python list

- it keeps the precision of the numpy ar... | After switching to `datasets` my model just broke. After a weekend of debugging, the issue was that my model could not handle the double that the Dataset provided, as it expected a float (but didn't give a warning, which seems a [PyTorch issue](https://discuss.pytorch.org/t/is-it-required-that-input-and-hidden-for-gru-... | 206 | dtype of tensors should be preserved

After switching to `datasets` my model just broke. After a weekend of debugging, the issue was that my model could not handle the double that the Dataset provided, as it expected a float (but didn't give a warning, which seems a [PyTorch issue](https://discuss.pytorch.org/t/is-it-... | [

-0.1134336665,

-0.221115008,

-0.0097108139,

0.2073050439,

0.5532283783,

0.1730132401,

0.5313699245,

0.1225807741,

0.1504824311,

-0.0665397719,

-0.0843991637,

0.2457148433,

-0.117551893,

-0.1751451641,

0.1026155949,

-0.2025275528,

0.2282531559,

-0.0652568489,

-0.1441735029,

-0.2... |

https://github.com/huggingface/datasets/issues/625 | dtype of tensors should be preserved | @lhoestq Have you thought about this further?

We have a use case where we're attempting to load data containing numpy arrays using the `datasets` library.

When using one of the "standard" methods (`[Value(...)]` or `Sequence()`) we see ~200 samples processed per second during the call to `_prepare_split`. This sl... | After switching to `datasets` my model just broke. After a weekend of debugging, the issue was that my model could not handle the double that the Dataset provided, as it expected a float (but didn't give a warning, which seems a [PyTorch issue](https://discuss.pytorch.org/t/is-it-required-that-input-and-hidden-for-gru-... | 239 | dtype of tensors should be preserved

After switching to `datasets` my model just broke. After a weekend of debugging, the issue was that my model could not handle the double that the Dataset provided, as it expected a float (but didn't give a warning, which seems a [PyTorch issue](https://discuss.pytorch.org/t/is-it-... | [

-0.1134336665,

-0.221115008,

-0.0097108139,

0.2073050439,

0.5532283783,

0.1730132401,

0.5313699245,

0.1225807741,

0.1504824311,

-0.0665397719,

-0.0843991637,

0.2457148433,

-0.117551893,

-0.1751451641,

0.1026155949,

-0.2025275528,

0.2282531559,

-0.0652568489,

-0.1441735029,

-0.2... |

https://github.com/huggingface/datasets/issues/625 | dtype of tensors should be preserved | Hi !

It would be awesome to achieve this speed for numpy arrays !

For now we have to use `encode_nested_example` to convert numpy arrays to python lists since pyarrow doesn't support multidimensional numpy arrays (only 1D).

Maybe let's start a new PR from your PR @bhavitvyamalik (idk why we didn't answer your PR... | After switching to `datasets` my model just broke. After a weekend of debugging, the issue was that my model could not handle the double that the Dataset provided, as it expected a float (but didn't give a warning, which seems a [PyTorch issue](https://discuss.pytorch.org/t/is-it-required-that-input-and-hidden-for-gru-... | 185 | dtype of tensors should be preserved

After switching to `datasets` my model just broke. After a weekend of debugging, the issue was that my model could not handle the double that the Dataset provided, as it expected a float (but didn't give a warning, which seems a [PyTorch issue](https://discuss.pytorch.org/t/is-it-... | [

-0.1134336665,

-0.221115008,

-0.0097108139,

0.2073050439,

0.5532283783,

0.1730132401,

0.5313699245,

0.1225807741,

0.1504824311,

-0.0665397719,

-0.0843991637,

0.2457148433,

-0.117551893,

-0.1751451641,

0.1026155949,

-0.2025275528,

0.2282531559,

-0.0652568489,

-0.1441735029,

-0.2... |

https://github.com/huggingface/datasets/issues/623 | Custom feature types in `load_dataset` from CSV | Currently `csv` doesn't support the `features` attribute (unlike `json`).

What you can do for now is cast the features using the in-place transform `cast_`

```python

from datasets import load_dataset

dataset = load_dataset('csv', data_files=file_dict, delimiter=';', column_names=['text', 'label'])

dataset.cast... | I am trying to load a local file with the `load_dataset` function and I want to predefine the feature types with the `features` argument. However, the types are always the same independent of the value of `features`.

I am working with the local files from the emotion dataset. To get the data you can use the followi... | 38 | Custom feature types in `load_dataset` from CSV

I am trying to load a local file with the `load_dataset` function and I want to predefine the feature types with the `features` argument. However, the types are always the same independent of the value of `features`.

I am working with the local files from the emotio... | [

0.0802028924,

-0.2782892585,

-0.0531790853,

0.3509229124,

0.3172289729,

-0.1943105757,

0.5701336265,

0.1113842726,

0.446125567,

0.0253306851,

0.0947441235,

0.3161779642,

-0.0919102132,

0.3901152909,

-0.0581882037,

0.0267203413,

-0.1612748355,

0.3348048329,

-0.0091214385,

-0.349... |

https://github.com/huggingface/datasets/issues/623 | Custom feature types in `load_dataset` from CSV | Hi @lhoestq we've tried out your suggestion but are now running into the following error:

```

---------------------------------------------------------------------------

ValueError Traceback (most recent call last)

<ipython-input-163-81ffd5ac18c9> in <module>

----> 1 dataset.cast_(... | I am trying to load a local file with the `load_dataset` function and I want to predefine the feature types with the `features` argument. However, the types are always the same independent of the value of `features`.

I am working with the local files from the emotion dataset. To get the data you can use the followi... | 168 | Custom feature types in `load_dataset` from CSV

I am trying to load a local file with the `load_dataset` function and I want to predefine the feature types with the `features` argument. However, the types are always the same independent of the value of `features`.

I am working with the local files from the emotio... | [

0.0802028924,

-0.2782892585,

-0.0531790853,

0.3509229124,

0.3172289729,

-0.1943105757,

0.5701336265,

0.1113842726,

0.446125567,

0.0253306851,

0.0947441235,

0.3161779642,

-0.0919102132,

0.3901152909,

-0.0581882037,

0.0267203413,

-0.1612748355,

0.3348048329,

-0.0091214385,

-0.349... |

https://github.com/huggingface/datasets/issues/623 | Custom feature types in `load_dataset` from CSV | In general, I don't think there is any hard reason we don't allow to use `features` in the csv script, right @lhoestq?

Should I add it? | I am trying to load a local file with the `load_dataset` function and I want to predefine the feature types with the `features` argument. However, the types are always the same independent of the value of `features`.

I am working with the local files from the emotion dataset. To get the data you can use the followi... | 26 | Custom feature types in `load_dataset` from CSV

I am trying to load a local file with the `load_dataset` function and I want to predefine the feature types with the `features` argument. However, the types are always the same independent of the value of `features`.

I am working with the local files from the emotio... | [

0.0802028924,

-0.2782892585,

-0.0531790853,

0.3509229124,

0.3172289729,

-0.1943105757,

0.5701336265,

0.1113842726,

0.446125567,

0.0253306851,

0.0947441235,

0.3161779642,

-0.0919102132,

0.3901152909,

-0.0581882037,

0.0267203413,

-0.1612748355,

0.3348048329,

-0.0091214385,

-0.349... |

https://github.com/huggingface/datasets/issues/623 | Custom feature types in `load_dataset` from CSV | > In general, I don't think there is any hard reason we don't allow to use `features` in the csv script, right @lhoestq?

>

> Should I add it?

Sure let's add it. Setting the convert options should do the job

> Hi @lhoestq we've tried out your suggestion but are now running into the following error:

>

> ```

... | I am trying to load a local file with the `load_dataset` function and I want to predefine the feature types with the `features` argument. However, the types are always the same independent of the value of `features`.

I am working with the local files from the emotion dataset. To get the data you can use the followi... | 136 | Custom feature types in `load_dataset` from CSV

I am trying to load a local file with the `load_dataset` function and I want to predefine the feature types with the `features` argument. However, the types are always the same independent of the value of `features`.

I am working with the local files from the emotio... | [

0.0802028924,

-0.2782892585,

-0.0531790853,

0.3509229124,

0.3172289729,

-0.1943105757,

0.5701336265,

0.1113842726,

0.446125567,

0.0253306851,

0.0947441235,

0.3161779642,

-0.0919102132,

0.3901152909,

-0.0581882037,

0.0267203413,

-0.1612748355,

0.3348048329,

-0.0091214385,

-0.349... |

https://github.com/huggingface/datasets/issues/623 | Custom feature types in `load_dataset` from CSV | PR is open for the `ValueError: Target schema's field names are not matching the table's field names` error.

I'm adding the features parameter to csv | I am trying to load a local file with the `load_dataset` function and I want to predefine the feature types with the `features` argument. However, the types are always the same independent of the value of `features`.

I am working with the local files from the emotion dataset. To get the data you can use the followi... | 25 | Custom feature types in `load_dataset` from CSV

I am trying to load a local file with the `load_dataset` function and I want to predefine the feature types with the `features` argument. However, the types are always the same independent of the value of `features`.

I am working with the local files from the emotio... | [

0.0802028924,

-0.2782892585,

-0.0531790853,

0.3509229124,

0.3172289729,

-0.1943105757,

0.5701336265,

0.1113842726,

0.446125567,

0.0253306851,

0.0947441235,

0.3161779642,

-0.0919102132,

0.3901152909,

-0.0581882037,

0.0267203413,

-0.1612748355,

0.3348048329,

-0.0091214385,

-0.349... |

https://github.com/huggingface/datasets/issues/622 | load_dataset for text files not working | @thomwolf Sure. I'll try downgrading to 3.7 now even though Arrow say they support >=3.5.

Linux (Ubuntu 18.04) - Python 3.8

======================

Package - Version

---------------------

certifi 2020.6.20

chardet 3.0.4

click 7.1.2

datasets 1.0.1

di... | Trying the following snippet, I get different problems on Linux and Windows.

```python

dataset = load_dataset("text", data_files="data.txt")

# or

dataset = load_dataset("text", data_files=["data.txt"])

```

(ps [This example](https://huggingface.co/docs/datasets/loading_datasets.html#json-files) shows that ... | 194 | load_dataset for text files not working

Trying the following snippet, I get different problems on Linux and Windows.

```python

dataset = load_dataset("text", data_files="data.txt")

# or

dataset = load_dataset("text", data_files=["data.txt"])

```

(ps [This example](https://huggingface.co/docs/datasets/loa... | [

-0.2746653557,

-0.4020572305,

0.0175604671,

0.3872526288,

0.2696422935,

-0.0386611708,

0.3188883066,

-0.0543564558,

0.4263593853,

-0.0580489412,

0.0659723133,

0.1455249637,

-0.155762881,

0.2742005587,

0.0635563657,

-0.0350760669,

0.1571834832,

-0.0138411364,

-0.2914434075,

0.04... |

https://github.com/huggingface/datasets/issues/622 | load_dataset for text files not working | Downgrading to 3.7 does not help. Here is a dummy text file:

```text

Verzekering weigert vaker te betalen

Bedrijven van verzekeringen erkennen steeds minder arbeidsongevallen .

In 2012 weigerden de bedrijven te betalen voor 21.055 ongevallen op het werk .

Dat is 11,8 % van alle ongevallen op het werk .

Nog nooi... | Trying the following snippet, I get different problems on Linux and Windows.

```python

dataset = load_dataset("text", data_files="data.txt")

# or

dataset = load_dataset("text", data_files=["data.txt"])

```

(ps [This example](https://huggingface.co/docs/datasets/loading_datasets.html#json-files) shows that ... | 120 | load_dataset for text files not working

Trying the following snippet, I get different problems on Linux and Windows.

```python

dataset = load_dataset("text", data_files="data.txt")

# or

dataset = load_dataset("text", data_files=["data.txt"])

```

(ps [This example](https://huggingface.co/docs/datasets/loa... | [

-0.2746653557,

-0.4020572305,

0.0175604671,

0.3872526288,

0.2696422935,

-0.0386611708,

0.3188883066,

-0.0543564558,

0.4263593853,

-0.0580489412,

0.0659723133,

0.1455249637,

-0.155762881,

0.2742005587,

0.0635563657,

-0.0350760669,

0.1571834832,

-0.0138411364,

-0.2914434075,

0.04... |

https://github.com/huggingface/datasets/issues/622 | load_dataset for text files not working | @banunitte Please do not post screenshots in the future but copy-paste your code and the errors. That allows others to copy-and-paste your code and test it. You may also want to provide the Python version that you are using. | Trying the following snippet, I get different problems on Linux and Windows.

```python

dataset = load_dataset("text", data_files="data.txt")

# or

dataset = load_dataset("text", data_files=["data.txt"])

```

(ps [This example](https://huggingface.co/docs/datasets/loading_datasets.html#json-files) shows that ... | 39 | load_dataset for text files not working

Trying the following snippet, I get different problems on Linux and Windows.

```python

dataset = load_dataset("text", data_files="data.txt")

# or

dataset = load_dataset("text", data_files=["data.txt"])

```

(ps [This example](https://huggingface.co/docs/datasets/loa... | [

-0.2746653557,

-0.4020572305,

0.0175604671,

0.3872526288,

0.2696422935,

-0.0386611708,

0.3188883066,

-0.0543564558,

0.4263593853,

-0.0580489412,

0.0659723133,

0.1455249637,

-0.155762881,

0.2742005587,

0.0635563657,

-0.0350760669,

0.1571834832,

-0.0138411364,

-0.2914434075,

0.04... |

https://github.com/huggingface/datasets/issues/622 | load_dataset for text files not working | I have the same problem on Linux of the script crashing with a CSV error. This may be caused by 'CRLF'; when changing 'CRLF' to 'LF', the problem disappeared. | Trying the following snippet, I get different problems on Linux and Windows.

```python

dataset = load_dataset("text", data_files="data.txt")

# or

dataset = load_dataset("text", data_files=["data.txt"])

```

(ps [This example](https://huggingface.co/docs/datasets/loading_datasets.html#json-files) shows that ... | 29 | load_dataset for text files not working

Trying the following snippet, I get different problems on Linux and Windows.

```python

dataset = load_dataset("text", data_files="data.txt")

# or

dataset = load_dataset("text", data_files=["data.txt"])

```

(ps [This example](https://huggingface.co/docs/datasets/loa... | [

-0.2746653557,

-0.4020572305,

0.0175604671,

0.3872526288,

0.2696422935,

-0.0386611708,

0.3188883066,

-0.0543564558,

0.4263593853,

-0.0580489412,

0.0659723133,

0.1455249637,

-0.155762881,

0.2742005587,

0.0635563657,

-0.0350760669,

0.1571834832,

-0.0138411364,

-0.2914434075,

0.04... |

https://github.com/huggingface/datasets/issues/622 | load_dataset for text files not working | I pushed a fix for `pyarrow.lib.ArrowInvalid: CSV parse error`. Let me know if you still have this issue.

Not sure about the windows one yet | Trying the following snippet, I get different problems on Linux and Windows.

```python

dataset = load_dataset("text", data_files="data.txt")

# or

dataset = load_dataset("text", data_files=["data.txt"])

```

(ps [This example](https://huggingface.co/docs/datasets/loading_datasets.html#json-files) shows that ... | 25 | load_dataset for text files not working

Trying the following snippet, I get different problems on Linux and Windows.

```python

dataset = load_dataset("text", data_files="data.txt")

# or

dataset = load_dataset("text", data_files=["data.txt"])

```

(ps [This example](https://huggingface.co/docs/datasets/loa... | [

-0.2746653557,

-0.4020572305,

0.0175604671,

0.3872526288,

0.2696422935,

-0.0386611708,

0.3188883066,

-0.0543564558,

0.4263593853,

-0.0580489412,

0.0659723133,

0.1455249637,

-0.155762881,

0.2742005587,

0.0635563657,

-0.0350760669,

0.1571834832,

-0.0138411364,

-0.2914434075,

0.04... |

https://github.com/huggingface/datasets/issues/622 | load_dataset for text files not working | To complete what @lhoestq is saying, I think that to use the new version of the `text` processing script (which is on master right now) you need to either specify the version of the script to be the `master` one or to install the lib from source (in which case it uses the `master` version of the script by default):

``... | Trying the following snippet, I get different problems on Linux and Windows.

```python

dataset = load_dataset("text", data_files="data.txt")

# or

dataset = load_dataset("text", data_files=["data.txt"])

```

(ps [This example](https://huggingface.co/docs/datasets/loading_datasets.html#json-files) shows that ... | 107 | load_dataset for text files not working

Trying the following snippet, I get different problems on Linux and Windows.

```python

dataset = load_dataset("text", data_files="data.txt")

# or

dataset = load_dataset("text", data_files=["data.txt"])

```

(ps [This example](https://huggingface.co/docs/datasets/loa... | [

-0.2746653557,

-0.4020572305,

0.0175604671,

0.3872526288,

0.2696422935,

-0.0386611708,

0.3188883066,

-0.0543564558,

0.4263593853,

-0.0580489412,

0.0659723133,

0.1455249637,

-0.155762881,

0.2742005587,

0.0635563657,

-0.0350760669,

0.1571834832,

-0.0138411364,

-0.2914434075,

0.04... |

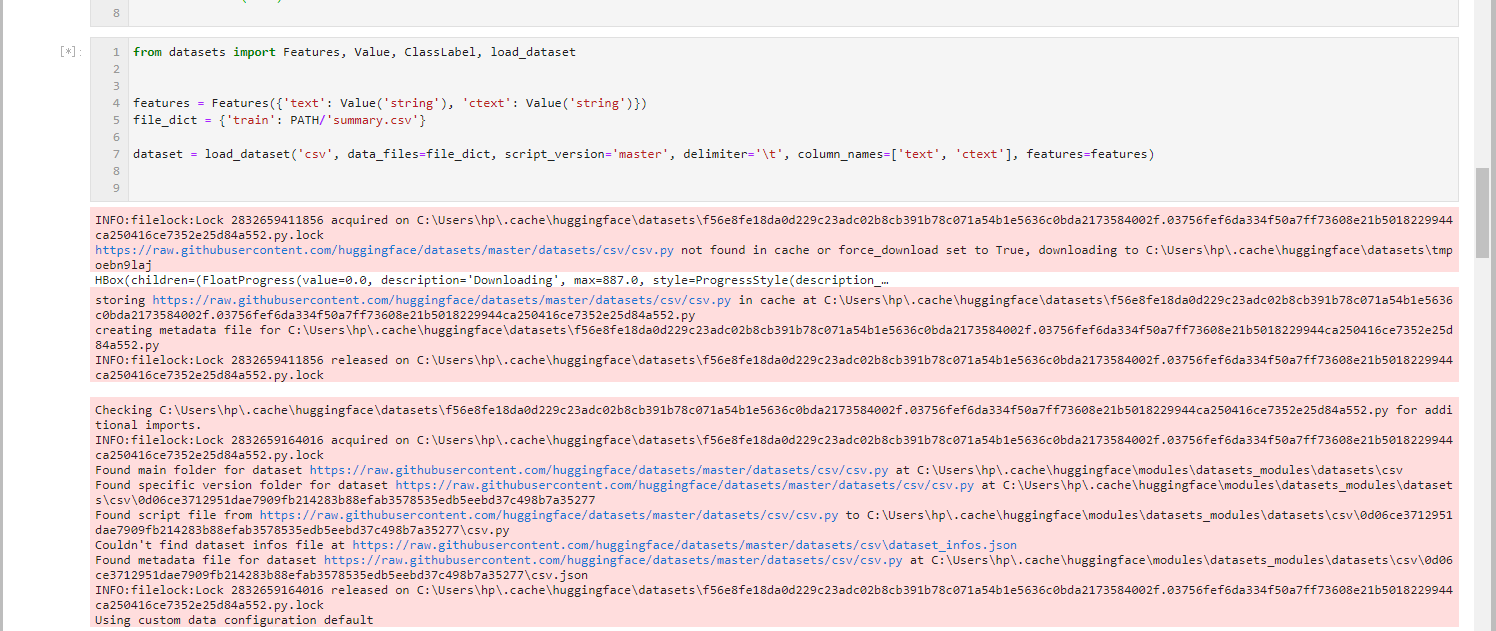

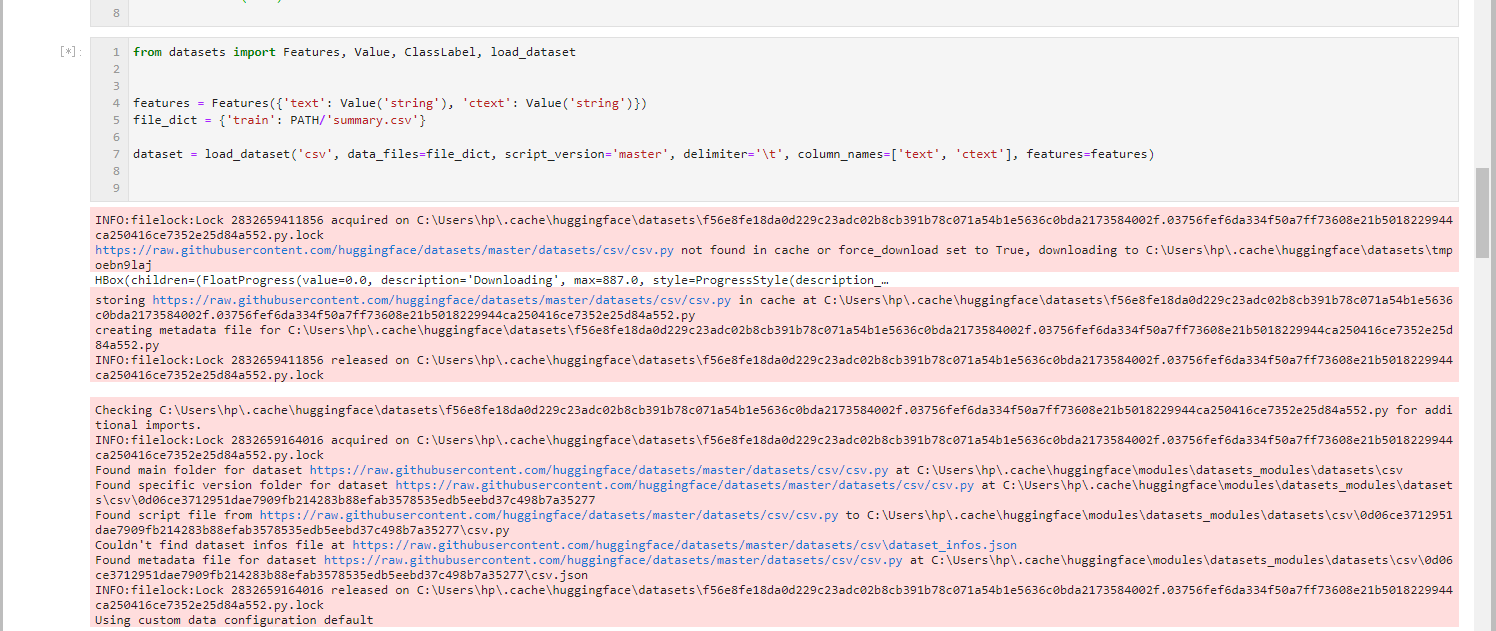

https://github.com/huggingface/datasets/issues/622 | load_dataset for text files not working |

win10, py3.6

```

from datasets import Features, Value, ClassLabel, load_dataset

features = Features({'text': Value('string'), 'ctext': Value('string')})

file_dict = {'train': PATH/'summary.csv'}

... | Trying the following snippet, I get different problems on Linux and Windows.

```python

dataset = load_dataset("text", data_files="data.txt")

# or

dataset = load_dataset("text", data_files=["data.txt"])

```

(ps [This example](https://huggingface.co/docs/datasets/loading_datasets.html#json-files) shows that ... | 31 | load_dataset for text files not working

Trying the following snippet, I get different problems on Linux and Windows.

```python

dataset = load_dataset("text", data_files="data.txt")

# or

dataset = load_dataset("text", data_files=["data.txt"])

```

(ps [This example](https://huggingface.co/docs/datasets/loa... | [

-0.2746653557,

-0.4020572305,

0.0175604671,

0.3872526288,

0.2696422935,

-0.0386611708,

0.3188883066,

-0.0543564558,

0.4263593853,

-0.0580489412,

0.0659723133,

0.1455249637,

-0.155762881,

0.2742005587,

0.0635563657,

-0.0350760669,

0.1571834832,

-0.0138411364,

-0.2914434075,

0.04... |

https://github.com/huggingface/datasets/issues/622 | load_dataset for text files not working | ```python

Traceback (most recent call last):

File "main.py", line 281, in <module>

main()

File "main.py", line 190, in main

train_data, test_data = data_factory(

File "main.py", line 129, in data_factory

train_data = load_dataset('text',

File "/home/me/Downloads/datasets/src/datasets/load.... | Trying the following snippet, I get different problems on Linux and Windows.

```python

dataset = load_dataset("text", data_files="data.txt")

# or

dataset = load_dataset("text", data_files=["data.txt"])

```

(ps [This example](https://huggingface.co/docs/datasets/loading_datasets.html#json-files) shows that ... | 135 | load_dataset for text files not working

Trying the following snippet, I get different problems on Linux and Windows.

```python

dataset = load_dataset("text", data_files="data.txt")

# or

dataset = load_dataset("text", data_files=["data.txt"])

```

(ps [This example](https://huggingface.co/docs/datasets/loa... | [

-0.2746653557,

-0.4020572305,

0.0175604671,

0.3872526288,

0.2696422935,

-0.0386611708,

0.3188883066,

-0.0543564558,

0.4263593853,

-0.0580489412,

0.0659723133,

0.1455249637,

-0.155762881,

0.2742005587,

0.0635563657,

-0.0350760669,

0.1571834832,

-0.0138411364,

-0.2914434075,

0.04... |

https://github.com/huggingface/datasets/issues/622 | load_dataset for text files not working | >

> win10, py3.6

>

> ```

> from datasets import Features, Value, ClassLabel, load_dataset

>

>

> features = Features({'text': Value('string'), 'ctext': Value('string')})

> file_dict = {'train': PATH/... | Trying the following snippet, I get different problems on Linux and Windows.

```python

dataset = load_dataset("text", data_files="data.txt")

# or

dataset = load_dataset("text", data_files=["data.txt"])

```

(ps [This example](https://huggingface.co/docs/datasets/loading_datasets.html#json-files) shows that ... | 184 | load_dataset for text files not working

Trying the following snippet, I get different problems on Linux and Windows.

```python

dataset = load_dataset("text", data_files="data.txt")

# or

dataset = load_dataset("text", data_files=["data.txt"])

```

(ps [This example](https://huggingface.co/docs/datasets/loa... | [

-0.2746653557,

-0.4020572305,

0.0175604671,

0.3872526288,

0.2696422935,

-0.0386611708,

0.3188883066,

-0.0543564558,

0.4263593853,

-0.0580489412,

0.0659723133,

0.1455249637,

-0.155762881,

0.2742005587,

0.0635563657,

-0.0350760669,

0.1571834832,

-0.0138411364,

-0.2914434075,

0.04... |

https://github.com/huggingface/datasets/issues/622 | load_dataset for text files not working | > To complete what @lhoestq is saying, I think that to use the new version of the `text` processing script (which is on master right now) you need to either specify the version of the script to be the `master` one or to install the lib from source (in which case it uses the `master` version of the script by default):

... | Trying the following snippet, I get different problems on Linux and Windows.

```python

dataset = load_dataset("text", data_files="data.txt")

# or

dataset = load_dataset("text", data_files=["data.txt"])

```

(ps [This example](https://huggingface.co/docs/datasets/loading_datasets.html#json-files) shows that ... | 206 | load_dataset for text files not working

Trying the following snippet, I get different problems on Linux and Windows.

```python

dataset = load_dataset("text", data_files="data.txt")

# or

dataset = load_dataset("text", data_files=["data.txt"])

```

(ps [This example](https://huggingface.co/docs/datasets/loa... | [

-0.2746653557,

-0.4020572305,

0.0175604671,

0.3872526288,

0.2696422935,

-0.0386611708,

0.3188883066,

-0.0543564558,

0.4263593853,

-0.0580489412,

0.0659723133,

0.1455249637,

-0.155762881,

0.2742005587,

0.0635563657,

-0.0350760669,

0.1571834832,

-0.0138411364,

-0.2914434075,

0.04... |

https://github.com/huggingface/datasets/issues/622 | load_dataset for text files not working | Hi @raruidol

To fix the RAM issue you'll need to shard your text files into smaller files (see https://github.com/huggingface/datasets/issues/610#issuecomment-691672919 for example)
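
As a rough illustration of the sharding suggestion above, here is a minimal sketch that splits one large text file into smaller files by line count. The helper name `shard_text_file`, the `shard_XXXXX.txt` naming scheme, and the default of 100,000 lines per shard are assumptions made for this example; they are not part of `datasets` and are not taken from the linked comment.

```python
from pathlib import Path


def shard_text_file(src, out_dir, lines_per_shard=100_000):
    """Split `src` into files of at most `lines_per_shard` lines each.

    Hypothetical helper for illustration only. Returns the list of shard
    paths in the order they were written.
    """
    out_dir = Path(out_dir)
    out_dir.mkdir(parents=True, exist_ok=True)

    shard_paths = []
    out = None
    # Stream the source line by line so the whole file never sits in memory.
    with open(src, "r", encoding="utf-8") as f:
        for i, line in enumerate(f):
            if i % lines_per_shard == 0:
                # Start a new shard every `lines_per_shard` lines.
                if out is not None:
                    out.close()
                path = out_dir / f"shard_{len(shard_paths):05d}.txt"
                shard_paths.append(str(path))
                out = open(path, "w", encoding="utf-8")
            out.write(line)
    if out is not None:
        out.close()
    return shard_paths
```

The returned list of paths could then be passed as `data_files` to `load_dataset("text", ...)`, in the same way a list of file names is passed elsewhere in this thread.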

I'm not sure why you're getting the csv error on Linux.

Do you think you could try to reproduce it on Google Colab, for example?

Or s... | Trying the following snippet, I get different problems on Linux and Windows.

```python

dataset = load_dataset("text", data_files="data.txt")

# or

dataset = load_dataset("text", data_files=["data.txt"])

```

(ps [This example](https://huggingface.co/docs/datasets/loading_datasets.html#json-files) shows that ... | 59 | load_dataset for text files not working

Trying the following snippet, I get different problems on Linux and Windows.

```python

dataset = load_dataset("text", data_files="data.txt")

# or

dataset = load_dataset("text", data_files=["data.txt"])

```

(ps [This example](https://huggingface.co/docs/datasets/loa... | [

-0.2746653557,

-0.4020572305,

0.0175604671,

0.3872526288,

0.2696422935,

-0.0386611708,

0.3188883066,

-0.0543564558,

0.4263593853,

-0.0580489412,

0.0659723133,

0.1455249637,

-0.155762881,

0.2742005587,

0.0635563657,

-0.0350760669,

0.1571834832,

-0.0138411364,

-0.2914434075,

0.04... |

https://github.com/huggingface/datasets/issues/622 | load_dataset for text files not working | @lhoestq

The crash message shows up when loading the dataset:

```

print('Loading corpus...')

files = glob.glob('corpora/shards/*')

-> dataset = load_dataset('text', script_version='master', data_files=files)

print('Corpus loaded.')

```

And this is the exact message:

```

Traceback (most recent call last)... | Trying the following snippet, I get different problems on Linux and Windows.

```python

dataset = load_dataset("text", data_files="data.txt")

# or

dataset = load_dataset("text", data_files=["data.txt"])

```

(ps [This example](https://huggingface.co/docs/datasets/loading_datasets.html#json-files) shows that ... | 207 | load_dataset for text files not working

Trying the following snippet, I get different problems on Linux and Windows.

```python

dataset = load_dataset("text", data_files="data.txt")

# or

dataset = load_dataset("text", data_files=["data.txt"])

```

(ps [This example](https://huggingface.co/docs/datasets/loa... | [

-0.2746653557,

-0.4020572305,

0.0175604671,

0.3872526288,

0.2696422935,

-0.0386611708,

0.3188883066,

-0.0543564558,

0.4263593853,

-0.0580489412,

0.0659723133,

0.1455249637,

-0.155762881,

0.2742005587,

0.0635563657,

-0.0350760669,

0.1571834832,

-0.0138411364,

-0.2914434075,

0.04... |

https://github.com/huggingface/datasets/issues/622 | load_dataset for text files not working | I tested on Google Colab, which also runs Linux, using this code:

- first download an arbitrary text file

```bash

wget https://raw.githubusercontent.com/abisee/cnn-dailymail/master/url_lists/all_train.txt

```

- then run

```python

from datasets import load_dataset

d = load_dataset("text", data_files="all_train.t... | Trying the following snippet, I get different problems on Linux and Windows.

```python

dataset = load_dataset("text", data_files="data.txt")

# or

dataset = load_dataset("text", data_files=["data.txt"])

```

(ps [This example](https://huggingface.co/docs/datasets/loading_datasets.html#json-files) shows that ... | 156 | load_dataset for text files not working

Trying the following snippet, I get different problems on Linux and Windows.

```python

dataset = load_dataset("text", data_files="data.txt")

# or

dataset = load_dataset("text", data_files=["data.txt"])

```

(ps [This example](https://huggingface.co/docs/datasets/loa... | [

-0.2746653557,

-0.4020572305,

0.0175604671,

0.3872526288,

0.2696422935,

-0.0386611708,

0.3188883066,

-0.0543564558,

0.4263593853,

-0.0580489412,

0.0659723133,

0.1455249637,

-0.155762881,

0.2742005587,

0.0635563657,

-0.0350760669,

0.1571834832,

-0.0138411364,

-0.2914434075,

0.04... |

https://github.com/huggingface/datasets/issues/622 | load_dataset for text files not working | Update: I also tested the above code in a Docker container from [jupyter/minimal-notebook](https://hub.docker.com/r/jupyter/minimal-notebook/) (based on Ubuntu) and still could not reproduce the error | Trying the following snippet, I get different problems on Linux and Windows.

```python

dataset = load_dataset("text", data_files="data.txt")

# or

dataset = load_dataset("text", data_files=["data.txt"])

```

(ps [This example](https://huggingface.co/docs/datasets/loading_datasets.html#json-files) shows that ... | 21 | load_dataset for text files not working

Trying the following snippet, I get different problems on Linux and Windows.

```python

dataset = load_dataset("text", data_files="data.txt")

# or

dataset = load_dataset("text", data_files=["data.txt"])

```

(ps [This example](https://huggingface.co/docs/datasets/loa... | [

-0.2746653557,

-0.4020572305,

0.0175604671,

0.3872526288,

0.2696422935,

-0.0386611708,

0.3188883066,

-0.0543564558,

0.4263593853,

-0.0580489412,

0.0659723133,

0.1455249637,

-0.155762881,

0.2742005587,

0.0635563657,

-0.0350760669,

0.1571834832,

-0.0138411364,

-0.2914434075,

0.04... |

https://github.com/huggingface/datasets/issues/622 | load_dataset for text files not working | It looks like loading works without any problem with your text input file. I ran some experiments this morning with my own input files, and I'm almost certain that the crash is caused by some unexpected pattern in the files. However, I have not been able to pinpoint the cause. What I find strange is that this same ... | Trying the following snippet, I get different problems on Linux and Windows.

```python

dataset = load_dataset("text", data_files="data.txt")

# or

dataset = load_dataset("text", data_files=["data.txt"])

```

(ps [This example](https://huggingface.co/docs/datasets/loading_datasets.html#json-files) shows that ... | 92 | load_dataset for text files not working

Trying the following snippet, I get different problems on Linux and Windows.

```python