url stringlengths 58 61 | repository_url stringclasses 1 value | labels_url stringlengths 72 75 | comments_url stringlengths 67 70 | events_url stringlengths 65 68 | html_url stringlengths 48 51 | id int64 600M 3.09B | node_id stringlengths 18 24 | number int64 2 7.59k | title stringlengths 1 290 | user dict | labels listlengths 0 4 | state stringclasses 1 value | locked bool 1 class | assignee dict | assignees listlengths 0 4 | milestone dict | comments listlengths 0 30 | created_at timestamp[ns, tz=UTC]date 2020-04-14 18:18:51 2025-05-27 13:46:05 | updated_at timestamp[ns, tz=UTC]date 2020-04-29 09:23:05 2025-06-09 22:00:16 | closed_at timestamp[ns, tz=UTC]date 2020-04-29 09:23:05 2025-06-06 16:12:36 | author_association stringclasses 4 values | type float64 | active_lock_reason float64 | sub_issues_summary dict | body stringlengths 0 228k ⌀ | closed_by dict | reactions dict | timeline_url stringlengths 67 70 | performed_via_github_app float64 | state_reason stringclasses 3 values | draft float64 | pull_request null | time_to_close_hours float64 0.01 28.8k | __index_level_0__ int64 18 7.53k |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

https://api.github.com/repos/huggingface/datasets/issues/5098 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5098/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5098/comments | https://api.github.com/repos/huggingface/datasets/issues/5098/events | https://github.com/huggingface/datasets/issues/5098 | 1,404,058,518 | I_kwDODunzps5TsDuW | 5,098 | Classes label error when loading symbolic links using imagefolder | {

"avatar_url": "https://avatars.githubusercontent.com/u/49552732?v=4",

"events_url": "https://api.github.com/users/horizon86/events{/privacy}",

"followers_url": "https://api.github.com/users/horizon86/followers",

"following_url": "https://api.github.com/users/horizon86/following{/other_user}",

"gists_url": "https://api.github.com/users/horizon86/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/horizon86",

"id": 49552732,

"login": "horizon86",

"node_id": "MDQ6VXNlcjQ5NTUyNzMy",

"organizations_url": "https://api.github.com/users/horizon86/orgs",

"received_events_url": "https://api.github.com/users/horizon86/received_events",

"repos_url": "https://api.github.com/users/horizon86/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/horizon86/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/horizon86/subscriptions",

"type": "User",

"url": "https://api.github.com/users/horizon86",

"user_view_type": "public"

} | [

{

"color": "a2eeef",

"default": true,

"description": "New feature or request",

"id": 1935892871,

"name": "enhancement",

"node_id": "MDU6TGFiZWwxOTM1ODkyODcx",

"url": "https://api.github.com/repos/huggingface/datasets/labels/enhancement"

},

{

"color": "7057ff",

"default": true... | closed | false | {

"avatar_url": "https://avatars.githubusercontent.com/u/9295277?v=4",

"events_url": "https://api.github.com/users/riccardobucco/events{/privacy}",

"followers_url": "https://api.github.com/users/riccardobucco/followers",

"following_url": "https://api.github.com/users/riccardobucco/following{/other_user}",

"gists_url": "https://api.github.com/users/riccardobucco/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/riccardobucco",

"id": 9295277,

"login": "riccardobucco",

"node_id": "MDQ6VXNlcjkyOTUyNzc=",

"organizations_url": "https://api.github.com/users/riccardobucco/orgs",

"received_events_url": "https://api.github.com/users/riccardobucco/received_events",

"repos_url": "https://api.github.com/users/riccardobucco/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/riccardobucco/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/riccardobucco/subscriptions",

"type": "User",

"url": "https://api.github.com/users/riccardobucco",

"user_view_type": "public"

} | [

{

"avatar_url": "https://avatars.githubusercontent.com/u/9295277?v=4",

"events_url": "https://api.github.com/users/riccardobucco/events{/privacy}",

"followers_url": "https://api.github.com/users/riccardobucco/followers",

"following_url": "https://api.github.com/users/riccardobucco/following{/other_u... | null | [

"It can be solved temporarily by remove `resolve` in \r\nhttps://github.com/huggingface/datasets/blob/bef23be3d9543b1ca2da87ab2f05070201044ddc/src/datasets/data_files.py#L278",

"Hi, thanks for reporting and suggesting a fix! We still need to account for `.`/`..` in the file path, so a more robust fix would be `P... | 2022-10-11T06:10:58Z | 2022-11-14T14:40:20Z | 2022-11-14T14:40:20Z | NONE | null | null | {

"completed": 0,

"percent_completed": 0,

"total": 0

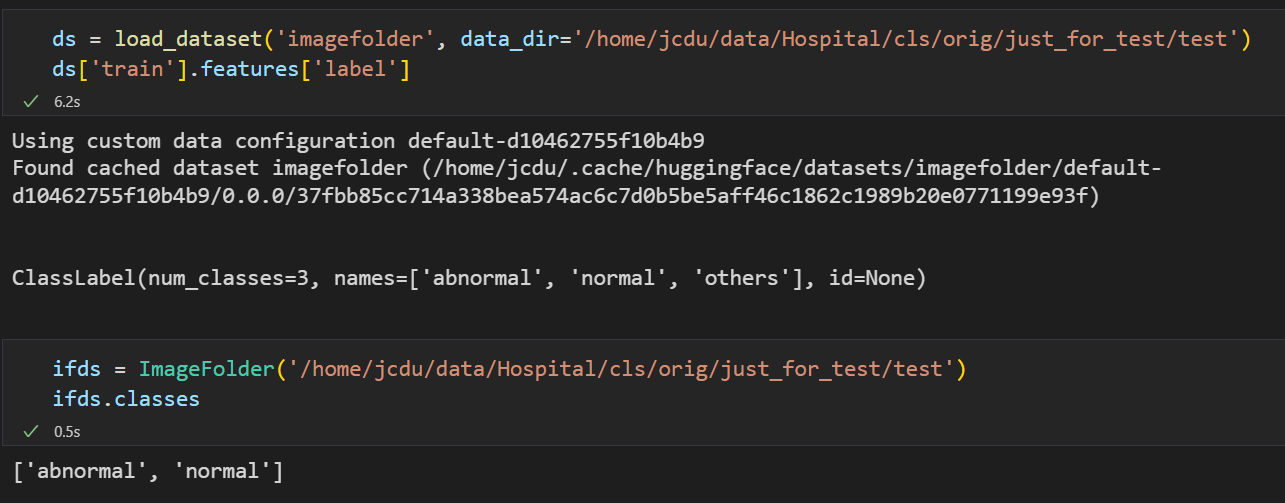

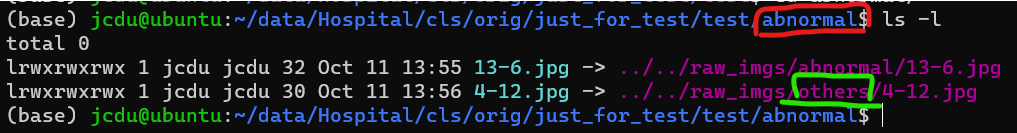

} | **Is your feature request related to a problem? Please describe.**

Like this: #4015

When there are **symbolic links** to pictures in the data folder, the parent folder name of the **real file** will be used as the class name instead of the parent folder of the symbolic link itself. Can you give an option to decide whether to enable symbolic link tracking?

This is inconsistent with the `torchvision.datasets.ImageFolder` behavior.

For example:

It use `others` in green circle as class label but not `abnormal`, I wish `load_dataset` not use the real file parent as label.

**Describe the solution you'd like**

A clear and concise description of what you want to happen.

**Describe alternatives you've considered**

A clear and concise description of any alternative solutions or features you've considered.

**Additional context**

Add any other context about the feature request here.

| {

"avatar_url": "https://avatars.githubusercontent.com/u/47462742?v=4",

"events_url": "https://api.github.com/users/mariosasko/events{/privacy}",

"followers_url": "https://api.github.com/users/mariosasko/followers",

"following_url": "https://api.github.com/users/mariosasko/following{/other_user}",

"gists_url": "https://api.github.com/users/mariosasko/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/mariosasko",

"id": 47462742,

"login": "mariosasko",

"node_id": "MDQ6VXNlcjQ3NDYyNzQy",

"organizations_url": "https://api.github.com/users/mariosasko/orgs",

"received_events_url": "https://api.github.com/users/mariosasko/received_events",

"repos_url": "https://api.github.com/users/mariosasko/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/mariosasko/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/mariosasko/subscriptions",

"type": "User",

"url": "https://api.github.com/users/mariosasko",

"user_view_type": "public"

} | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5098/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5098/timeline | null | completed | null | null | 824.489444 | 2,518 |

https://api.github.com/repos/huggingface/datasets/issues/5097 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5097/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5097/comments | https://api.github.com/repos/huggingface/datasets/issues/5097/events | https://github.com/huggingface/datasets/issues/5097 | 1,403,679,353 | I_kwDODunzps5TqnJ5 | 5,097 | Fatal error with pyarrow/libarrow.so | {

"avatar_url": "https://avatars.githubusercontent.com/u/11340846?v=4",

"events_url": "https://api.github.com/users/catalys1/events{/privacy}",

"followers_url": "https://api.github.com/users/catalys1/followers",

"following_url": "https://api.github.com/users/catalys1/following{/other_user}",

"gists_url": "https://api.github.com/users/catalys1/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/catalys1",

"id": 11340846,

"login": "catalys1",

"node_id": "MDQ6VXNlcjExMzQwODQ2",

"organizations_url": "https://api.github.com/users/catalys1/orgs",

"received_events_url": "https://api.github.com/users/catalys1/received_events",

"repos_url": "https://api.github.com/users/catalys1/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/catalys1/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/catalys1/subscriptions",

"type": "User",

"url": "https://api.github.com/users/catalys1",

"user_view_type": "public"

} | [

{

"color": "d73a4a",

"default": true,

"description": "Something isn't working",

"id": 1935892857,

"name": "bug",

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug"

}

] | closed | false | null | [] | null | [

"Thanks for reporting, @catalys1.\r\n\r\nThis seems a duplicate of:\r\n- #3310 \r\n\r\nThe source of the problem is in PyArrow:\r\n- [ARROW-15141: [C++] Fatal error condition occurred in aws_thread_launch](https://issues.apache.org/jira/browse/ARROW-15141)\r\n- [ARROW-17501: [C++] Fatal error condition occurred in ... | 2022-10-10T20:29:04Z | 2022-10-11T06:56:01Z | 2022-10-11T06:56:00Z | NONE | null | null | {

"completed": 0,

"percent_completed": 0,

"total": 0

} | ## Describe the bug

When using datasets, at the very end of my jobs the program crashes (see trace below).

It doesn't seem to affect anything, as it appears to happen as the program is closing down. Just importing `datasets` is enough to cause the error.

## Steps to reproduce the bug

This is sufficient to reproduce the problem:

```bash

python -c "import datasets"

```

## Expected results

Program should run to completion without an error.

## Actual results

```bash

Fatal error condition occurred in /opt/vcpkg/buildtrees/aws-c-io/src/9e6648842a-364b708815.clean/source/event_loop.c:72: aws_thread_launch(&cleanup_thread, s_event_loop_destroy_async_thread_fn, el_group, &thread_options) == AWS_OP_SUCCESS

Exiting Application

################################################################################

Stack trace:

################################################################################

/u/user/miniconda3/envs/env/lib/python3.10/site-packages/pyarrow/libarrow.so.900(+0x200af06) [0x150dff547f06]

/u/user/miniconda3/envs/env/lib/python3.10/site-packages/pyarrow/libarrow.so.900(+0x20028e5) [0x150dff53f8e5]

/u/user/miniconda3/envs/env/lib/python3.10/site-packages/pyarrow/libarrow.so.900(+0x1f27e09) [0x150dff464e09]

/u/user/miniconda3/envs/env/lib/python3.10/site-packages/pyarrow/libarrow.so.900(+0x200ba3d) [0x150dff548a3d]

/u/user/miniconda3/envs/env/lib/python3.10/site-packages/pyarrow/libarrow.so.900(+0x1f25948) [0x150dff462948]

/u/user/miniconda3/envs/env/lib/python3.10/site-packages/pyarrow/libarrow.so.900(+0x200ba3d) [0x150dff548a3d]

/u/user/miniconda3/envs/env/lib/python3.10/site-packages/pyarrow/libarrow.so.900(+0x1ee0b46) [0x150dff41db46]

/u/user/miniconda3/envs/env/lib/python3.10/site-packages/pyarrow/libarrow.so.900(+0x194546a) [0x150dfee8246a]

/lib64/libc.so.6(+0x39b0c) [0x150e15eadb0c]

/lib64/libc.so.6(on_exit+0) [0x150e15eadc40]

/u/user/miniconda3/envs/env/bin/python(+0x28db18) [0x560ae370eb18]

/u/user/miniconda3/envs/env/bin/python(+0x28db4b) [0x560ae370eb4b]

/u/user/miniconda3/envs/env/bin/python(+0x28db90) [0x560ae370eb90]

/u/user/miniconda3/envs/env/bin/python(_PyRun_SimpleFileObject+0x1e6) [0x560ae37123e6]

/u/user/miniconda3/envs/env/bin/python(_PyRun_AnyFileObject+0x44) [0x560ae37124c4]

/u/user/miniconda3/envs/env/bin/python(Py_RunMain+0x35d) [0x560ae37135bd]

/u/user/miniconda3/envs/env/bin/python(Py_BytesMain+0x39) [0x560ae37137d9]

/lib64/libc.so.6(__libc_start_main+0xf3) [0x150e15e97493]

/u/user/miniconda3/envs/env/bin/python(+0x2125d4) [0x560ae36935d4]

Aborted (core dumped)

```

## Environment info

- `datasets` version: 2.5.1

- Platform: Linux-4.18.0-348.23.1.el8_5.x86_64-x86_64-with-glibc2.28

- Python version: 3.10.4

- PyArrow version: 9.0.0

- Pandas version: 1.4.3

| {

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/albertvillanova",

"id": 8515462,

"login": "albertvillanova",

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"type": "User",

"url": "https://api.github.com/users/albertvillanova",

"user_view_type": "public"

} | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5097/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5097/timeline | null | completed | null | null | 10.448889 | 2,519 |

https://api.github.com/repos/huggingface/datasets/issues/5096 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5096/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5096/comments | https://api.github.com/repos/huggingface/datasets/issues/5096/events | https://github.com/huggingface/datasets/issues/5096 | 1,403,379,816 | I_kwDODunzps5TpeBo | 5,096 | Transfer some canonical datasets under an organization namespace | {

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/albertvillanova",

"id": 8515462,

"login": "albertvillanova",

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"type": "User",

"url": "https://api.github.com/users/albertvillanova",

"user_view_type": "public"

} | [

{

"color": "0e8a16",

"default": false,

"description": "Contribution to a dataset script",

"id": 4564477500,

"name": "dataset contribution",

"node_id": "LA_kwDODunzps8AAAABEBBmPA",

"url": "https://api.github.com/repos/huggingface/datasets/labels/dataset%20contribution"

}

] | closed | false | {

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/albertvillanova",

"id": 8515462,

"login": "albertvillanova",

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"type": "User",

"url": "https://api.github.com/users/albertvillanova",

"user_view_type": "public"

} | [

{

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/o... | null | [

"The transfer of the dummy dataset to the dummy org works as expected:\r\n```python\r\nIn [1]: from datasets import load_dataset; ds = load_dataset(\"dummy_canonical_dataset\", download_mode=\"force_redownload\"); ds\r\nDownloading builder script: 100%|███████████████████████████████████████████████████████████████... | 2022-10-10T15:44:31Z | 2024-06-24T06:06:28Z | 2024-06-24T06:02:45Z | MEMBER | null | null | {

"completed": 0,

"percent_completed": 0,

"total": 0

} | As discussed during our @huggingface/datasets meeting, we are planning to move some "canonical" dataset scripts under their corresponding organization namespace (if this does not exist).

On the contrary, if the dataset already exists under the organization namespace, we are deprecating the canonical one (and eventually delete it).

First, we should test it using a dummy dataset/organization.

TODO:

- [x] Test with a dummy dataset

- [x] Create dummy canonical dataset: https://huggingface.co/datasets/dummy_canonical_dataset

- [x] Create dummy organization: https://huggingface.co/dummy-canonical-org

- [x] Transfer dummy canonical dataset to dummy organization

- [ ] Transfer datasets

- [x] babi_qa => facebook

- [x] blbooks => TheBritishLibrary/blbooks

- [x] blbooksgenre => TheBritishLibrary/blbooksgenre

- [x] common_gen => allenai

- [x] commonsense_qa => tau

- [x] competition_math => hendrycks/competition_math

- [x] cord19 => allenai

- [x] emotion => dair-ai

- [ ] gem => GEM

- [x] hellaswag => Rowan

- [x] hendrycks_test => cais/mmlu

- [x] indonlu => indonlp

- [ ] multilingual_librispeech => facebook

- It already exists "facebook/multilingual_librispeech"

- [ ] oscar => oscar-corpus

- [x] peer_read => allenai

- [x] qasper => allenai

- [x] reddit => webis/tldr-17

- [x] russian_super_glue => russiannlp

- [x] rvl_cdip => aharley

- [x] s2orc => allenai

- [x] scicite => allenai

- [x] scifact => allenai

- [x] scitldr => allenai

- [x] swiss_judgment_prediction => rcds

- [x] the_pile => EleutherAI

- [ ] wmt14, wmt15, wmt16, wmt17, wmt18, wmt19,... => wmt

- [ ] Deprecate (and eventually remove) datasets that cannot be transferred because they already exist

- [x] banking77 => PolyAI

- [x] common_voice => mozilla-foundation

- [x] german_legal_entity_recognition => elenanereiss

- ...

EDIT: the list above is continuously being updated | {

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/albertvillanova",

"id": 8515462,

"login": "albertvillanova",

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"type": "User",

"url": "https://api.github.com/users/albertvillanova",

"user_view_type": "public"

} | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 2,

"total_count": 2,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5096/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5096/timeline | null | completed | null | null | 14,942.303889 | 2,520 |

https://api.github.com/repos/huggingface/datasets/issues/5094 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5094/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5094/comments | https://api.github.com/repos/huggingface/datasets/issues/5094/events | https://github.com/huggingface/datasets/issues/5094 | 1,403,214,950 | I_kwDODunzps5To1xm | 5,094 | Multiprocessing with `Dataset.map` and `PyTorch` results in deadlock | {

"avatar_url": "https://avatars.githubusercontent.com/u/36822895?v=4",

"events_url": "https://api.github.com/users/RR-28023/events{/privacy}",

"followers_url": "https://api.github.com/users/RR-28023/followers",

"following_url": "https://api.github.com/users/RR-28023/following{/other_user}",

"gists_url": "https://api.github.com/users/RR-28023/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/RR-28023",

"id": 36822895,

"login": "RR-28023",

"node_id": "MDQ6VXNlcjM2ODIyODk1",

"organizations_url": "https://api.github.com/users/RR-28023/orgs",

"received_events_url": "https://api.github.com/users/RR-28023/received_events",

"repos_url": "https://api.github.com/users/RR-28023/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/RR-28023/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/RR-28023/subscriptions",

"type": "User",

"url": "https://api.github.com/users/RR-28023",

"user_view_type": "public"

} | [

{

"color": "d73a4a",

"default": true,

"description": "Something isn't working",

"id": 1935892857,

"name": "bug",

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug"

}

] | closed | false | null | [] | null | [

"Hi ! Could it be an Out of Memory issue that could have killed one of the processes ? can you check your memory ?",

"Hi! I don't think it is a memory issue. I'm monitoring the main and spawn python processes and threads with `htop` and the memory does not peak. Besides, the example I've posted above should not b... | 2022-10-10T13:50:56Z | 2023-07-24T15:29:13Z | 2023-07-24T15:29:13Z | NONE | null | null | {

"completed": 0,

"percent_completed": 0,

"total": 0

} | ## Describe the bug

There seems to be an issue with using multiprocessing with `datasets.Dataset.map` (i.e. setting `num_proc` to a value greater than one) combined with a function that uses `torch` under the hood. The subprocesses that `datasets.Dataset.map` spawns [a this step](https://github.com/huggingface/datasets/blob/1b935dab9d2f171a8c6294269421fe967eb55e34/src/datasets/arrow_dataset.py#L2663) go into wait mode forever.

## Steps to reproduce the bug

The below code goes into deadlock when `NUMBER_OF_PROCESSES` is greater than one.

```python

NUMBER_OF_PROCESSES = 2

from transformers import AutoTokenizer, AutoModel

from datasets import load_dataset

dataset = load_dataset("glue", "mrpc", split="train")

tokenizer = AutoTokenizer.from_pretrained("sentence-transformers/all-MiniLM-L6-v2")

model = AutoModel.from_pretrained("sentence-transformers/all-MiniLM-L6-v2")

model.to("cpu")

def cls_pooling(model_output):

return model_output.last_hidden_state[:, 0]

def generate_embeddings_batched(examples):

sentences_batch = list(examples['sentence1'])

encoded_input = tokenizer(

sentences_batch, padding=True, truncation=True, return_tensors="pt"

)

encoded_input = {k: v.to("cpu") for k, v in encoded_input.items()}

model_output = model(**encoded_input)

embeddings = cls_pooling(model_output)

examples['embeddings'] = embeddings.detach().cpu().numpy() # 64, 384

return examples

embeddings_dataset = dataset.map(

generate_embeddings_batched,

batched=True,

batch_size=10,

num_proc=NUMBER_OF_PROCESSES

)

```

While debugging it I've seen that it gets "stuck" when calling `torch.nn.Embedding.forward` but some testing shows that the same happens with other functions from `torch.nn`.

## Environment info

- Platform: Linux-5.14.0-1052-oem-x86_64-with-glibc2.31

- Python version: 3.9.14

- PyArrow version: 9.0.0

- Pandas version: 1.5.0

Not sure if this is a HF problem, a PyTorch problem or something I'm doing wrong..

Thanks!

| {

"avatar_url": "https://avatars.githubusercontent.com/u/47462742?v=4",

"events_url": "https://api.github.com/users/mariosasko/events{/privacy}",

"followers_url": "https://api.github.com/users/mariosasko/followers",

"following_url": "https://api.github.com/users/mariosasko/following{/other_user}",

"gists_url": "https://api.github.com/users/mariosasko/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/mariosasko",

"id": 47462742,

"login": "mariosasko",

"node_id": "MDQ6VXNlcjQ3NDYyNzQy",

"organizations_url": "https://api.github.com/users/mariosasko/orgs",

"received_events_url": "https://api.github.com/users/mariosasko/received_events",

"repos_url": "https://api.github.com/users/mariosasko/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/mariosasko/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/mariosasko/subscriptions",

"type": "User",

"url": "https://api.github.com/users/mariosasko",

"user_view_type": "public"

} | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5094/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5094/timeline | null | completed | null | null | 6,889.638056 | 2,522 |

https://api.github.com/repos/huggingface/datasets/issues/5093 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5093/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5093/comments | https://api.github.com/repos/huggingface/datasets/issues/5093/events | https://github.com/huggingface/datasets/issues/5093 | 1,402,939,660 | I_kwDODunzps5TnykM | 5,093 | Mismatch between tutoriel and doc | {

"avatar_url": "https://avatars.githubusercontent.com/u/22726840?v=4",

"events_url": "https://api.github.com/users/clefourrier/events{/privacy}",

"followers_url": "https://api.github.com/users/clefourrier/followers",

"following_url": "https://api.github.com/users/clefourrier/following{/other_user}",

"gists_url": "https://api.github.com/users/clefourrier/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/clefourrier",

"id": 22726840,

"login": "clefourrier",

"node_id": "MDQ6VXNlcjIyNzI2ODQw",

"organizations_url": "https://api.github.com/users/clefourrier/orgs",

"received_events_url": "https://api.github.com/users/clefourrier/received_events",

"repos_url": "https://api.github.com/users/clefourrier/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/clefourrier/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/clefourrier/subscriptions",

"type": "User",

"url": "https://api.github.com/users/clefourrier",

"user_view_type": "public"

} | [

{

"color": "d73a4a",

"default": true,

"description": "Something isn't working",

"id": 1935892857,

"name": "bug",

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug"

},

{

"color": "7057ff",

"default": true,

"descript... | closed | false | {

"avatar_url": "https://avatars.githubusercontent.com/u/9295277?v=4",

"events_url": "https://api.github.com/users/riccardobucco/events{/privacy}",

"followers_url": "https://api.github.com/users/riccardobucco/followers",

"following_url": "https://api.github.com/users/riccardobucco/following{/other_user}",

"gists_url": "https://api.github.com/users/riccardobucco/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/riccardobucco",

"id": 9295277,

"login": "riccardobucco",

"node_id": "MDQ6VXNlcjkyOTUyNzc=",

"organizations_url": "https://api.github.com/users/riccardobucco/orgs",

"received_events_url": "https://api.github.com/users/riccardobucco/received_events",

"repos_url": "https://api.github.com/users/riccardobucco/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/riccardobucco/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/riccardobucco/subscriptions",

"type": "User",

"url": "https://api.github.com/users/riccardobucco",

"user_view_type": "public"

} | [

{

"avatar_url": "https://avatars.githubusercontent.com/u/9295277?v=4",

"events_url": "https://api.github.com/users/riccardobucco/events{/privacy}",

"followers_url": "https://api.github.com/users/riccardobucco/followers",

"following_url": "https://api.github.com/users/riccardobucco/following{/other_u... | null | [

"Hi, thanks for reporting! This line should be replaced with \r\n```python\r\ndataset = dataset.map(lambda examples: tokenizer(examples[\"text\"], return_tensors=\"np\"), batched=True)\r\n```\r\nfor it to work (the `return_tensors` part inside the `tokenizer` call).",

"Can I work on this?",

"Fixed in https://gi... | 2022-10-10T10:23:53Z | 2022-10-10T17:51:15Z | 2022-10-10T17:51:14Z | MEMBER | null | null | {

"completed": 0,

"percent_completed": 0,

"total": 0

} | ## Describe the bug

In the "Process text data" tutorial, [`map` has `return_tensors` as kwarg](https://huggingface.co/docs/datasets/main/en/nlp_process#map). It does not seem to appear in the [function documentation](https://huggingface.co/docs/datasets/main/en/package_reference/main_classes#datasets.Dataset.map), nor to work.

## Steps to reproduce the bug

MWE:

```python

from transformers import AutoTokenizer

tokenizer = AutoTokenizer.from_pretrained("bert-base-cased")

from datasets import load_dataset

dataset = load_dataset("lhoestq/demo1", split="train")

dataset = dataset.map(lambda examples: tokenizer(examples["review"]), batched=True, return_tensors="pt")

```

## Expected results

return_tensors to be a valid kwarg :smiley:

## Actual results

```python

>> TypeError: map() got an unexpected keyword argument 'return_tensors'

```

## Environment info

- `datasets` version: 2.3.2

- Platform: Linux-5.14.0-1052-oem-x86_64-with-glibc2.29

- Python version: 3.8.10

- PyArrow version: 8.0.0

- Pandas version: 1.4.3

| {

"avatar_url": "https://avatars.githubusercontent.com/u/47462742?v=4",

"events_url": "https://api.github.com/users/mariosasko/events{/privacy}",

"followers_url": "https://api.github.com/users/mariosasko/followers",

"following_url": "https://api.github.com/users/mariosasko/following{/other_user}",

"gists_url": "https://api.github.com/users/mariosasko/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/mariosasko",

"id": 47462742,

"login": "mariosasko",

"node_id": "MDQ6VXNlcjQ3NDYyNzQy",

"organizations_url": "https://api.github.com/users/mariosasko/orgs",

"received_events_url": "https://api.github.com/users/mariosasko/received_events",

"repos_url": "https://api.github.com/users/mariosasko/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/mariosasko/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/mariosasko/subscriptions",

"type": "User",

"url": "https://api.github.com/users/mariosasko",

"user_view_type": "public"

} | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5093/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5093/timeline | null | completed | null | null | 7.455833 | 2,523 |

https://api.github.com/repos/huggingface/datasets/issues/5090 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5090/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5090/comments | https://api.github.com/repos/huggingface/datasets/issues/5090/events | https://github.com/huggingface/datasets/issues/5090 | 1,401,102,407 | I_kwDODunzps5TgyBH | 5,090 | Review sync issues from GitHub to Hub | {

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/albertvillanova",

"id": 8515462,

"login": "albertvillanova",

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"type": "User",

"url": "https://api.github.com/users/albertvillanova",

"user_view_type": "public"

} | [

{

"color": "d73a4a",

"default": true,

"description": "Something isn't working",

"id": 1935892857,

"name": "bug",

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug"

}

] | closed | false | {

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/albertvillanova",

"id": 8515462,

"login": "albertvillanova",

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"type": "User",

"url": "https://api.github.com/users/albertvillanova",

"user_view_type": "public"

} | [

{

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/o... | null | [

"Nice!!"

] | 2022-10-07T12:31:56Z | 2022-10-08T07:07:36Z | 2022-10-08T07:07:36Z | MEMBER | null | null | {

"completed": 0,

"percent_completed": 0,

"total": 0

} | ## Describe the bug

We have discovered that sometimes there were sync issues between GitHub and Hub datasets, after a merge commit to main branch.

For example:

- this merge commit: https://github.com/huggingface/datasets/commit/d74a9e8e4bfff1fed03a4cab99180a841d7caf4b

- was not properly synced with the Hub: https://github.com/huggingface/datasets/actions/runs/3002495269/jobs/4819769684

```

[main 9e641de] Add Papers with Code ID to scifact dataset (#4941)

Author: Albert Villanova del Moral <albertvillanova@users.noreply.huggingface.co>

1 file changed, 42 insertions(+), 14 deletions(-)

push failed !

GitCommandError(['git', 'push'], 1, b'remote: ---------------------------------------------------------- \nremote: Sorry, your push was rejected during YAML metadata verification: \nremote: - Error: "license" does not match any of the allowed types \nremote: ---------------------------------------------------------- \nremote: Please find the documentation at: \nremote: https://huggingface.co/docs/hub/models-cards#model-card-metadata \nremote: ---------------------------------------------------------- \nTo [https://huggingface.co/datasets/scifact.git\n](https://huggingface.co/datasets/scifact.git/n) ! [remote rejected] main -> main (pre-receive hook declined)\nerror: failed to push some refs to \'[https://huggingface.co/datasets/scifact.git\](https://huggingface.co/datasets/scifact.git/)'', b'')

```

We are reviewing sync issues in previous commits to recover them and repushing to the Hub.

TODO: Review

- [x] #4941

- scifact

- [x] #4931

- scifact

- [x] #4753

- wikipedia

- [x] #4554

- wmt17, wmt19, wmt_t2t

- Fixed with "Release 2.4.0" commit: https://github.com/huggingface/datasets/commit/401d4c4f9b9594cb6527c599c0e7a72ce1a0ea49

- https://huggingface.co/datasets/wmt17/commit/5c0afa83fbbd3508ff7627c07f1b27756d1379ea

- https://huggingface.co/datasets/wmt19/commit/b8ad5bf1960208a376a0ab20bc8eac9638f7b400

- https://huggingface.co/datasets/wmt_t2t/commit/b6d67191804dd0933476fede36754a436b48d1fc

- [x] #4607

- [x] #4416

- lccc

- Fixed with "Release 2.3.0" commit: https://huggingface.co/datasets/lccc/commit/8b1f8cf425b5653a0a4357a53205aac82ce038d1

- [x] #4367

| {

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/albertvillanova",

"id": 8515462,

"login": "albertvillanova",

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"type": "User",

"url": "https://api.github.com/users/albertvillanova",

"user_view_type": "public"

} | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5090/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5090/timeline | null | completed | null | null | 18.594444 | 2,526 |

https://api.github.com/repos/huggingface/datasets/issues/5086 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5086/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5086/comments | https://api.github.com/repos/huggingface/datasets/issues/5086/events | https://github.com/huggingface/datasets/issues/5086 | 1,400,216,975 | I_kwDODunzps5TdZ2P | 5,086 | HTTPError: 404 Client Error: Not Found for url | {

"avatar_url": "https://avatars.githubusercontent.com/u/54015474?v=4",

"events_url": "https://api.github.com/users/keyuchen21/events{/privacy}",

"followers_url": "https://api.github.com/users/keyuchen21/followers",

"following_url": "https://api.github.com/users/keyuchen21/following{/other_user}",

"gists_url": "https://api.github.com/users/keyuchen21/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/keyuchen21",

"id": 54015474,

"login": "keyuchen21",

"node_id": "MDQ6VXNlcjU0MDE1NDc0",

"organizations_url": "https://api.github.com/users/keyuchen21/orgs",

"received_events_url": "https://api.github.com/users/keyuchen21/received_events",

"repos_url": "https://api.github.com/users/keyuchen21/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/keyuchen21/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/keyuchen21/subscriptions",

"type": "User",

"url": "https://api.github.com/users/keyuchen21",

"user_view_type": "public"

} | [

{

"color": "d73a4a",

"default": true,

"description": "Something isn't working",

"id": 1935892857,

"name": "bug",

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug"

}

] | closed | false | null | [] | null | [

"FYI @lewtun ",

"Hi @km5ar, thanks for reporting.\r\n\r\nThis should be fixed in the notebook:\r\n- the filename `datasets-issues-with-hf-doc-builder.jsonl` no longer exists on the repo; instead, current filename is `datasets-issues-with-comments.jsonl`\r\n- see: https://huggingface.co/datasets/lewtun/github-issu... | 2022-10-06T19:48:58Z | 2022-10-07T15:12:01Z | 2022-10-07T15:12:01Z | NONE | null | null | {

"completed": 0,

"percent_completed": 0,

"total": 0

} | ## Describe the bug

I was following chap 5 from huggingface course: https://huggingface.co/course/chapter5/6?fw=tf

However, I'm not able to download the datasets, with a 404 erros

<img width="1160" alt="iShot2022-10-06_15 54 50" src="https://user-images.githubusercontent.com/54015474/194406327-ae62c2f3-1da5-4686-8631-13d879a0edee.png">

## Steps to reproduce the bug

```python

from huggingface_hub import hf_hub_url

data_files = hf_hub_url(

repo_id="lewtun/github-issues",

filename="datasets-issues-with-hf-doc-builder.jsonl",

repo_type="dataset",

)

from datasets import load_dataset

issues_dataset = load_dataset("json", data_files=data_files, split="train")

issues_dataset

```

## Environment info

<!-- You can run the command `datasets-cli env` and copy-and-paste its output below. -->

- `datasets` version: 2.5.2

- Platform: macOS-10.16-x86_64-i386-64bit

- Python version: 3.9.12

- PyArrow version: 9.0.0

- Pandas version: 1.4.4

| {

"avatar_url": "https://avatars.githubusercontent.com/u/26859204?v=4",

"events_url": "https://api.github.com/users/lewtun/events{/privacy}",

"followers_url": "https://api.github.com/users/lewtun/followers",

"following_url": "https://api.github.com/users/lewtun/following{/other_user}",

"gists_url": "https://api.github.com/users/lewtun/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/lewtun",

"id": 26859204,

"login": "lewtun",

"node_id": "MDQ6VXNlcjI2ODU5MjA0",

"organizations_url": "https://api.github.com/users/lewtun/orgs",

"received_events_url": "https://api.github.com/users/lewtun/received_events",

"repos_url": "https://api.github.com/users/lewtun/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/lewtun/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lewtun/subscriptions",

"type": "User",

"url": "https://api.github.com/users/lewtun",

"user_view_type": "public"

} | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5086/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5086/timeline | null | completed | null | null | 19.384167 | 2,530 |

https://api.github.com/repos/huggingface/datasets/issues/5085 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5085/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5085/comments | https://api.github.com/repos/huggingface/datasets/issues/5085/events | https://github.com/huggingface/datasets/issues/5085 | 1,400,113,569 | I_kwDODunzps5TdAmh | 5,085 | Filtering on an empty dataset returns a corrupted dataset. | {

"avatar_url": "https://avatars.githubusercontent.com/u/36087158?v=4",

"events_url": "https://api.github.com/users/gabegma/events{/privacy}",

"followers_url": "https://api.github.com/users/gabegma/followers",

"following_url": "https://api.github.com/users/gabegma/following{/other_user}",

"gists_url": "https://api.github.com/users/gabegma/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/gabegma",

"id": 36087158,

"login": "gabegma",

"node_id": "MDQ6VXNlcjM2MDg3MTU4",

"organizations_url": "https://api.github.com/users/gabegma/orgs",

"received_events_url": "https://api.github.com/users/gabegma/received_events",

"repos_url": "https://api.github.com/users/gabegma/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/gabegma/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/gabegma/subscriptions",

"type": "User",

"url": "https://api.github.com/users/gabegma",

"user_view_type": "public"

} | [

{

"color": "d73a4a",

"default": true,

"description": "Something isn't working",

"id": 1935892857,

"name": "bug",

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug"

},

{

"color": "DF8D62",

"default": false,

"descrip... | closed | false | {

"avatar_url": "https://avatars.githubusercontent.com/u/23029765?v=4",

"events_url": "https://api.github.com/users/Mouhanedg56/events{/privacy}",

"followers_url": "https://api.github.com/users/Mouhanedg56/followers",

"following_url": "https://api.github.com/users/Mouhanedg56/following{/other_user}",

"gists_url": "https://api.github.com/users/Mouhanedg56/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/Mouhanedg56",

"id": 23029765,

"login": "Mouhanedg56",

"node_id": "MDQ6VXNlcjIzMDI5NzY1",

"organizations_url": "https://api.github.com/users/Mouhanedg56/orgs",

"received_events_url": "https://api.github.com/users/Mouhanedg56/received_events",

"repos_url": "https://api.github.com/users/Mouhanedg56/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/Mouhanedg56/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/Mouhanedg56/subscriptions",

"type": "User",

"url": "https://api.github.com/users/Mouhanedg56",

"user_view_type": "public"

} | [

{

"avatar_url": "https://avatars.githubusercontent.com/u/23029765?v=4",

"events_url": "https://api.github.com/users/Mouhanedg56/events{/privacy}",

"followers_url": "https://api.github.com/users/Mouhanedg56/followers",

"following_url": "https://api.github.com/users/Mouhanedg56/following{/other_user}"... | null | [

"~~It seems like #5043 fix (merged recently) is the root cause of such behaviour. When we empty indices mapping (because the dataset length equals to zero), we can no longer get column item like: `ds_filter_2['sentence']` which uses\r\n`ds_filter_1._indices.column(0)`~~\r\n\r\n**UPDATE:**\r\nEmpty datasets are retu... | 2022-10-06T18:18:49Z | 2022-10-07T19:06:02Z | 2022-10-07T18:40:26Z | NONE | null | null | {

"completed": 0,

"percent_completed": 0,

"total": 0

} | ## Describe the bug

When filtering a dataset twice, where the first result is an empty dataset, the second dataset seems corrupted.

## Steps to reproduce the bug

```python

datasets = load_dataset("glue", "sst2")

dataset_split = datasets['validation']

ds_filter_1 = dataset_split.filter(lambda x: False) # Some filtering condition that leads to an empty dataset

assert ds_filter_1.num_rows == 0

sentences = ds_filter_1['sentence']

assert len(sentences) == 0

ds_filter_2 = ds_filter_1.filter(lambda x: False) # Some other filtering condition

assert ds_filter_2.num_rows == 0

assert 'sentence' in ds_filter_2.column_names

sentences = ds_filter_2['sentence']

```

## Expected results

The last line should be returning an empty list, same as 4 lines above.

## Actual results

The last line currently raises `IndexError: index out of bounds`.

## Environment info

<!-- You can run the command `datasets-cli env` and copy-and-paste its output below. -->

- `datasets` version: 2.5.2

- Platform: macOS-11.6.6-x86_64-i386-64bit

- Python version: 3.9.11

- PyArrow version: 7.0.0

- Pandas version: 1.4.1

| {

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/lhoestq",

"id": 42851186,

"login": "lhoestq",

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"type": "User",

"url": "https://api.github.com/users/lhoestq",

"user_view_type": "public"

} | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 3,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 3,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5085/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5085/timeline | null | completed | null | null | 24.360278 | 2,531 |

https://api.github.com/repos/huggingface/datasets/issues/5083 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5083/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5083/comments | https://api.github.com/repos/huggingface/datasets/issues/5083/events | https://github.com/huggingface/datasets/issues/5083 | 1,399,842,514 | I_kwDODunzps5Tb-bS | 5,083 | Support numpy/torch/tf/jax formatting for IterableDataset | {

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/lhoestq",

"id": 42851186,

"login": "lhoestq",

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"type": "User",

"url": "https://api.github.com/users/lhoestq",

"user_view_type": "public"

} | [

{

"color": "a2eeef",

"default": true,

"description": "New feature or request",

"id": 1935892871,

"name": "enhancement",

"node_id": "MDU6TGFiZWwxOTM1ODkyODcx",

"url": "https://api.github.com/repos/huggingface/datasets/labels/enhancement"

},

{

"color": "fef2c0",

"default": fals... | closed | false | {

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/lhoestq",

"id": 42851186,

"login": "lhoestq",

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"type": "User",

"url": "https://api.github.com/users/lhoestq",

"user_view_type": "public"

} | [

{

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists... | null | [

"hii @lhoestq, can you assign this issue to me? Though i am new to open source still I would love to put my best foot forward. I can see there isn't anyone right now assigned to this issue.",

"Hi @zutarich ! This issue was fixed by #5852 - sorry I forgot to close it\r\n\r\nFeel free to look for other issues and p... | 2022-10-06T15:14:58Z | 2023-10-09T12:42:15Z | 2023-10-09T12:42:15Z | MEMBER | null | null | {

"completed": 0,

"percent_completed": 0,

"total": 0

} | Right now `IterableDataset` doesn't do any formatting.

In particular this code should return a numpy array:

```python

from datasets import load_dataset

ds = load_dataset("imagenet-1k", split="train", streaming=True).with_format("np")

print(next(iter(ds))["image"])

```

Right now it returns a PIL.Image.

Setting `streaming=False` does return a numpy array after #5072 | {

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/lhoestq",

"id": 42851186,

"login": "lhoestq",

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"type": "User",

"url": "https://api.github.com/users/lhoestq",

"user_view_type": "public"

} | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5083/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5083/timeline | null | completed | null | null | 8,829.454722 | 2,533 |

https://api.github.com/repos/huggingface/datasets/issues/5075 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5075/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5075/comments | https://api.github.com/repos/huggingface/datasets/issues/5075/events | https://github.com/huggingface/datasets/issues/5075 | 1,397,865,501 | I_kwDODunzps5TUbwd | 5,075 | Throw EnvironmentError when token is not present | {

"avatar_url": "https://avatars.githubusercontent.com/u/47462742?v=4",

"events_url": "https://api.github.com/users/mariosasko/events{/privacy}",

"followers_url": "https://api.github.com/users/mariosasko/followers",

"following_url": "https://api.github.com/users/mariosasko/following{/other_user}",

"gists_url": "https://api.github.com/users/mariosasko/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/mariosasko",

"id": 47462742,

"login": "mariosasko",

"node_id": "MDQ6VXNlcjQ3NDYyNzQy",

"organizations_url": "https://api.github.com/users/mariosasko/orgs",

"received_events_url": "https://api.github.com/users/mariosasko/received_events",

"repos_url": "https://api.github.com/users/mariosasko/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/mariosasko/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/mariosasko/subscriptions",

"type": "User",

"url": "https://api.github.com/users/mariosasko",

"user_view_type": "public"

} | [

{

"color": "7057ff",

"default": true,

"description": "Good for newcomers",

"id": 1935892877,

"name": "good first issue",

"node_id": "MDU6TGFiZWwxOTM1ODkyODc3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/good%20first%20issue"

},

{

"color": "DF8D62",

"defa... | closed | false | null | [] | null | [

"@mariosasko I've raised a PR #5076 against this issue. Please help to review. Thanks."

] | 2022-10-05T14:14:18Z | 2022-10-07T14:33:28Z | 2022-10-07T14:33:28Z | COLLABORATOR | null | null | {

"completed": 0,

"percent_completed": 0,

"total": 0

} | Throw EnvironmentError instead of OSError ([link](https://github.com/huggingface/datasets/blob/6ad430ba0cdeeb601170f732d4bd977f5c04594d/src/datasets/arrow_dataset.py#L4306) to the line) in `push_to_hub` when the Hub token is not present. | {

"avatar_url": "https://avatars.githubusercontent.com/u/47462742?v=4",

"events_url": "https://api.github.com/users/mariosasko/events{/privacy}",

"followers_url": "https://api.github.com/users/mariosasko/followers",

"following_url": "https://api.github.com/users/mariosasko/following{/other_user}",

"gists_url": "https://api.github.com/users/mariosasko/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/mariosasko",

"id": 47462742,

"login": "mariosasko",

"node_id": "MDQ6VXNlcjQ3NDYyNzQy",

"organizations_url": "https://api.github.com/users/mariosasko/orgs",

"received_events_url": "https://api.github.com/users/mariosasko/received_events",

"repos_url": "https://api.github.com/users/mariosasko/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/mariosasko/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/mariosasko/subscriptions",

"type": "User",

"url": "https://api.github.com/users/mariosasko",

"user_view_type": "public"

} | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5075/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5075/timeline | null | completed | null | null | 48.319444 | 2,541 |

https://api.github.com/repos/huggingface/datasets/issues/5074 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5074/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5074/comments | https://api.github.com/repos/huggingface/datasets/issues/5074/events | https://github.com/huggingface/datasets/issues/5074 | 1,397,850,352 | I_kwDODunzps5TUYDw | 5,074 | Replace AssertionErrors with more meaningful errors | {

"avatar_url": "https://avatars.githubusercontent.com/u/47462742?v=4",

"events_url": "https://api.github.com/users/mariosasko/events{/privacy}",

"followers_url": "https://api.github.com/users/mariosasko/followers",

"following_url": "https://api.github.com/users/mariosasko/following{/other_user}",

"gists_url": "https://api.github.com/users/mariosasko/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/mariosasko",

"id": 47462742,

"login": "mariosasko",

"node_id": "MDQ6VXNlcjQ3NDYyNzQy",

"organizations_url": "https://api.github.com/users/mariosasko/orgs",

"received_events_url": "https://api.github.com/users/mariosasko/received_events",

"repos_url": "https://api.github.com/users/mariosasko/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/mariosasko/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/mariosasko/subscriptions",

"type": "User",

"url": "https://api.github.com/users/mariosasko",

"user_view_type": "public"

} | [

{

"color": "7057ff",

"default": true,

"description": "Good for newcomers",

"id": 1935892877,

"name": "good first issue",

"node_id": "MDU6TGFiZWwxOTM1ODkyODc3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/good%20first%20issue"

},

{

"color": "DF8D62",

"defa... | closed | false | {

"avatar_url": "https://avatars.githubusercontent.com/u/20004072?v=4",

"events_url": "https://api.github.com/users/galbwe/events{/privacy}",

"followers_url": "https://api.github.com/users/galbwe/followers",

"following_url": "https://api.github.com/users/galbwe/following{/other_user}",

"gists_url": "https://api.github.com/users/galbwe/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/galbwe",

"id": 20004072,

"login": "galbwe",

"node_id": "MDQ6VXNlcjIwMDA0MDcy",

"organizations_url": "https://api.github.com/users/galbwe/orgs",

"received_events_url": "https://api.github.com/users/galbwe/received_events",

"repos_url": "https://api.github.com/users/galbwe/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/galbwe/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/galbwe/subscriptions",

"type": "User",

"url": "https://api.github.com/users/galbwe",

"user_view_type": "public"

} | [

{

"avatar_url": "https://avatars.githubusercontent.com/u/20004072?v=4",

"events_url": "https://api.github.com/users/galbwe/events{/privacy}",

"followers_url": "https://api.github.com/users/galbwe/followers",

"following_url": "https://api.github.com/users/galbwe/following{/other_user}",

"gists_ur... | null | [

"Hi, can I pick up this issue?",

"#self-assign",

"Looks like the top-level `datasource` directory was removed when https://github.com/huggingface/datasets/pull/4974 was merged, so there are 3 source files to fix."

] | 2022-10-05T14:03:55Z | 2022-10-07T14:33:11Z | 2022-10-07T14:33:11Z | COLLABORATOR | null | null | {

"completed": 0,

"percent_completed": 0,

"total": 0

} | Replace the AssertionErrors with more meaningful errors such as ValueError, TypeError, etc.

The files with AssertionErrors that need to be replaced:

```

src/datasets/arrow_reader.py

src/datasets/builder.py

src/datasets/utils/version.py

``` | {

"avatar_url": "https://avatars.githubusercontent.com/u/47462742?v=4",

"events_url": "https://api.github.com/users/mariosasko/events{/privacy}",

"followers_url": "https://api.github.com/users/mariosasko/followers",

"following_url": "https://api.github.com/users/mariosasko/following{/other_user}",

"gists_url": "https://api.github.com/users/mariosasko/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/mariosasko",

"id": 47462742,

"login": "mariosasko",

"node_id": "MDQ6VXNlcjQ3NDYyNzQy",

"organizations_url": "https://api.github.com/users/mariosasko/orgs",

"received_events_url": "https://api.github.com/users/mariosasko/received_events",

"repos_url": "https://api.github.com/users/mariosasko/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/mariosasko/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/mariosasko/subscriptions",

"type": "User",

"url": "https://api.github.com/users/mariosasko",

"user_view_type": "public"

} | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5074/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5074/timeline | null | completed | null | null | 48.487778 | 2,542 |

https://api.github.com/repos/huggingface/datasets/issues/5070 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5070/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5070/comments | https://api.github.com/repos/huggingface/datasets/issues/5070/events | https://github.com/huggingface/datasets/issues/5070 | 1,396,765,647 | I_kwDODunzps5TQPPP | 5,070 | Support default config name when no builder configs | {

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/albertvillanova",

"id": 8515462,

"login": "albertvillanova",

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"type": "User",

"url": "https://api.github.com/users/albertvillanova",

"user_view_type": "public"

} | [

{

"color": "a2eeef",

"default": true,

"description": "New feature or request",

"id": 1935892871,

"name": "enhancement",

"node_id": "MDU6TGFiZWwxOTM1ODkyODcx",

"url": "https://api.github.com/repos/huggingface/datasets/labels/enhancement"

}

] | closed | false | {

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/albertvillanova",

"id": 8515462,

"login": "albertvillanova",

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"type": "User",

"url": "https://api.github.com/users/albertvillanova",

"user_view_type": "public"

} | [

{

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/o... | null | [

"Thank you for creating this feature request, Albert.\r\n\r\nFor context this is the datatest where Albert has been helping me to switch to on-the-fly split config https://huggingface.co/datasets/HuggingFaceM4/cm4-synthetic-testing\r\n\r\nand the attempt to switch on-the-fly splits was here: https://huggingface.co/... | 2022-10-04T19:49:35Z | 2022-10-06T14:40:26Z | 2022-10-06T14:40:26Z | MEMBER | null | null | {

"completed": 0,

"percent_completed": 0,

"total": 0

} | **Is your feature request related to a problem? Please describe.**

As discussed with @stas00, we could support defining a default config name, even if no predefined allowed config names are set. That is, support `DEFAULT_CONFIG_NAME`, even when `BUILDER_CONFIGS` is not defined.

**Additional context**

In order to support creating configs on the fly **by name** (not using kwargs), the list of allowed builder configs `BUILDER_CONFIGS` must not be set.

However, if so, then `DEFAULT_CONFIG_NAME` is not supported.

| {

"avatar_url": "https://avatars.githubusercontent.com/u/8515462?v=4",

"events_url": "https://api.github.com/users/albertvillanova/events{/privacy}",

"followers_url": "https://api.github.com/users/albertvillanova/followers",

"following_url": "https://api.github.com/users/albertvillanova/following{/other_user}",

"gists_url": "https://api.github.com/users/albertvillanova/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/albertvillanova",

"id": 8515462,

"login": "albertvillanova",

"node_id": "MDQ6VXNlcjg1MTU0NjI=",

"organizations_url": "https://api.github.com/users/albertvillanova/orgs",

"received_events_url": "https://api.github.com/users/albertvillanova/received_events",

"repos_url": "https://api.github.com/users/albertvillanova/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/albertvillanova/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/albertvillanova/subscriptions",

"type": "User",

"url": "https://api.github.com/users/albertvillanova",

"user_view_type": "public"

} | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 1,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 1,

"url": "https://api.github.com/repos/huggingface/datasets/issues/5070/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/5070/timeline | null | completed | null | null | 42.8475 | 2,546 |

https://api.github.com/repos/huggingface/datasets/issues/5061 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/5061/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/5061/comments | https://api.github.com/repos/huggingface/datasets/issues/5061/events | https://github.com/huggingface/datasets/issues/5061 | 1,395,476,770 | I_kwDODunzps5TLUki | 5,061 | `_pickle.PicklingError: logger cannot be pickled` in multiprocessing `map` | {

"avatar_url": "https://avatars.githubusercontent.com/u/11954789?v=4",

"events_url": "https://api.github.com/users/ZhaofengWu/events{/privacy}",

"followers_url": "https://api.github.com/users/ZhaofengWu/followers",

"following_url": "https://api.github.com/users/ZhaofengWu/following{/other_user}",

"gists_url": "https://api.github.com/users/ZhaofengWu/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/ZhaofengWu",

"id": 11954789,

"login": "ZhaofengWu",

"node_id": "MDQ6VXNlcjExOTU0Nzg5",

"organizations_url": "https://api.github.com/users/ZhaofengWu/orgs",

"received_events_url": "https://api.github.com/users/ZhaofengWu/received_events",

"repos_url": "https://api.github.com/users/ZhaofengWu/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/ZhaofengWu/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/ZhaofengWu/subscriptions",

"type": "User",

"url": "https://api.github.com/users/ZhaofengWu",

"user_view_type": "public"

} | [

{

"color": "d73a4a",

"default": true,

"description": "Something isn't working",

"id": 1935892857,

"name": "bug",

"node_id": "MDU6TGFiZWwxOTM1ODkyODU3",

"url": "https://api.github.com/repos/huggingface/datasets/labels/bug"

}

] | closed | false | null | [] | null | [

"This is maybe related to python 3.10, do you think you could try on 3.8 ?\r\n\r\nIn the meantime we'll keep improving the support for 3.10. Let me add a dedicated CI",

"I did some binary search and seems like the root cause is either `multiprocess` or `dill`. python 3.10 is fine. Specifically:\r\n- `multiprocess... | 2022-10-03T23:51:38Z | 2023-07-21T14:43:35Z | 2023-07-21T14:43:34Z | NONE | null | null | {

"completed": 0,

"percent_completed": 0,

"total": 0

} | ## Describe the bug

When I `map` with multiple processes, this error occurs. The `.name` of the `logger` that fails to pickle in the final line is `datasets.fingerprint`.

```

File "~/project/dataset.py", line 204, in <dictcomp>

split: dataset.map(

File ".../site-packages/datasets/arrow_dataset.py", line 2489, in map

transformed_shards[index] = async_result.get()

File ".../site-packages/multiprocess/pool.py", line 771, in get

raise self._value

File ".../site-packages/multiprocess/pool.py", line 537, in _handle_tasks

put(task)

File ".../site-packages/multiprocess/connection.py", line 214, in send

self._send_bytes(_ForkingPickler.dumps(obj))

File ".../site-packages/multiprocess/reduction.py", line 54, in dumps

cls(buf, protocol, *args, **kwds).dump(obj)

File ".../site-packages/dill/_dill.py", line 620, in dump

StockPickler.dump(self, obj)

File ".../pickle.py", line 487, in dump

self.save(obj)

File ".../pickle.py", line 560, in save

f(self, obj) # Call unbound method with explicit self

File ".../pickle.py", line 902, in save_tuple

save(element)

File ".../pickle.py", line 560, in save