title stringlengths 1 290 | body stringlengths 0 228k ⌀ | html_url stringlengths 46 51 | comments list | pull_request dict | number int64 1 5.59k | is_pull_request bool 2

classes |

|---|---|---|---|---|---|---|

Fail to process SQuADv1.1 datasets with max_seq_length=128, doc_stride=96. | ## Describe the bug

datasets fail to process SQuADv1.1 with max_seq_length=128, doc_stride=96 when calling datasets["train"].train_dataset.map().

## Steps to reproduce the bug

I used huggingface[ TF2 question-answering examples](https://github.com/huggingface/transformers/tree/main/examples/tensorflow/question-a... | https://github.com/huggingface/datasets/issues/4769 | [] | null | 4,769 | false |

Unpin rouge_score test dependency | Once `rouge-score` has made the 0.1.2 release to fix their issue https://github.com/google-research/google-research/issues/1212, we can unpin it.

Related to:

- #4735 | https://github.com/huggingface/datasets/pull/4768 | [

"_The documentation is not available anymore as the PR was closed or merged._"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4768",

"html_url": "https://github.com/huggingface/datasets/pull/4768",

"diff_url": "https://github.com/huggingface/datasets/pull/4768.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4768.patch",

"merged_at": "2022-07-29T16:29... | 4,768 | true |

Add 2.4.0 version added to docstrings | null | https://github.com/huggingface/datasets/pull/4767 | [

"_The documentation is not available anymore as the PR was closed or merged._"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4767",

"html_url": "https://github.com/huggingface/datasets/pull/4767",

"diff_url": "https://github.com/huggingface/datasets/pull/4767.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4767.patch",

"merged_at": "2022-07-29T11:03... | 4,767 | true |

Dataset Viewer issue for openclimatefix/goes-mrms | ### Link

_No response_

### Description

_No response_

### Owner

_No response_ | https://github.com/huggingface/datasets/issues/4766 | [

"Thanks for reporting, @cheaterHy.\r\n\r\nThe cause of this issue is a misalignment between the names of the repo (`goes-mrms`, with hyphen) and its Python loading scrip file (`goes_mrms.py`, with underscore).\r\n\r\nI've opened an Issue discussion in their repo: https://huggingface.co/datasets/openclimatefix/goes-... | null | 4,766 | false |

Fix version in map_nested docstring | After latest release, `map_nested` docstring needs being updated with the right version for versionchanged and versionadded. | https://github.com/huggingface/datasets/pull/4765 | [

"_The documentation is not available anymore as the PR was closed or merged._"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4765",

"html_url": "https://github.com/huggingface/datasets/pull/4765",

"diff_url": "https://github.com/huggingface/datasets/pull/4765.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4765.patch",

"merged_at": "2022-07-29T11:38... | 4,765 | true |

Update CI badge | Replace the old CircleCI badge with a new one for GH Actions. | https://github.com/huggingface/datasets/pull/4764 | [

"_The documentation is not available anymore as the PR was closed or merged._"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4764",

"html_url": "https://github.com/huggingface/datasets/pull/4764",

"diff_url": "https://github.com/huggingface/datasets/pull/4764.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4764.patch",

"merged_at": "2022-07-29T11:23... | 4,764 | true |

More rigorous shape inference in to_tf_dataset | `tf.data` needs to know the shape of tensors emitted from a `tf.data.Dataset`. Although `None` dimensions are possible, overusing them can cause problems - Keras uses the dataset tensor spec at compile-time, and so saying that a dimension is `None` when it's actually constant can hurt performance, or even cause trainin... | https://github.com/huggingface/datasets/pull/4763 | [

"_The documentation is not available anymore as the PR was closed or merged._"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4763",

"html_url": "https://github.com/huggingface/datasets/pull/4763",

"diff_url": "https://github.com/huggingface/datasets/pull/4763.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4763.patch",

"merged_at": "2022-09-08T19:15... | 4,763 | true |

Improve features resolution in streaming | `IterableDataset._resolve_features` was returning the features sorted alphabetically by column name, which is not consistent with non-streaming. I changed this and used the order of columns from the data themselves. It was causing some inconsistencies in the dataset viewer as well.

I also fixed `interleave_datasets`... | https://github.com/huggingface/datasets/pull/4762 | [

"_The documentation is not available anymore as the PR was closed or merged._",

"Just took your comment into account @mariosasko , let me know if it's good for you now :)"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4762",

"html_url": "https://github.com/huggingface/datasets/pull/4762",

"diff_url": "https://github.com/huggingface/datasets/pull/4762.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4762.patch",

"merged_at": "2022-09-09T17:15... | 4,762 | true |

parallel searching in multi-gpu setting using faiss | While I notice that `add_faiss_index` has supported assigning multiple GPUs, I am still confused about how it works.

Does the `search-batch` function automatically parallelizes the input queries to different gpus?https://github.com/huggingface/datasets/blob/d76599bdd4d186b2e7c4f468b05766016055a0a5/src/datasets/sea... | https://github.com/huggingface/datasets/issues/4761 | [

"And I don't see any speed up when increasing the number of GPUs while calling `get_nearest_examples_batch`.",

"Hi ! Yes search_batch uses FAISS search which happens in parallel across the GPUs\r\n\r\n> And I don't see any speed up when increasing the number of GPUs while calling get_nearest_examples_batch.\r\n\r... | null | 4,761 | false |

Issue with offline mode | ## Describe the bug

I can't retrieve a cached dataset with offline mode enabled

## Steps to reproduce the bug

To reproduce my issue, first, you'll need to run a script that will cache the dataset

```python

import os

os.environ["HF_DATASETS_OFFLINE"] = "0"

import datasets

datasets.logging.set_verbosity_i... | https://github.com/huggingface/datasets/issues/4760 | [

"Hi @SaulLu, thanks for reporting.\r\n\r\nI think offline mode is not supported for datasets containing only data files (without any loading script). I'm having a look into this...",

"Thanks for your feedback! \r\n\r\nTo give you a little more info, if you don't set the offline mode flag, the script will load the... | null | 4,760 | false |

Dataset Viewer issue for Toygar/turkish-offensive-language-detection | ### Link

https://huggingface.co/datasets/Toygar/turkish-offensive-language-detection

### Description

Status code: 400

Exception: Status400Error

Message: The dataset does not exist.

Hi, I provided train.csv, test.csv and valid.csv files. However, viewer says dataset does not exist.

Should I n... | https://github.com/huggingface/datasets/issues/4759 | [

"I refreshed the dataset viewer manually, it's fixed now. Sorry for the inconvenience.\r\n<img width=\"1557\" alt=\"Capture d’écran 2022-07-28 à 09 17 39\" src=\"https://user-images.githubusercontent.com/1676121/181514666-92d7f8e1-ddc1-4769-84f3-f1edfdb902e8.png\">\r\n\r\n"

] | null | 4,759 | false |

Document better when relative paths are transformed to URLs | As discussed with @ydshieh, when passing a relative path as `data_dir` to `load_dataset` of a dataset hosted on the Hub, the relative path is transformed to the corresponding URL of the Hub dataset.

Currently, we mention this in our docs here: [Create a dataset loading script > Download data files and organize split... | https://github.com/huggingface/datasets/issues/4757 | [] | null | 4,757 | false |

Datasets.map causes incorrect overflow_to_sample_mapping when used with tokenizers and small batch size | ## Describe the bug

When using `tokenizer`, we can retrieve the field `overflow_to_sample_mapping`, since long samples will be overflown into multiple token sequences.

However, when tokenizing is done via `Dataset.map`, with `n_proc > 1`, the `overflow_to_sample_mapping` field is wrong. This seems to be because ea... | https://github.com/huggingface/datasets/issues/4755 | [

"I've built a minimal example that shows this bug without `n_proc`. It seems like it's a problem any way of using **tokenizers, `overflow_to_sample_mapping`, and Dataset.map, with a small batch size**:\r\n\r\n```\r\nimport datasets\r\nimport transformers\r\npretrained = 'deepset/tinyroberta-squad2'\r\ntokenizer = t... | null | 4,755 | false |

Remove "unkown" language tags | Following https://github.com/huggingface/datasets/pull/4753 there was still a "unknown" langauge tag in `wikipedia` so the job at https://github.com/huggingface/datasets/runs/7542567336?check_suite_focus=true failed for wikipedia | https://github.com/huggingface/datasets/pull/4754 | [

"_The documentation is not available anymore as the PR was closed or merged._"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4754",

"html_url": "https://github.com/huggingface/datasets/pull/4754",

"diff_url": "https://github.com/huggingface/datasets/pull/4754.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4754.patch",

"merged_at": "2022-07-27T14:51... | 4,754 | true |

Add `language_bcp47` tag | Following (internal) https://github.com/huggingface/moon-landing/pull/3509, we need to move the bcp47 tags to `language_bcp47` and keep the `language` tag for iso 639 1-2-3 codes. In particular I made sure that all the tags in `languages` are not longer than 3 characters. I moved the rest to `language_bcp47` and fixed ... | https://github.com/huggingface/datasets/pull/4753 | [

"_The documentation is not available anymore as the PR was closed or merged._"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4753",

"html_url": "https://github.com/huggingface/datasets/pull/4753",

"diff_url": "https://github.com/huggingface/datasets/pull/4753.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4753.patch",

"merged_at": "2022-07-27T14:37... | 4,753 | true |

DatasetInfo issue when testing multiple configs: mixed task_templates | ## Describe the bug

When running the `datasets-cli test` it would seem that some config properties in a DatasetInfo get mangled, leading to issues, e.g., about the ClassLabel.

## Steps to reproduce the bug

In summary, what I want to do is create three configs:

- unfiltered: no classlabel, no tasks. Gets data fr... | https://github.com/huggingface/datasets/issues/4752 | [

"I've narrowed down the issue to the `dataset_module_factory` which already creates a `dataset_infos.json` file down in the `.cache/modules/dataset_modules/..` folder. That JSON file already contains the wrong task_templates for `unfiltered`.",

"Ugh. Found the issue: apparently `datasets` was reusing the already ... | null | 4,752 | false |

Added dataset information in clinic oos dataset card | This PR aims to add relevant information like the Description, Language and citation information of the clinic oos dataset card. | https://github.com/huggingface/datasets/pull/4751 | [

"_The documentation is not available anymore as the PR was closed or merged._"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4751",

"html_url": "https://github.com/huggingface/datasets/pull/4751",

"diff_url": "https://github.com/huggingface/datasets/pull/4751.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4751.patch",

"merged_at": "2022-07-28T10:40... | 4,751 | true |

Easily create loading script for benchmark comprising multiple huggingface datasets | Hi,

I would like to create a loading script for a benchmark comprising multiple huggingface datasets.

The function _split_generators needs to return the files for the respective dataset. However, the files are not always in the same location for each dataset. I want to just make a wrapper dataset that provides a si... | https://github.com/huggingface/datasets/issues/4750 | [

"Hi ! I think the simplest is to copy paste the `_split_generators` code from the other datasets and do a bunch of if-else, as in the glue dataset: https://huggingface.co/datasets/glue/blob/main/glue.py#L467",

"Ok, I see. Thank you"

] | null | 4,750 | false |

Add image classification processing guide | This PR follows up on #4710 to separate the object detection and image classification guides. It expands a little more on the original guide to include a more complete example of loading and transforming a whole dataset. | https://github.com/huggingface/datasets/pull/4748 | [

"_The documentation is not available anymore as the PR was closed or merged._"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4748",

"html_url": "https://github.com/huggingface/datasets/pull/4748",

"diff_url": "https://github.com/huggingface/datasets/pull/4748.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4748.patch",

"merged_at": "2022-07-27T17:16... | 4,748 | true |

Shard parquet in `download_and_prepare` | Following https://github.com/huggingface/datasets/pull/4724 (needs to be merged first)

It's good practice to shard parquet files to enable parallelism with spark/dask/etc.

I added the `max_shard_size` parameter to `download_and_prepare` (default to 500MB for parquet, and None for arrow).

```python

from datase... | https://github.com/huggingface/datasets/pull/4747 | [

"_The documentation is not available anymore as the PR was closed or merged._",

"This is ready for review cc @mariosasko :) please let me know what you think !"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4747",

"html_url": "https://github.com/huggingface/datasets/pull/4747",

"diff_url": "https://github.com/huggingface/datasets/pull/4747.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4747.patch",

"merged_at": "2022-09-15T13:41... | 4,747 | true |

Dataset Viewer issue for yanekyuk/wikikey | ### Link

_No response_

### Description

_No response_

### Owner

_No response_ | https://github.com/huggingface/datasets/issues/4746 | [

"The dataset is empty, as far as I can tell: there are no files in the repository at https://huggingface.co/datasets/yanekyuk/wikikey/tree/main\r\n\r\nMaybe the viewer can display a better message for empty datasets",

"OK. Closing as it's not an error. We will work on making the error message a lot clearer."

] | null | 4,746 | false |

Allow `list_datasets` to include private datasets | I am working with a large collection of private datasets, it would be convenient for me to be able to list them.

I would envision extending the convention of using `use_auth_token` keyword argument to `list_datasets` function, then calling:

```

list_datasets(use_auth_token="my_token")

```

would return the li... | https://github.com/huggingface/datasets/issues/4745 | [

"Thanks for opening this issue :)\r\n\r\nIf it can help, I think you can already use `huggingface_hub` to achieve this:\r\n```python\r\n>>> from huggingface_hub import HfApi\r\n>>> [ds_info.id for ds_info in HfApi().list_datasets(use_auth_token=token) if ds_info.private]\r\n['bigscience/xxxx', 'bigscience-catalogue... | null | 4,745 | false |

Remove instructions to generate dummy data from our docs | In our docs, we indicate to generate the dummy data: https://huggingface.co/docs/datasets/dataset_script#testing-data-and-checksum-metadata

However:

- dummy data makes sense only for datasets in our GitHub repo: so that we can test their loading with our CI

- for datasets on the Hub:

- they do not pass any CI t... | https://github.com/huggingface/datasets/issues/4744 | [

"Note that for me personally, conceptually all the dummy data (even for \"canonical\" datasets) should be superseded by `datasets-server`, which performs some kind of CI/CD of datasets (including the canonical ones)",

"I totally agree: next step should be rethinking if dummy data makes sense for canonical dataset... | null | 4,744 | false |

Update map docs | This PR updates the `map` docs for processing text to include `return_tensors="np"` to make it run faster (see #4676). | https://github.com/huggingface/datasets/pull/4743 | [

"_The documentation is not available anymore as the PR was closed or merged._"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4743",

"html_url": "https://github.com/huggingface/datasets/pull/4743",

"diff_url": "https://github.com/huggingface/datasets/pull/4743.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4743.patch",

"merged_at": "2022-07-27T16:10... | 4,743 | true |

Dummy data nowhere to be found | ## Describe the bug

To finalize my dataset, I wanted to create dummy data as per the guide and I ran

```shell

datasets-cli dummy_data datasets/hebban-reviews --auto_generate

```

where hebban-reviews is [this repo](https://huggingface.co/datasets/BramVanroy/hebban-reviews). And even though the scripts runs an... | https://github.com/huggingface/datasets/issues/4742 | [

"Hi @BramVanroy, thanks for reporting.\r\n\r\nFirst of all, please note that you do not need the dummy data: this was the case when we were adding datasets to the `datasets` library (on this GitHub repo), so that we could test the correct loading of all datasets with our CI. However, this is no longer the case for ... | null | 4,742 | false |

Fix to dict conversion of `DatasetInfo`/`Features` | Fix #4681 | https://github.com/huggingface/datasets/pull/4741 | [

"_The documentation is not available anymore as the PR was closed or merged._"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4741",

"html_url": "https://github.com/huggingface/datasets/pull/4741",

"diff_url": "https://github.com/huggingface/datasets/pull/4741.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4741.patch",

"merged_at": "2022-07-25T12:37... | 4,741 | true |

Fix multiprocessing in map_nested | As previously discussed:

Before, multiprocessing was not used in `map_nested` if `num_proc` was greater than or equal to `len(iterable)`.

- Multiprocessing was not used e.g. when passing `num_proc=20` but having 19 files to download

- As by default, `DownloadManager` sets `num_proc=16`, before multiprocessing was ... | https://github.com/huggingface/datasets/pull/4740 | [

"_The documentation is not available anymore as the PR was closed or merged._",

"@lhoestq as a workaround to preserve previous behavior, the parameter `multiprocessing_min_length=16` is passed from `download` to `map_nested`, so that multiprocessing is only used if at least 16 files to be downloaded.\r\n\r\nNote ... | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4740",

"html_url": "https://github.com/huggingface/datasets/pull/4740",

"diff_url": "https://github.com/huggingface/datasets/pull/4740.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4740.patch",

"merged_at": "2022-07-28T10:40... | 4,740 | true |

Deprecate metrics | Deprecate metrics:

- deprecate public functions: `load_metric`, `list_metrics` and `inspect_metric`: docstring and warning

- test deprecation warnings are issues

- deprecate metrics in all docs

- remove mentions to metrics in docs and README

- deprecate internal functions/classes

Maybe we should also stop testi... | https://github.com/huggingface/datasets/pull/4739 | [

"_The documentation is not available anymore as the PR was closed or merged._",

"I mark this as Draft because the deprecated version number needs being updated after the latest release.",

"Perhaps now is the time to also update the `inspect_metric` from `evaluate` with the changes introduced in https://github.c... | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4739",

"html_url": "https://github.com/huggingface/datasets/pull/4739",

"diff_url": "https://github.com/huggingface/datasets/pull/4739.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4739.patch",

"merged_at": "2022-07-28T11:32... | 4,739 | true |

Use CI unit/integration tests | This PR:

- Implements separate unit/integration tests

- A fail in integration tests does not cancel the rest of the jobs

- We should implement more robust integration tests: work in progress in a subsequent PR

- For the moment, test involving network requests are marked as integration: to be evolved | https://github.com/huggingface/datasets/pull/4738 | [

"_The documentation is not available anymore as the PR was closed or merged._",

"I think this PR can be merged. Willing to see it in action.\r\n\r\nCC: @lhoestq "

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4738",

"html_url": "https://github.com/huggingface/datasets/pull/4738",

"diff_url": "https://github.com/huggingface/datasets/pull/4738.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4738.patch",

"merged_at": "2022-07-26T20:07... | 4,738 | true |

Download error on scene_parse_150 | ```

from datasets import load_dataset

dataset = load_dataset("scene_parse_150", "scene_parsing")

FileNotFoundError: Couldn't find file at http://data.csail.mit.edu/places/ADEchallenge/ADEChallengeData2016.zip

```

| https://github.com/huggingface/datasets/issues/4737 | [

"Hi! The server with the data seems to be down. I've reported this issue (https://github.com/CSAILVision/sceneparsing/issues/34) in the dataset repo. ",

"The URL seems to work now, and therefore the script as well."

] | null | 4,737 | false |

Dataset Viewer issue for deepklarity/huggingface-spaces-dataset | ### Link

https://huggingface.co/datasets/deepklarity/huggingface-spaces-dataset/viewer/deepklarity--huggingface-spaces-dataset/train

### Description

Hi Team,

I'm getting the following error on a uploaded dataset. I'm getting the same status for a couple of hours now. The dataset size is `<1MB` and the format is cs... | https://github.com/huggingface/datasets/issues/4736 | [

"Thanks for reporting. You're right, workers were under-provisioned due to a manual error, and the job queue was full. It's fixed now."

] | null | 4,736 | false |

Pin rouge_score test dependency | Temporarily pin `rouge_score` (to avoid latest version 0.7.0) until the issue is fixed.

Fix #4734 | https://github.com/huggingface/datasets/pull/4735 | [

"_The documentation is not available anymore as the PR was closed or merged._"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4735",

"html_url": "https://github.com/huggingface/datasets/pull/4735",

"diff_url": "https://github.com/huggingface/datasets/pull/4735.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4735.patch",

"merged_at": "2022-07-22T07:45... | 4,735 | true |

Package rouge-score cannot be imported | ## Describe the bug

After the today release of `rouge_score-0.0.7` it seems no longer importable. Our CI fails: https://github.com/huggingface/datasets/runs/7463218591?check_suite_focus=true

```

FAILED tests/test_dataset_common.py::LocalDatasetTest::test_builder_class_bigbench

FAILED tests/test_dataset_common.py::L... | https://github.com/huggingface/datasets/issues/4734 | [

"We have added a comment on an existing issue opened in their repo: https://github.com/google-research/google-research/issues/1212#issuecomment-1192267130\r\n- https://github.com/google-research/google-research/issues/1212"

] | null | 4,734 | false |

rouge metric | ## Describe the bug

A clear and concise description of what the bug is.

Loading Rouge metric gives error after latest rouge-score==0.0.7 release.

Downgrading rougemetric==0.0.4 works fine.

## Steps to reproduce the bug

```python

# Sample code to reproduce the bug

```

## Expected results

A clear and concis... | https://github.com/huggingface/datasets/issues/4733 | [

"Fixed by:\r\n- #4735"

] | null | 4,733 | false |

Document better that loading a dataset passing its name does not use the local script | As reported by @TrentBrick here https://github.com/huggingface/datasets/issues/4725#issuecomment-1191858596, it could be more clear that loading a dataset by passing its name does not use the (modified) local script of it.

What he did:

- he installed `datasets` from source

- he modified locally `datasets/the_pile/... | https://github.com/huggingface/datasets/issues/4732 | [

"Thanks for the feedback!\r\n\r\nI think since this issue is closely related to loading, I can add a clearer explanation under [Load > local loading script](https://huggingface.co/docs/datasets/main/en/loading#local-loading-script).",

"That makes sense but I think having a line about it under https://huggingface.... | null | 4,732 | false |

docs: ✏️ fix TranslationVariableLanguages example | null | https://github.com/huggingface/datasets/pull/4731 | [

"_The documentation is not available anymore as the PR was closed or merged._"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4731",

"html_url": "https://github.com/huggingface/datasets/pull/4731",

"diff_url": "https://github.com/huggingface/datasets/pull/4731.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4731.patch",

"merged_at": "2022-07-22T06:48... | 4,731 | true |

Loading imagenet-1k validation split takes much more RAM than expected | ## Describe the bug

Loading into memory the validation split of imagenet-1k takes much more RAM than expected. Assuming ImageNet-1k is 150 GB, split is 50000 validation images and 1,281,167 train images, I would expect only about 6 GB loaded in RAM.

## Steps to reproduce the bug

```python

from datasets import... | https://github.com/huggingface/datasets/issues/4730 | [

"My bad, `482 * 418 * 50000 * 3 / 1000000 = 30221 MB` ( https://stackoverflow.com/a/42979315 ).\r\n\r\nMeanwhile `256 * 256 * 50000 * 3 / 1000000 = 9830 MB`. We are loading the non-cropped images and that is why we take so much RAM."

] | null | 4,730 | false |

Refactor Hub tests | This PR refactors `test_upstream_hub` by removing unittests and using the following pytest Hub fixtures:

- `ci_hub_config`

- `set_ci_hub_access_token`: to replace setUp/tearDown

- `temporary_repo` context manager: to replace `try... finally`

- `cleanup_repo`: to delete repo accidentally created if one of the tests ... | https://github.com/huggingface/datasets/pull/4729 | [

"_The documentation is not available anymore as the PR was closed or merged._"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4729",

"html_url": "https://github.com/huggingface/datasets/pull/4729",

"diff_url": "https://github.com/huggingface/datasets/pull/4729.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4729.patch",

"merged_at": "2022-07-22T14:56... | 4,729 | true |

load_dataset gives "403" error when using Financial Phrasebank | I tried both codes below to download the financial phrasebank dataset (https://huggingface.co/datasets/financial_phrasebank) with the sentences_allagree subset. However, the code gives a 403 error when executed from multiple machines locally or on the cloud.

```

from datasets import load_dataset, DownloadMode

load... | https://github.com/huggingface/datasets/issues/4728 | [

"Hi @rohitvincent, thanks for reporting.\r\n\r\nUnfortunately I'm not able to reproduce your issue:\r\n```python\r\nIn [2]: from datasets import load_dataset, DownloadMode\r\n ...: load_dataset(path='financial_phrasebank',name='sentences_allagree', download_mode=\"force_redownload\")\r\nDownloading builder script... | null | 4,728 | false |

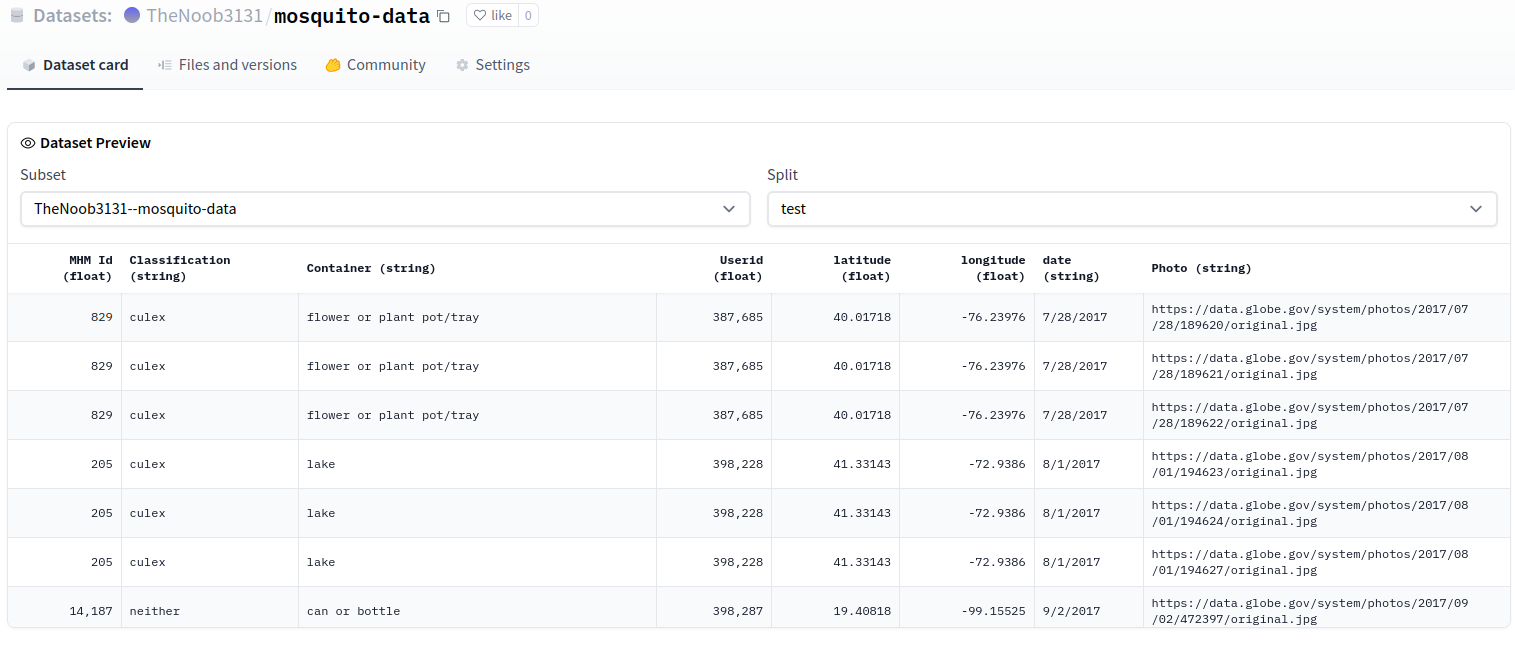

Dataset Viewer issue for TheNoob3131/mosquito-data | ### Link

https://huggingface.co/datasets/TheNoob3131/mosquito-data/viewer/TheNoob3131--mosquito-data/test

### Description

Dataset preview not showing with large files. Says 'split cache is empty' even though there are train and test splits.

### Owner

_No response_ | https://github.com/huggingface/datasets/issues/4727 | [

"The preview is working OK:\r\n\r\n\r\n\r\n"

] | null | 4,727 | false |

Fix broken link to the Hub | The Markdown link fails to render if it is in the same line as the `<span>`. This PR implements @mishig25's fix by using `<a href=" ">` instead.

| https://github.com/huggingface/datasets/pull/4726 | [

"_The documentation is not available anymore as the PR was closed or merged._"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4726",

"html_url": "https://github.com/huggingface/datasets/pull/4726",

"diff_url": "https://github.com/huggingface/datasets/pull/4726.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4726.patch",

"merged_at": "2022-07-21T08:00... | 4,726 | true |

the_pile datasets URL broken. | https://github.com/huggingface/datasets/pull/3627 changed the Eleuther AI Pile dataset URL from https://the-eye.eu/ to https://mystic.the-eye.eu/ but the latter is now broken and the former works again.

Note that when I git clone the repo and use `pip install -e .` and then edit the URL back the codebase doesn't se... | https://github.com/huggingface/datasets/issues/4725 | [

"Thanks for reporting, @TrentBrick. We are addressing the change with their data host server.\r\n\r\nOn the meantime, if you would like to work with your fixed local copy of the_pile script, you should use:\r\n```python\r\nload_dataset(\"path/to/your/local/the_pile/the_pile.py\",...\r\n```\r\ninstead of just `load_... | null | 4,725 | false |

Download and prepare as Parquet for cloud storage | Download a dataset as Parquet in a cloud storage can be useful for streaming mode and to use with spark/dask/ray.

This PR adds support for `fsspec` URIs like `s3://...`, `gcs://...` etc. and ads the `file_format` to save as parquet instead of arrow:

```python

from datasets import *

cache_dir = "s3://..."

build... | https://github.com/huggingface/datasets/pull/4724 | [

"_The documentation is not available anymore as the PR was closed or merged._",

"Added some docs for dask and took your comments into account\r\n\r\ncc @philschmid if you also want to take a look :)",

"Just noticed that it would be more convenient to pass the output dir to download_and_prepare directly, to bypa... | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4724",

"html_url": "https://github.com/huggingface/datasets/pull/4724",

"diff_url": "https://github.com/huggingface/datasets/pull/4724.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4724.patch",

"merged_at": "2022-09-05T17:25... | 4,724 | true |

Refactor conftest fixtures | Previously, fixture modules `hub_fixtures` and `s3_fixtures`:

- were both at the root test directory

- were imported using `import *`

- as a side effect, the modules `os` and `pytest` were imported from `s3_fixtures` into `conftest`

This PR:

- puts both fixture modules in a dedicated directory `fixtures`

- re... | https://github.com/huggingface/datasets/pull/4723 | [

"_The documentation is not available anymore as the PR was closed or merged._"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4723",

"html_url": "https://github.com/huggingface/datasets/pull/4723",

"diff_url": "https://github.com/huggingface/datasets/pull/4723.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4723.patch",

"merged_at": "2022-07-21T14:24... | 4,723 | true |

Docs: Fix same-page haslinks | `href="/docs/datasets/quickstart#audio"` implicitly goes to `href="/docs/datasets/{$LATEST_STABLE_VERSION}/quickstart#audio"`. Therefore, https://huggingface.co/docs/datasets/quickstart#audio #audio hashlink does not work since the new docs were not added to v2.3.2 (LATEST_STABLE_VERSION)

to preserve the version, it... | https://github.com/huggingface/datasets/pull/4722 | [

"_The documentation is not available anymore as the PR was closed or merged._"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4722",

"html_url": "https://github.com/huggingface/datasets/pull/4722",

"diff_url": "https://github.com/huggingface/datasets/pull/4722.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4722.patch",

"merged_at": "2022-07-20T16:49... | 4,722 | true |

PyArrow Dataset error when calling `load_dataset` | ## Describe the bug

I am fine tuning a wav2vec2 model following the script here using my own dataset: https://github.com/huggingface/transformers/blob/main/examples/pytorch/speech-recognition/run_speech_recognition_ctc.py

Loading my Audio dataset from the hub which was originally generated from disk results in th... | https://github.com/huggingface/datasets/issues/4721 | [

"Hi ! It looks like a bug in `pyarrow`. If you manage to end up with only one chunk per parquet file it should workaround this issue.\r\n\r\nTo achieve that you can try to lower the value of `max_shard_size` and also don't use `map` before `push_to_hub`.\r\n\r\nDo you have a minimum reproducible example that we can... | null | 4,721 | false |

Dataset Viewer issue for shamikbose89/lancaster_newsbooks | ### Link

https://huggingface.co/datasets/shamikbose89/lancaster_newsbooks

### Description

Status code: 400

Exception: ValueError

Message: Cannot seek streaming HTTP file

I am able to use the dataset loading script locally and it also runs when I'm using the one from the hub, but the viewer sti... | https://github.com/huggingface/datasets/issues/4720 | [

"It seems like the list of splits could not be obtained:\r\n\r\n```python\r\n>>> from datasets import get_dataset_split_names\r\n>>> get_dataset_split_names(\"shamikbose89/lancaster_newsbooks\", \"default\")\r\nUsing custom data configuration default\r\nTraceback (most recent call last):\r\n File \"/home/slesage/h... | null | 4,720 | false |

Issue loading TheNoob3131/mosquito-data dataset |

So my dataset is public in the Huggingface Hub, but when I try to load it using the load_dataset command, it shows that it is downloading the files, but throws a ValueError. When I went to my directory to ... | https://github.com/huggingface/datasets/issues/4719 | [

"I am also getting a ValueError: 'Couldn't cast' at the bottom. Is this because of some delimiter issue? My dataset is on the Huggingface Hub. If you could look at it, that would be greatly appreciated.",

"Hi @thenerd31, thanks for reporting.\r\n\r\nPlease note that your issue is not caused by the Hugging Face Da... | null | 4,719 | false |

Make Extractor accept Path as input | This PR:

- Makes `Extractor` accept instance of `Path` as input

- Removes unnecessary castings of `Path` to `str` | https://github.com/huggingface/datasets/pull/4718 | [

"_The documentation is not available anymore as the PR was closed or merged._"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4718",

"html_url": "https://github.com/huggingface/datasets/pull/4718",

"diff_url": "https://github.com/huggingface/datasets/pull/4718.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4718.patch",

"merged_at": "2022-07-22T13:29... | 4,718 | true |

Dataset Viewer issue for LawalAfeez/englishreview-ds-mini | ### Link

_No response_

### Description

Unable to view the split data

### Owner

_No response_ | https://github.com/huggingface/datasets/issues/4717 | [

"It's currently working, as far as I understand\r\n\r\nhttps://huggingface.co/datasets/LawalAfeez/englishreview-ds-mini/viewer/LawalAfeez--englishreview-ds-mini/train\r\n\r\n<img width=\"1556\" alt=\"Capture d’écran 2022-07-19 à 09 24 01\" src=\"https://user-images.githubusercontent.com/1676121/179761130-2d7980b9... | null | 4,717 | false |

Support "tags" yaml tag | Added the "tags" YAML tag, so that users can specify data domain/topics keywords for dataset search | https://github.com/huggingface/datasets/pull/4716 | [

"_The documentation is not available anymore as the PR was closed or merged._",

"IMO `DatasetMetadata` shouldn't crash with attributes that it doesn't know, btw",

"Yea this PR is mostly to have a validation that this field contains a list of strings.\r\n\r\nRegarding unknown fields, the tagging app currently re... | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4716",

"html_url": "https://github.com/huggingface/datasets/pull/4716",

"diff_url": "https://github.com/huggingface/datasets/pull/4716.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4716.patch",

"merged_at": "2022-07-20T13:31... | 4,716 | true |

Fix POS tags | We're now using `part-of-speech` and not `part-of-speech-tagging`, see discussion here: https://github.com/huggingface/datasets/commit/114c09aff2fa1519597b46fbcd5a8e0c0d3ae020#r78794777 | https://github.com/huggingface/datasets/pull/4715 | [

"_The documentation is not available anymore as the PR was closed or merged._",

"CI failures are about missing content in the dataset cards or bad tags, and this is unrelated to this PR. Merging :)"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4715",

"html_url": "https://github.com/huggingface/datasets/pull/4715",

"diff_url": "https://github.com/huggingface/datasets/pull/4715.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4715.patch",

"merged_at": "2022-07-19T12:41... | 4,715 | true |

Fix named split sorting and remove unnecessary casting | This PR:

- makes `NamedSplit` sortable: so that `sorted()` can be called on them

- removes unnecessary `sorted()` on `dict.keys()`: `dict_keys` view is already like a `set`

- removes unnecessary casting of `NamedSplit` to `str` | https://github.com/huggingface/datasets/pull/4714 | [

"_The documentation is not available anymore as the PR was closed or merged._",

"hahaha what a timing, I added my comment right after you merged x)\r\n\r\nyou can ignore my (nit), it's fine",

"Sorry, just too sync... :sweat_smile: "

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4714",

"html_url": "https://github.com/huggingface/datasets/pull/4714",

"diff_url": "https://github.com/huggingface/datasets/pull/4714.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4714.patch",

"merged_at": "2022-07-22T09:10... | 4,714 | true |

Document installation of sox OS dependency for audio | The `sox` OS package needs being installed manually using the distribution package manager.

This PR adds this explanation to the docs. | https://github.com/huggingface/datasets/pull/4713 | [

"_The documentation is not available anymore as the PR was closed or merged._"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4713",

"html_url": "https://github.com/huggingface/datasets/pull/4713",

"diff_url": "https://github.com/huggingface/datasets/pull/4713.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4713.patch",

"merged_at": "2022-07-21T08:04... | 4,713 | true |

Highlight non-commercial license in amazon_reviews_multi dataset card | Highlight that the licence granted by Amazon only covers non-commercial research use. | https://github.com/huggingface/datasets/pull/4712 | [

"_The documentation is not available anymore as the PR was closed or merged._"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4712",

"html_url": "https://github.com/huggingface/datasets/pull/4712",

"diff_url": "https://github.com/huggingface/datasets/pull/4712.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4712.patch",

"merged_at": "2022-07-27T15:57... | 4,712 | true |

Document how to create a dataset loading script for audio/vision | Currently, in our docs for Audio/Vision/Text, we explain how to:

- Load data

- Process data

However we only explain how to *Create a dataset loading script* for text data.

I think it would be useful that we add the same for Audio/Vision as these have some specificities different from Text.

See, for example:

... | https://github.com/huggingface/datasets/issues/4711 | [] | null | 4,711 | false |

Add object detection processing tutorial | The following adds a quick guide on how to process object detection datasets with `albumentations`. | https://github.com/huggingface/datasets/pull/4710 | [

"_The documentation is not available anymore as the PR was closed or merged._",

"Great idea! Now that we have more than one task, it makes sense to separate image classification and object detection so it'll be easier for users to follow.",

"@lhoestq do we want to do that in this PR, or should we merge it and l... | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4710",

"html_url": "https://github.com/huggingface/datasets/pull/4710",

"diff_url": "https://github.com/huggingface/datasets/pull/4710.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4710.patch",

"merged_at": "2022-07-21T19:56... | 4,710 | true |

WMT21 & WMT22 | ## Adding a Dataset

- **Name:** WMT21 & WMT22

- **Description:** We are going to have three tracks: two small tasks and a large task.

The small tracks evaluate translation between fairly related languages and English (all pairs). The large track uses 101 languages.

- **Paper:** /

- **Data:** https://statmt.org/wmt... | https://github.com/huggingface/datasets/issues/4709 | [

"Hi ! That would be awesome to have them indeed, thanks for opening this issue\r\n\r\nI just added you to the WMT org on the HF Hub if you're interested in adding those datasets.\r\n\r\nFeel free to create a dataset repository for each dataset and upload the data files there :) preferably in ZIP archives instead of... | null | 4,709 | false |

Fix require torchaudio and refactor test requirements | Currently there is a bug in `require_torchaudio` (indeed it is requiring `sox` instead):

```python

def require_torchaudio(test_case):

if find_spec("sox") is None:

...

```

The bug was introduced by:

- #3685

- Commit: https://github.com/huggingface/datasets/pull/3685/commits/b5a3e7122d49c4dcc9333b1d8d18a8... | https://github.com/huggingface/datasets/pull/4708 | [

"_The documentation is not available anymore as the PR was closed or merged._"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4708",

"html_url": "https://github.com/huggingface/datasets/pull/4708",

"diff_url": "https://github.com/huggingface/datasets/pull/4708.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4708.patch",

"merged_at": "2022-07-22T06:18... | 4,708 | true |

Dataset Viewer issue for TheNoob3131/mosquito-data | ### Link

_No response_

### Description

Getting this error when trying to view dataset preview:

Message: 401, message='Unauthorized', url=URL('https://huggingface.co/datasets/TheNoob3131/mosquito-data/resolve/8aceebd6c4a359d216d10ef020868bd9e8c986dd/0_Africa_train.csv')

### Owner

_No response_ | https://github.com/huggingface/datasets/issues/4707 | [

"Thanks for reporting. I refreshed the dataset viewer and it now works as expected.\r\n\r\nhttps://huggingface.co/datasets/TheNoob3131/mosquito-data\r\n\r\n<img width=\"1135\" alt=\"Capture d’écran 2022-07-18 à 13 15 22\" src=\"https://user-images.githubusercontent.com/1676121/179566497-e47f1a27-fd84-4a8d-9d7f-2e... | null | 4,707 | false |

Fix empty examples in xtreme dataset for bucc18 config | As reported in https://huggingface.co/muibk, there are empty examples in xtreme/bucc18.de

I applied your fix @mustaszewski

I also used a dict to make the dataset generation much faster | https://github.com/huggingface/datasets/pull/4706 | [

"_The documentation is not available anymore as the PR was closed or merged._",

"I guess the report link is this instead: https://huggingface.co/datasets/xtreme/discussions/1"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4706",

"html_url": "https://github.com/huggingface/datasets/pull/4706",

"diff_url": "https://github.com/huggingface/datasets/pull/4706.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4706.patch",

"merged_at": "2022-07-19T06:29... | 4,706 | true |

Fix crd3 | As reported in https://huggingface.co/datasets/crd3/discussions/1#62cc377073b2512b81662794, each split of the dataset was containing the same data. This issues comes from a bug in the dataset script

I fixed it and also uploaded the data to hf.co to make the dataset work in streaming mode | https://github.com/huggingface/datasets/pull/4705 | [

"_The documentation is not available anymore as the PR was closed or merged._"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4705",

"html_url": "https://github.com/huggingface/datasets/pull/4705",

"diff_url": "https://github.com/huggingface/datasets/pull/4705.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4705.patch",

"merged_at": "2022-07-21T17:06... | 4,705 | true |

Skip tests only for lz4/zstd params if not installed | Currently, if `zstandard` or `lz4` are not installed, `test_compression_filesystems` and `test_streaming_dl_manager_extract_all_supported_single_file_compression_types` are skipped for all compression format parameters.

This PR fixes these tests, so that if `zstandard` or `lz4` are not installed, the tests are skipp... | https://github.com/huggingface/datasets/pull/4704 | [

"_The documentation is not available anymore as the PR was closed or merged._"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4704",

"html_url": "https://github.com/huggingface/datasets/pull/4704",

"diff_url": "https://github.com/huggingface/datasets/pull/4704.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4704.patch",

"merged_at": "2022-07-19T12:49... | 4,704 | true |

Make cast in `from_pandas` more robust | Make the cast in `from_pandas` more robust (as it was done for the packaged modules in https://github.com/huggingface/datasets/pull/4364)

This should be useful in situations like [this one](https://discuss.huggingface.co/t/loading-custom-audio-dataset-and-fine-tuning-model/8836/4). | https://github.com/huggingface/datasets/pull/4703 | [

"_The documentation is not available anymore as the PR was closed or merged._"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4703",

"html_url": "https://github.com/huggingface/datasets/pull/4703",

"diff_url": "https://github.com/huggingface/datasets/pull/4703.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4703.patch",

"merged_at": "2022-07-22T11:05... | 4,703 | true |

Domain specific dataset discovery on the Hugging Face hub | **Is your feature request related to a problem? Please describe.**

## The problem

The datasets hub currently has `8,239` datasets. These datasets span a wide range of different modalities and tasks (currently with a bias towards textual data).

There are various ways of identifying datasets that may be releva... | https://github.com/huggingface/datasets/issues/4702 | [

"Hi! I added a link to this issue in our internal request for adding keywords/topics to the Hub, which is identical to the `topic tags` solution. The `collections` solution seems too complex (as you point out). Regarding the `domain tags` solution, we primarily focus on machine learning, so I'm not sure if it's a g... | null | 4,702 | false |

Added more information in the README about contributors of the Arabic Speech Corpus | Added more information in the README about contributors and encouraged reading the thesis for more infos | https://github.com/huggingface/datasets/pull/4701 | [] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4701",

"html_url": "https://github.com/huggingface/datasets/pull/4701",

"diff_url": "https://github.com/huggingface/datasets/pull/4701.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4701.patch",

"merged_at": "2022-07-28T10:33... | 4,701 | true |

Support extract lz4 compressed data files | null | https://github.com/huggingface/datasets/pull/4700 | [

"_The documentation is not available anymore as the PR was closed or merged._"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4700",

"html_url": "https://github.com/huggingface/datasets/pull/4700",

"diff_url": "https://github.com/huggingface/datasets/pull/4700.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4700.patch",

"merged_at": "2022-07-18T14:31... | 4,700 | true |

Fix Authentification Error while streaming | I fixed a few errors when it occurs while streaming the private dataset on the Huggingface Hub.

```

from datasets import load_dataset

dataset = load_dataset(<repo_id>, use_auth_token=<private_token>, streaming=True)

for d in dataset['train']:

print(d)

break # this is for checking

```

This code is an e... | https://github.com/huggingface/datasets/pull/4699 | [

"Hi, thanks for working on this, but the fix for this has already been merged in https://github.com/huggingface/datasets/pull/4608."

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4699",

"html_url": "https://github.com/huggingface/datasets/pull/4699",

"diff_url": "https://github.com/huggingface/datasets/pull/4699.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4699.patch",

"merged_at": null

} | 4,699 | true |

Enable streaming dataset to use the "all" split | Fixes #4637 | https://github.com/huggingface/datasets/pull/4698 | [

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_4698). All of your documentation changes will be reflected on that endpoint.",

"@albertvillanova \r\nAdding the validation split causes these two `assert_called_once` assertions to fail with `AssertionError: Expected 'ArrowWrit... | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4698",

"html_url": "https://github.com/huggingface/datasets/pull/4698",

"diff_url": "https://github.com/huggingface/datasets/pull/4698.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4698.patch",

"merged_at": null

} | 4,698 | true |

Trouble with streaming frgfm/imagenette vision dataset with TAR archive | ### Link

https://huggingface.co/datasets/frgfm/imagenette

### Description

Hello there :wave:

Thanks for the amazing work you've done with HF Datasets! I've just started playing with it, and managed to upload my first dataset. But for the second one, I'm having trouble with the preview since there is some archive... | https://github.com/huggingface/datasets/issues/4697 | [

"Hi @frgfm, thanks for reporting.\r\n\r\nAs the error message says, streaming mode is not supported out of the box when the dataset contains TAR archive files.\r\n\r\nTo make the dataset streamable, you have to use `dl_manager.iter_archive`.\r\n\r\nThere are several examples in other datasets, e.g. food101: https:/... | null | 4,697 | false |

Cannot load LinCE dataset | ## Describe the bug

Cannot load LinCE dataset due to a connection error

## Steps to reproduce the bug

```python

from datasets import load_dataset

dataset = load_dataset("lince", "ner_spaeng")

```

A notebook with this code and corresponding error can be found at https://colab.research.google.com/drive/1... | https://github.com/huggingface/datasets/issues/4696 | [

"Hi @finiteautomata, thanks for reporting.\r\n\r\nUnfortunately, I'm not able to reproduce your issue:\r\n```python\r\nIn [1]: from datasets import load_dataset\r\n ...: dataset = load_dataset(\"lince\", \"ner_spaeng\")\r\nDownloading builder script: 20.8kB [00:00, 9.09MB/s] ... | null | 4,696 | false |

Add MANtIS dataset | This PR adds MANtIS dataset.

Arxiv: [https://arxiv.org/abs/1912.04639](https://arxiv.org/abs/1912.04639)

Github: [https://github.com/Guzpenha/MANtIS](https://github.com/Guzpenha/MANtIS)

README and dataset tags are WIP. | https://github.com/huggingface/datasets/pull/4695 | [

"_The documentation is not available anymore as the PR was closed or merged._",

"Thanks for your contribution, @bhavitvyamalik. Are you still interested in adding this dataset?\r\n\r\nWe are removing the dataset scripts from this GitHub repo and moving them to the Hugging Face Hub: https://huggingface.co/datasets... | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4695",

"html_url": "https://github.com/huggingface/datasets/pull/4695",

"diff_url": "https://github.com/huggingface/datasets/pull/4695.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4695.patch",

"merged_at": null

} | 4,695 | true |

Distributed data parallel training for streaming datasets | ### Feature request

Any documentations for the the `load_dataset(streaming=True)` for (multi-node multi-GPU) DDP training?

### Motivation

Given a bunch of data files, it is expected to split them onto different GPUs. Is there a guide or documentation?

### Your contribution

Does it requires manually spli... | https://github.com/huggingface/datasets/issues/4694 | [

"Hi ! According to https://huggingface.co/docs/datasets/use_with_pytorch#stream-data you can use the pytorch DataLoader with `num_workers>0` to distribute the shards across your workers (it uses `torch.utils.data.get_worker_info()` to get the worker ID and select the right subsets of shards to use)\r\n\r\n<s> EDIT:... | null | 4,694 | false |

update `samsum` script | update `samsum` script after #4672 was merged (citation is also updated) | https://github.com/huggingface/datasets/pull/4693 | [

"_The documentation is not available anymore as the PR was closed or merged._",

"We are closing PRs to dataset scripts because we are moving them to the Hub.\r\n\r\nThanks anyway.\r\n\r\n"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4693",

"html_url": "https://github.com/huggingface/datasets/pull/4693",

"diff_url": "https://github.com/huggingface/datasets/pull/4693.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4693.patch",

"merged_at": null

} | 4,693 | true |

Unable to cast a column with `Image()` by using the `cast_column()` feature | ## Describe the bug

A clear and concise description of what the bug is.

When I create a dataset, then add a column to the created dataset through the `dataset.add_column` feature and then try to cast a column of the dataset (this column contains image paths) with `Image()` by using the `cast_column()` feature, I ge... | https://github.com/huggingface/datasets/issues/4692 | [

"Hi, thanks for reporting! A PR (https://github.com/huggingface/datasets/pull/4614) has already been opened to address this issue."

] | null | 4,692 | false |

Dataset Viewer issue for rajistics/indian_food_images | ### Link

https://huggingface.co/datasets/rajistics/indian_food_images/viewer/rajistics--indian_food_images/train

### Description

I have a train/test split in my dataset

<img width="410" alt="Screen Shot 2022-07-15 at 11 44 42 AM" src="https://user-images.githubusercontent.com/6808012/179293215-7b419ec3-3527-46f2-8... | https://github.com/huggingface/datasets/issues/4691 | [

"Hi, thanks for reporting. I triggered a refresh of the preview for this dataset, and it works now. I'm not sure what occurred.\r\n<img width=\"1019\" alt=\"Capture d’écran 2022-07-18 à 11 01 52\" src=\"https://user-images.githubusercontent.com/1676121/179541327-f62ecd5e-a18a-4d91-b316-9e2ebde77a28.png\">\r\n\r\n... | null | 4,691 | false |

Refactor base extractors | This PR:

- Refactors base extractors as subclasses of `BaseExtractor`:

- this is an abstract class defining the interface with:

- `is_extractable`: abstract class method

- `extract`: abstract static method

- Implements abstract `MagicNumberBaseExtractor` (as subclass of `BaseExtractor`):

- this has a... | https://github.com/huggingface/datasets/pull/4690 | [

"_The documentation is not available anymore as the PR was closed or merged._"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4690",

"html_url": "https://github.com/huggingface/datasets/pull/4690",

"diff_url": "https://github.com/huggingface/datasets/pull/4690.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4690.patch",

"merged_at": "2022-07-18T08:34... | 4,690 | true |

Test extractors for all compression formats | This PR:

- Adds all compression formats to `test_extractor`

- Tests each base extractor for all compression formats

Note that all compression formats are tested except "rar". | https://github.com/huggingface/datasets/pull/4689 | [

"_The documentation is not available anymore as the PR was closed or merged._"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4689",

"html_url": "https://github.com/huggingface/datasets/pull/4689",

"diff_url": "https://github.com/huggingface/datasets/pull/4689.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4689.patch",

"merged_at": "2022-07-15T17:35... | 4,689 | true |

Skip test_extractor only for zstd param if zstandard not installed | Currently, if `zstandard` is not installed, `test_extractor` is skipped for all compression format parameters.

This PR fixes `test_extractor` so that if `zstandard` is not installed, `test_extractor` is skipped only for the `zstd` compression parameter, that is, it is not skipped for all the other compression parame... | https://github.com/huggingface/datasets/pull/4688 | [

"_The documentation is not available anymore as the PR was closed or merged._"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4688",

"html_url": "https://github.com/huggingface/datasets/pull/4688",

"diff_url": "https://github.com/huggingface/datasets/pull/4688.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4688.patch",

"merged_at": "2022-07-15T15:15... | 4,688 | true |

Trigger CI also on push to main | Currently, new CI (on GitHub Actions) is only triggered on pull requests branches when the base branch is main.

This PR also triggers the CI when a PR is merged to main branch. | https://github.com/huggingface/datasets/pull/4687 | [

"_The documentation is not available anymore as the PR was closed or merged._"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4687",

"html_url": "https://github.com/huggingface/datasets/pull/4687",

"diff_url": "https://github.com/huggingface/datasets/pull/4687.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4687.patch",

"merged_at": "2022-07-15T13:35... | 4,687 | true |

Align logging with Transformers (again) | Fix #2832 | https://github.com/huggingface/datasets/pull/4686 | [

"The docs for this PR live [here](https://moon-ci-docs.huggingface.co/docs/datasets/pr_4686). All of your documentation changes will be reflected on that endpoint.",

"I wasn't aware of https://github.com/huggingface/datasets/pull/1845 before opening this PR. This issue seems much more complex now ..."

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4686",

"html_url": "https://github.com/huggingface/datasets/pull/4686",

"diff_url": "https://github.com/huggingface/datasets/pull/4686.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4686.patch",

"merged_at": null

} | 4,686 | true |

Fix mock fsspec | This PR:

- Removes an unused method from `DummyTestFS`

- Refactors `mock_fsspec` to make it simpler | https://github.com/huggingface/datasets/pull/4685 | [

"_The documentation is not available anymore as the PR was closed or merged._"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4685",

"html_url": "https://github.com/huggingface/datasets/pull/4685",

"diff_url": "https://github.com/huggingface/datasets/pull/4685.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4685.patch",

"merged_at": "2022-07-15T12:52... | 4,685 | true |

How to assign new values to Dataset? |

Hi, if I want to change some values of the dataset, or add new columns to it, how can I do it?

For example, I want to change all the labels of the SST2 dataset to `0`:

```python

from datasets import l... | https://github.com/huggingface/datasets/issues/4684 | [

"Hi! One option is use `map` with a function that overwrites the labels (`dset = dset.map(lamba _: {\"label\": 0}, features=dset.features`)). Or you can use the `remove_column` + `add_column` combination (`dset = dset.remove_columns(\"label\").add_column(\"label\", [0]*len(data)).cast(dset.features)`, but note that... | null | 4,684 | false |

Update create dataset card docs | This PR proposes removing the [online dataset card creator](https://huggingface.co/datasets/card-creator/) in favor of simply copy/pasting a template and using the [Datasets Tagger app](https://huggingface.co/spaces/huggingface/datasets-tagging) to generate the tags. The Tagger app provides more guidance by showing all... | https://github.com/huggingface/datasets/pull/4683 | [

"_The documentation is not available anymore as the PR was closed or merged._"

] | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4683",

"html_url": "https://github.com/huggingface/datasets/pull/4683",

"diff_url": "https://github.com/huggingface/datasets/pull/4683.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4683.patch",

"merged_at": "2022-07-18T13:24... | 4,683 | true |

weird issue/bug with columns (dataset iterable/stream mode) | I have a dataset online (CloverSearch/cc-news-mutlilingual) that has a bunch of columns, two of which are "score_title_maintext" and "score_title_description". the original files are jsonl formatted. I was trying to iterate through via streaming mode and grab all "score_title_description" values, but I kept getting key... | https://github.com/huggingface/datasets/issues/4682 | [] | null | 4,682 | false |

IndexError when loading ImageFolder | ## Describe the bug

Loading an image dataset with `imagefolder` throws `IndexError: list index out of range` when the given folder contains a non-image file (like a csv).

## Steps to reproduce the bug

Put a csv file in a folder with images and load it:

```python

import datasets

datasets.load_dataset("imagefold... | https://github.com/huggingface/datasets/issues/4681 | [

"Hi, thanks for reporting! If there are no examples in ImageFolder, the `label` column is of type `ClassLabel(names=[])`, which leads to an error in [this line](https://github.com/huggingface/datasets/blob/c15b391942764152f6060b59921b09cacc5f22a6/src/datasets/arrow_writer.py#L387) as `asdict(info)` calls `Features(... | null | 4,681 | false |

Dataset Viewer issue for codeparrot/xlcost-text-to-code | ### Link

https://huggingface.co/datasets/codeparrot/xlcost-text-to-code

### Description

Error

```

Server Error

Status code: 400

Exception: TypeError

Message: 'NoneType' object is not iterable

```

Before I did a minor change in the dataset script (removing some comments), the viewer was working but... | https://github.com/huggingface/datasets/issues/4680 | [

"There seems to be an issue with the `C++-snippet-level` config:\r\n\r\n```python\r\n>>> from datasets import get_dataset_split_names\r\n>>> get_dataset_split_names(\"codeparrot/xlcost-text-to-code\", \"C++-snippet-level\")\r\nTraceback (most recent call last):\r\n File \"/home/slesage/hf/datasets-server/services/... | null | 4,680 | false |

Added method to remove excess nesting in a DatasetDict | Added the ability for a DatasetDict to remove additional nested layers within its features to avoid conflicts when collating. It is meant to accompany [this PR](https://github.com/huggingface/transformers/pull/18119) to resolve the same issue [#15505](https://github.com/huggingface/transformers/issues/15505).

@stas0... | https://github.com/huggingface/datasets/pull/4679 | [

"Hi ! I think the issue you linked is closed and suggests to use `remove_columns`.\r\n\r\nMoreover if you end up with a dataset with an unnecessarily nested data, please modify your processing functions to not output nested data, or use `map(..., batched=True)` if you function take batches as input",

"Hi @lhoestq... | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4679",

"html_url": "https://github.com/huggingface/datasets/pull/4679",

"diff_url": "https://github.com/huggingface/datasets/pull/4679.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4679.patch",

"merged_at": null

} | 4,679 | true |

Cant pass streaming dataset to dataloader after take() | ## Describe the bug

I am trying to pass a streaming version of c4 to a dataloader, but it can't be passed after I call `dataset.take(n)`. Some functions such as `shuffle()` can be applied without breaking the dataloader but not take.

## Steps to reproduce the bug

```python

import datasets

import torch

dset = ... | https://github.com/huggingface/datasets/issues/4678 | [

"Hi! Calling `take` on an iterable/streamable dataset makes it not possible to shard the dataset, which in turn disables multi-process loading (attempts to split the workload over the shards), so to go past this limitation, you can either use single-process loading in `DataLoader` (`num_workers=None`) or fetch the ... | null | 4,678 | false |

Random 400 Client Error when pushing dataset | ## Describe the bug

When pushing a dataset, the client errors randomly with `Bad Request for url:...`.

At the next call, a new parquet file is created for each shard.

The client may fail at any random shard.

## Steps to reproduce the bug

```python

dataset.push_to_hub("ORG/DATASET", private=True, branch="main")

... | https://github.com/huggingface/datasets/issues/4677 | [

"did you ever fix this? I'm experiencing the same",

"I am having the same issue. Even the simple example from the documentation gives me the 400 Error\r\n\r\n\r\n> from datasets import load_dataset\r\n> \r\n> dataset = load_dataset(\"stevhliu/demo\")\r\n> dataset.push_to_hub(\"processed_demo\")\r\n\r\n\r\n`reques... | null | 4,677 | false |

Dataset.map gets stuck on _cast_to_python_objects | ## Describe the bug

`Dataset.map`, when fed a Huggingface Tokenizer as its map func, can sometimes spend huge amounts of time doing casts. A minimal example follows.

Not all usages suffer from this. For example, I profiled the preprocessor at https://github.com/huggingface/notebooks/blob/main/examples/question_an... | https://github.com/huggingface/datasets/issues/4676 | [

"Are you able to reproduce this? My example is small enough that it should be easy to try.",

"Hi! Thanks for reporting and providing a reproducible example. Indeed, by default, `datasets` performs an expensive cast on the values returned by `map` to convert them to one of the types supported by PyArrow (the under... | null | 4,676 | false |

Unable to use dataset with PyTorch dataloader | ## Describe the bug

When using `.with_format("torch")`, an arrow table is returned and I am unable to use it by passing it to a PyTorch DataLoader: please see the code below.

## Steps to reproduce the bug

```python

from datasets import load_dataset

from torch.utils.data import DataLoader

ds = load_dataset(

... | https://github.com/huggingface/datasets/issues/4675 | [

"Hi! `para_crawl` has a single column of type `Translation`, which stores translation dictionaries. These dictionaries can be stored in a NumPy array but not in a PyTorch tensor since PyTorch only supports numeric types. In `datasets`, the conversion to `torch` works as follows: \r\n1. convert PyArrow table to NumP... | null | 4,675 | false |

Issue loading datasets -- pyarrow.lib has no attribute | ## Describe the bug

I am trying to load sentiment analysis datasets from huggingface, but any dataset I try to use via load_dataset, I get the same error:

`AttributeError: module 'pyarrow.lib' has no attribute 'IpcReadOptions'`

## Steps to reproduce the bug

```python

dataset = load_dataset("glue", "cola")

```

... | https://github.com/huggingface/datasets/issues/4674 | [

"Hi @margotwagner, thanks for reporting.\r\n\r\nUnfortunately, I'm not able to reproduce your bug: in an environment with datasets-2.3.2 and pyarrow-8.0.0, I can load the datasets without any problem:\r\n```python\r\n>>> ds = load_dataset(\"glue\", \"cola\")\r\n>>> ds\r\nDatasetDict({\r\n train: Dataset({\r\n ... | null | 4,674 | false |

load_datasets on csv returns everything as a string | ## Describe the bug

If you use:

`conll_dataset.to_csv("ner_conll.csv")`

It will create a csv file with all of your data as expected, however when you load it with:

`conll_dataset = load_dataset("csv", data_files="ner_conll.csv")`

everything is read in as a string. For example if I look at everything in 'n... | https://github.com/huggingface/datasets/issues/4673 | [

"Hi @courtneysprouse, thanks for reporting.\r\n\r\nYes, you are right: by default the \"csv\" loader loads all columns as strings. \r\n\r\nYou could tweak this behavior by passing the `feature` argument to `load_dataset`, but it is also true that currently it is not possible to perform some kind of casts, due to la... | null | 4,673 | false |

Support extract 7-zip compressed data files | Fix partially #3541, fix #4670. | https://github.com/huggingface/datasets/pull/4672 | [

"_The documentation is not available anymore as the PR was closed or merged._",

"Cool! Can you please remove `Fix #3541` from the description as this PR doesn't add support for streaming/`iter_archive`, so it only partially addresses the issue?\r\n\r\nSide note:\r\nI think we can use `libarchive` (`libarchive-c` ... | {

"url": "https://api.github.com/repos/huggingface/datasets/pulls/4672",

"html_url": "https://github.com/huggingface/datasets/pull/4672",

"diff_url": "https://github.com/huggingface/datasets/pull/4672.diff",

"patch_url": "https://github.com/huggingface/datasets/pull/4672.patch",

"merged_at": "2022-07-15T13:02... | 4,672 | true |

Dataset Viewer issue for wmt16 | ### Link

https://huggingface.co/datasets/wmt16

### Description

[Reported](https://huggingface.co/spaces/autoevaluate/model-evaluator/discussions/12#62cb83f14c7f35284e796f9c) by a user of AutoTrain Evaluate. AFAIK this dataset was working 1-2 weeks ago, and I'm not sure how to interpret this error.

```

Status cod... | https://github.com/huggingface/datasets/issues/4671 | [

"Thanks for reporting, @lewtun.\r\n\r\n~We can't load the dataset locally, so I think this is an issue with the loading script (not the viewer).~\r\n\r\n We are investigating...",

"Recently, there was a merged PR related to this dataset:\r\n- #4554\r\n\r\nWe are looking at this...",

"Indeed, the above mentioned... | null | 4,671 | false |

Can't extract files from `.7z` zipfile using `download_and_extract` | ## Describe the bug

I'm adding a new dataset which is a `.7z` zip file in Google drive and contains 3 json files inside. I'm able to download the data files using `download_and_extract` but after downloading it throws this error:

```

>>> dataset = load_dataset("./datasets/mantis/")

Using custom data configuration d... | https://github.com/huggingface/datasets/issues/4670 | [

"Hi @bhavitvyamalik, thanks for reporting.\r\n\r\nYes, currently we do not support 7zip archive compression: I think we should.\r\n\r\nAs a workaround, you could uncompress it explicitly, like done in e.g. `samsum` dataset: \r\n\r\nhttps://github.com/huggingface/datasets/blob/fedf891a08bfc77041d575fad6c26091bc0fce5... | null | 4,670 | false |

loading oscar-corpus/OSCAR-2201 raises an error | ## Describe the bug

load_dataset('oscar-2201', 'af')

raises an error:

Traceback (most recent call last):

File "/usr/lib/python3.8/code.py", line 90, in runcode

exec(code, self.locals)

File "<input>", line 1, in <module>

File "..python3.8/site-packages/datasets/load.py", line 1656, in load_dataset

... | https://github.com/huggingface/datasets/issues/4669 | [

"I had to use the appropriate token for use_auth_token. Thank you."

] | null | 4,669 | false |

Dataset Viewer issue for hungnm/multilingual-amazon-review-sentiment-processed | ### Link

https://huggingface.co/hungnm/multilingual-amazon-review-sentiment

### Description

_No response_

### Owner

Yes | https://github.com/huggingface/datasets/issues/4668 | [

"It seems like a private dataset. The viewer is currently not supported on the private datasets."

] | null | 4,668 | false |

Dataset Viewer issue for hungnm/multilingual-amazon-review-sentiment-processed | ### Link

_No response_

### Description

_No response_

### Owner

_No response_ | https://github.com/huggingface/datasets/issues/4667 | [] | null | 4,667 | false |

Subsets and Splits

No community queries yet

The top public SQL queries from the community will appear here once available.