Instructions to use LoneStriker/OpenCodeInterpreter-DS-6.7B-GGUF with libraries, inference providers, notebooks, and local apps. Follow these links to get started.

- Libraries

- llama-cpp-python

How to use LoneStriker/OpenCodeInterpreter-DS-6.7B-GGUF with llama-cpp-python:

# !pip install llama-cpp-python
from llama_cpp import Llama

llm = Llama.from_pretrained(
    repo_id="LoneStriker/OpenCodeInterpreter-DS-6.7B-GGUF",
    filename="OpenCodeInterpreter-DS-6.7B-Q3_K_L.gguf",
)

llm.create_chat_completion(
    messages=[
        {"role": "user", "content": "What is the capital of France?"}
    ]
)

- Notebooks

- Google Colab

- Kaggle

- Local Apps

- llama.cpp

How to use LoneStriker/OpenCodeInterpreter-DS-6.7B-GGUF with llama.cpp:

Install from brew

brew install llama.cpp
# Start a local OpenAI-compatible server with a web UI:
llama-server -hf LoneStriker/OpenCodeInterpreter-DS-6.7B-GGUF:Q4_K_M
# Run inference directly in the terminal:
llama-cli -hf LoneStriker/OpenCodeInterpreter-DS-6.7B-GGUF:Q4_K_M

Install from WinGet (Windows)

winget install llama.cpp
# Start a local OpenAI-compatible server with a web UI:
llama-server -hf LoneStriker/OpenCodeInterpreter-DS-6.7B-GGUF:Q4_K_M
# Run inference directly in the terminal:
llama-cli -hf LoneStriker/OpenCodeInterpreter-DS-6.7B-GGUF:Q4_K_M

Use pre-built binary

# Download pre-built binary from:
# https://github.com/ggerganov/llama.cpp/releases
# Start a local OpenAI-compatible server with a web UI:
./llama-server -hf LoneStriker/OpenCodeInterpreter-DS-6.7B-GGUF:Q4_K_M
# Run inference directly in the terminal:
./llama-cli -hf LoneStriker/OpenCodeInterpreter-DS-6.7B-GGUF:Q4_K_M

Build from source code

git clone https://github.com/ggerganov/llama.cpp.git
cd llama.cpp
cmake -B build
cmake --build build -j --target llama-server llama-cli
# Start a local OpenAI-compatible server with a web UI:
./build/bin/llama-server -hf LoneStriker/OpenCodeInterpreter-DS-6.7B-GGUF:Q4_K_M
# Run inference directly in the terminal:
./build/bin/llama-cli -hf LoneStriker/OpenCodeInterpreter-DS-6.7B-GGUF:Q4_K_M

Use Docker

docker model run hf.co/LoneStriker/OpenCodeInterpreter-DS-6.7B-GGUF:Q4_K_M
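
Because `llama-server` exposes an OpenAI-compatible API, any HTTP client can query it once it is running. The following is a minimal sketch using only the Python standard library. It assumes the server listens on `http://localhost:8080` (believed to be the `llama-server` default; change `base_url` if you started it with a different port) and that the response follows the usual OpenAI chat-completions shape. The helper names `build_chat_request` and `chat` are hypothetical, introduced only for this illustration.

```python
import json
import urllib.request

MODEL = "LoneStriker/OpenCodeInterpreter-DS-6.7B-GGUF"


def build_chat_request(prompt, base_url="http://localhost:8080", model=MODEL):
    """Build (but do not send) a POST request for /v1/chat/completions."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(prompt, **kwargs):
    """Send the request and return the assistant's reply text.

    Assumes an OpenAI-style response body: choices[0].message.content.
    """
    with urllib.request.urlopen(build_chat_request(prompt, **kwargs)) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

With a server running, `chat("Write a function that reverses a string.")` would return the reply as a string. The same sketch should work against any other OpenAI-compatible endpoint by passing a different `base_url`.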

- LM Studio

- Jan

- vLLM

How to use LoneStriker/OpenCodeInterpreter-DS-6.7B-GGUF with vLLM:

Install from pip and serve model

# Install vLLM from pip:
pip install vllm
# Start the vLLM server:
vllm serve "LoneStriker/OpenCodeInterpreter-DS-6.7B-GGUF"
# Call the server using curl (OpenAI-compatible API):
curl -X POST "http://localhost:8000/v1/chat/completions" \
  -H "Content-Type: application/json" \
  --data '{
    "model": "LoneStriker/OpenCodeInterpreter-DS-6.7B-GGUF",
    "messages": [
      {"role": "user", "content": "What is the capital of France?"}
    ]
  }'

Use Docker

docker model run hf.co/LoneStriker/OpenCodeInterpreter-DS-6.7B-GGUF:Q4_K_M

- Ollama

How to use LoneStriker/OpenCodeInterpreter-DS-6.7B-GGUF with Ollama:

ollama run hf.co/LoneStriker/OpenCodeInterpreter-DS-6.7B-GGUF:Q4_K_M

- Unsloth Studio

How to use LoneStriker/OpenCodeInterpreter-DS-6.7B-GGUF with Unsloth Studio:

Install Unsloth Studio (macOS, Linux, WSL)

curl -fsSL https://unsloth.ai/install.sh | sh
# Run unsloth studio
unsloth studio -H 0.0.0.0 -p 8888
# Then open http://localhost:8888 in your browser
# Search for LoneStriker/OpenCodeInterpreter-DS-6.7B-GGUF to start chatting

Install Unsloth Studio (Windows)

irm https://unsloth.ai/install.ps1 | iex
# Run unsloth studio
unsloth studio -H 0.0.0.0 -p 8888
# Then open http://localhost:8888 in your browser
# Search for LoneStriker/OpenCodeInterpreter-DS-6.7B-GGUF to start chatting

Using HuggingFace Spaces for Unsloth

# No setup required
# Open https://huggingface.co/spaces/unsloth/studio in your browser
# Search for LoneStriker/OpenCodeInterpreter-DS-6.7B-GGUF to start chatting

- Docker Model Runner

How to use LoneStriker/OpenCodeInterpreter-DS-6.7B-GGUF with Docker Model Runner:

docker model run hf.co/LoneStriker/OpenCodeInterpreter-DS-6.7B-GGUF:Q4_K_M

- Lemonade

How to use LoneStriker/OpenCodeInterpreter-DS-6.7B-GGUF with Lemonade:

Pull the model

# Download Lemonade from https://lemonade-server.ai/
lemonade pull LoneStriker/OpenCodeInterpreter-DS-6.7B-GGUF:Q4_K_M

Run and chat with the model

lemonade run user.OpenCodeInterpreter-DS-6.7B-GGUF-Q4_K_M

List all available models

lemonade list

OpenCodeInterpreter: Integrating Code Generation with Execution and Refinement

Introduction

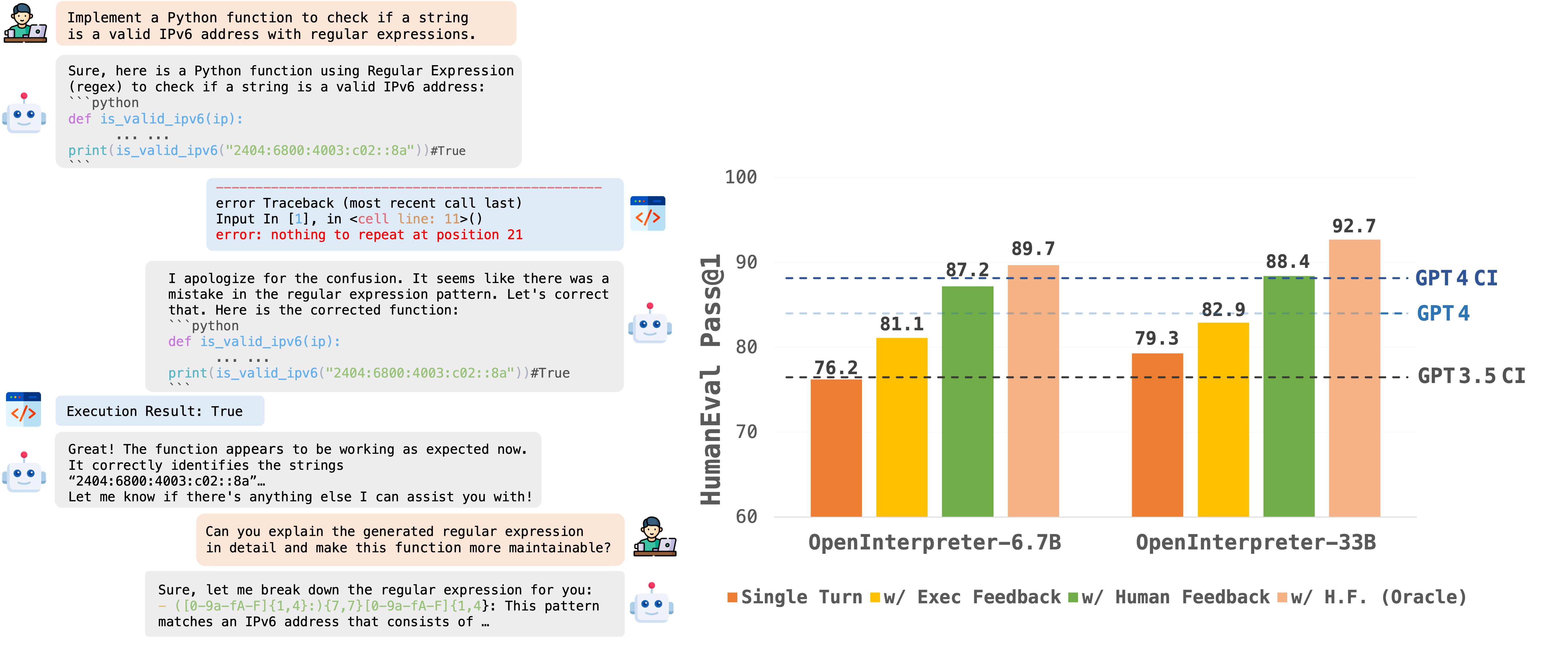

OpenCodeInterpreter is a family of open-source code generation systems designed to bridge the gap between large language models and advanced proprietary systems like the GPT-4 Code Interpreter. It significantly advances code generation capabilities by integrating execution and iterative refinement functionalities.

For further information and related work, refer to our paper: "OpenCodeInterpreter: A System for Enhanced Code Generation and Execution" available on arXiv.

Model Usage

Inference

import torch
from transformers import AutoTokenizer, AutoModelForCausalLM

# Load the tokenizer and model weights (bfloat16, placed automatically on available devices).
model_path = "OpenCodeInterpreter-DS-6.7B"
tokenizer = AutoTokenizer.from_pretrained(model_path)
model = AutoModelForCausalLM.from_pretrained(
    model_path,
    torch_dtype=torch.bfloat16,
    device_map="auto",
)
model.eval()

# Format the request with the model's chat template and move it to the model's device.
prompt = "Write a function to find the shared elements from the given two lists."
inputs = tokenizer.apply_chat_template(
    [{"role": "user", "content": prompt}],
    return_tensors="pt",
).to(model.device)

# Greedy decoding, up to 1024 new tokens.
outputs = model.generate(
    inputs,
    max_new_tokens=1024,
    do_sample=False,
    pad_token_id=tokenizer.eos_token_id,
    eos_token_id=tokenizer.eos_token_id,
)

# Decode only the newly generated tokens, skipping the prompt.
print(tokenizer.decode(outputs[0][len(inputs[0]):], skip_special_tokens=True))
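
The introduction describes OpenCodeInterpreter as combining generation with execution and iterative refinement. The sketch below illustrates what one such feedback turn might look like on the caller's side: it pulls the first fenced code block out of a reply, runs it in a subprocess, and builds a follow-up user message if the run fails. This is an illustration under stated assumptions, not the authors' pipeline. It assumes the reply wraps its code in a triple-backtick fence, the feedback wording is invented for this example, and the helper names (`extract_code`, `run_code`, `feedback_message`) are hypothetical. Running model-generated code is unsafe outside a sandbox.

```python
import re
import subprocess
import sys

# Built indirectly so the example can itself be shown inside a fenced block.
FENCE = "`" * 3
CODE_BLOCK = re.compile(FENCE + r"(?:python|py)?[ \t]*\n(.*?)" + FENCE, re.DOTALL)


def extract_code(reply):
    """Return the body of the first fenced code block in reply, or None."""
    match = CODE_BLOCK.search(reply)
    return match.group(1) if match else None


def run_code(code, timeout=10):
    """Run code in a fresh Python subprocess; return (ok, combined output).

    Raises subprocess.TimeoutExpired if the code runs longer than timeout.
    """
    proc = subprocess.run(
        [sys.executable, "-c", code],
        capture_output=True,
        text=True,
        timeout=timeout,
    )
    return proc.returncode == 0, proc.stdout + proc.stderr


def feedback_message(reply):
    """Build the next user turn from an execution failure.

    Returns None when the reply has no code block or the code ran cleanly.
    """
    code = extract_code(reply)
    if code is None:
        return None
    ok, output = run_code(code)
    if ok:
        return None
    return {
        "role": "user",
        # Illustrative wording only; not a format taken from the model card.
        "content": "Running the code produced this error:\n" + output + "\nPlease fix it.",
    }
```

To continue the conversation, append the assistant reply and the returned message to the message list, then call `tokenizer.apply_chat_template` and `model.generate` again as in the inference example.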

Contact

If you have any questions, please raise an issue or email us at xiangyue.work@gmail.com or zhengtianyu0428@gmail.com. We're here to help!
